Update: Square Enix is aware of this issue, has acknowledged its existence, and is working on an update for launch.

Although we don't believe this to be intentional, the Final Fantasy XV benchmark is among the most misleading we’ve encountered in recent history. This is likely a result of restrictive development timelines, a resistance to delaying product launch and, ultimately, developers seeing this as "just" a benchmark. That said, the benchmark is what people use to get an early idea of how their graphics cards will perform in the game, and from what we've seen, it does not accurately reflect reality. Not only does the benchmark lack technology shown in tech demonstrations (like strand deformation, which we hope will be added later), but it also takes performance hits for graphics settings that fail to materialize as visual fidelity improvements. Much of this stems from GameWorks settings, so we've been in contact with nVidia over these findings for the past few days.

As we discovered after hours of testing the utility, the FFXV benchmark is disingenuous in its execution: It renders load-intensive objects outside the camera frustum, resulting in a lower reported performance metric. We accessed the hexadecimal graphics settings to tune GameWorks options manually (made easier by exposing .INI files via a DLL), then later entered noclip mode to dig into some performance anomalies. Independently, we had discovered that toggling HairWorks on or off had a performance impact in areas where no hair existed. The only reason this would happen, aside from anomalous bugs or improper use of HairWorks (also likely, and not mutually exclusive), would be if the single hair-endowed creature in the benchmark were drawn at all times.

The benchmark is rendering creatures that use HairWorks even when they’re miles away from the character and the camera. Again, this became evident while running benchmarks in a zone with no HairWorks objects whatsoever – zero, none – at which point we realized, by accessing the game’s settings files, that disabling HairWorks still improved performance even with no HairWorks objects on screen. Validation is easy, too: By toggling each setting in the custom graphics settings file, we can individually confirm (1) when Flow is disabled (the fire effect changes), (2) when Turf is disabled (grass strands become textures or, potentially, particle meshes), (3) when Terrain Tessellation is enabled (the ground is visibly tessellated at the demo start; terrain is pushed down and deformed, while protrusions are pulled up), and (4) when HairWorks is disabled (buffalo hair becomes a planar alpha texture). By testing the default "High," "Standard," and "Low" presets, we can also confirm that the game's default GameWorks configuration is set to the following (High settings):

- VXAO: Off

- Shadow libs: Off

- Flow: On

- HairWorks: On

- TerrainTessellation: On

- Turf: On

Benchmarking custom settings matching the above produces performance identical to the benchmark launcher's preset, validating that these are the stock settings. We must use the custom settings approach because the presets offer no per-setting customization, and switching between Medium and High changes multiple settings simultaneously. To isolate whether a performance change comes from GameWorks rather than from view distance and other settings, we must test each GameWorks setting individually from a baseline configuration of "High."
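The one-at-a-time method can be sketched as a small test-matrix generator. This is a hypothetical illustration: The dictionary keys mirror the settings listed above, but the structure is not the benchmark's actual settings-file format, and `one_at_a_time_configs` is a name we made up for the example.

```python
# Hypothetical sketch of the one-at-a-time test matrix. The keys mirror the
# article's settings list; the dict layout is an assumption, NOT the
# benchmark's real settings-file format.
HIGH_BASELINE = {
    "VXAO": False,
    "ShadowLibs": False,
    "Flow": True,
    "HairWorks": True,
    "TerrainTessellation": True,
    "Turf": True,
}


def one_at_a_time_configs(baseline):
    """Return (label, config) pairs: the baseline first, then one config per
    enabled GameWorks option with only that option turned off."""
    configs = [("Baseline", dict(baseline))]
    for name, enabled in baseline.items():
        if enabled:
            cfg = dict(baseline)  # copy so the baseline itself is never mutated
            cfg[name] = False
            configs.append((f"{name} Off", cfg))
    return configs


for label, cfg in one_at_a_time_configs(HIGH_BASELINE):
    print(label, "->", sum(cfg.values()), "GameWorks options on")
```

Each toggled run differs from baseline by exactly one setting, which is what lets a performance delta be attributed to that setting alone.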

High-quality fur from 10,000 feet. The buffalo are still rendered even without Ansel, which we validated with performance testing far from their location. We believe this issue sits higher up in the engine pipeline, as Square Enix is calling the large host object (the buffalo), so this appears to be outside the scope of an nVidia/GameWorks-specific bug. Note that we also noticed non-HairWorks objects being rendered, like the bird (shown in the video) and the cactus.

Here's what's happening: The cows -- buffalo, whatever -- are being rendered at all times during the benchmark. This includes being rendered before trigger events, after trigger events, and even upon conclusion of the benchmark. The best example might be the campsite, after a clear change in both time of day and location: In the blackest of nights -- and this was hard to find -- the buffalo still roam, miles away and in the pitch black dark of a completed benchmark, still requiring system resources. Square Enix demonstrates functioning occlusion for other objects (rocks, for one), has selectively removed objects until the player reaches a trigger (the boathouse), and even has LOD lines drawn. All of this indicates that Square is fully capable of culling unseen objects, or reducing their LOD, but has failed to do so with the particularly resource-intensive buffalo, one of the only (the only?) objects that use HairWorks in the entire benchmark sequence.
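For readers unfamiliar with culling, below is a minimal sketch of the standard bounding-sphere frustum test, a generic textbook technique rather than Square Enix's engine code. It assumes a camera at the origin looking down +z, and the field-of-view and draw-distance values are made up for illustration. An object that fails any one plane test can be skipped entirely, which is the kind of check the buffalo never seem to fail.

```python
import math
from dataclasses import dataclass


@dataclass
class Plane:
    nx: float
    ny: float
    nz: float
    d: float  # with a unit-length normal, n·p + d is the signed distance to p

    def distance(self, x, y, z):
        return self.nx * x + self.ny * y + self.nz * z + self.d


def make_frustum(h_fov_deg, v_fov_deg, near, far):
    """Six inward-facing planes for a camera at the origin looking down +z.
    Camera placement and FOV values are illustrative assumptions."""
    h = math.radians(h_fov_deg) / 2
    v = math.radians(v_fov_deg) / 2
    return [
        Plane(0.0, 0.0, 1.0, -near),                  # near plane
        Plane(0.0, 0.0, -1.0, far),                   # far plane (draw distance)
        Plane(math.cos(h), 0.0, math.sin(h), 0.0),    # left
        Plane(-math.cos(h), 0.0, math.sin(h), 0.0),   # right
        Plane(0.0, math.cos(v), math.sin(v), 0.0),    # bottom
        Plane(0.0, -math.cos(v), math.sin(v), 0.0),   # top
    ]


def sphere_visible(frustum, cx, cy, cz, radius):
    """Return False only if the bounding sphere is fully outside some plane.
    The test is conservative: spheres near frustum corners may be kept."""
    return all(p.distance(cx, cy, cz) >= -radius for p in frustum)


camera_frustum = make_frustum(h_fov_deg=90.0, v_fov_deg=60.0, near=0.1, far=1000.0)
print(sphere_visible(camera_frustum, 0.0, 0.0, 50.0, 2.0))    # True: ahead, in range
print(sphere_visible(camera_frustum, 0.0, 0.0, 5000.0, 2.0))  # False: past far plane
```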

This isn’t an accidental cow. That doesn’t mean it’s a malicious cow, but it seems to be an oversight. That's not the only issue in the whole benchmark, though; GameWorks settings, as we display in the video, rarely show their merits in the benchmark. Turf is among the only visible effects, yet the framerate does fluctuate heavily. We anticipate bigger changes when the game ships. What this means for now, though, is that the benchmark is fully unreliable and unrealistic, failing to represent what the final game will hopefully be. And if it does represent the final game, well, that's another issue entirely.

We found an LOD line drawn along the map, where you can see the transition of detail – so Square Enix has marked this space as clearly not visible to the player, and yet still – way beyond the boundaries of this line, miles away – we see the cows in full detail. The game also contains examples of culling, selective removal of unseen objects, LOD scaling, and triggered object spawning and despawning.
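LOD scaling of the kind visible along that line is commonly driven by distance from the camera. The sketch below uses a hypothetical three-band LOD table (the thresholds are invented for illustration and are not FFXV's) and returns `None` once an object is past the last band, meaning it should not be drawn at all.

```python
import math

# Hypothetical LOD table: (max_distance_in_metres, lod_level).
# Values are illustrative only; the game's real thresholds are unknown.
LOD_BANDS = [(50.0, 0), (200.0, 1), (800.0, 2)]


def select_lod(camera, obj, bands=LOD_BANDS):
    """Return the LOD index for an object (0 = full detail), or None if it
    lies beyond the last band and should be skipped entirely."""
    dist = math.dist(camera, obj)  # Euclidean distance (Python 3.8+)
    for max_dist, lod in bands:
        if dist <= max_dist:
            return lod
    return None


print(select_lod((0.0, 0.0, 0.0), (10.0, 0.0, 0.0)))     # 0
print(select_lod((0.0, 0.0, 0.0), (29000.0, 0.0, 0.0)))  # None
```

With this table, an object 18 miles (roughly 29 km) from the camera gets `None`; the cows, by contrast, are drawn in full detail at that kind of range.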

Above: Both culling and LOD scaling are demonstrated in this image, as is selective object removal.

Square Enix demonstrates in its own benchmark that the company is capable of selective object removal, culling, and triggered spawns, and yet the load-intensive cows remain, taxing GPUs even when their scene is 2 minutes ahead of or 4 minutes behind the camera. To be very clear here: The cows, endowed with HairWorks (significant for its load generation), are being rendered by the GPU even when the player has no line of sight, and will have no line of sight for several minutes. They are still rendered after cutscenes, they are rendered after nightfall; they are always rendered.

Above: A road found under the world -- one of many objects rendered under the map.

(We'd strongly encourage watching the accompanying video for this one -- the visual factor is significant for this piece).

There are even game objects, not GameWorks-endowed, that are rendered out of bounds of the map. In noclip, we found fully detailed tombs (as in, enclosed behind doors), including high LOD textures and meshes, pieces of road, houses submerged under the earth and below the level, and more. These objects don't strain AMD or nVidia in uneven ways (and do not use GameWorks), so we don't believe there is malicious intent here; however, they further emphasize that the benchmark is unreliable as a tool for performance approximation, regardless of GPU vendor.

This investigation stemmed from the realization that a 90-second benchmark, which never reaches the hairy mammals (it cuts off just before them), still experiences significant framerate drops on both AMD and nVidia hardware. NVidia hardware saw a 15% framerate improvement with HairWorks disabled, even in just the first 30 seconds of the bench, while AMD saw a 37% improvement in the same area.

We even validated that the grass specifically uses TurfWorks, not HairWorks, as we had suspected the two might be semi-interchangeable. The only non-cow object that might use HairWorks would be the characters, and we know that the characters aren’t currently using HairWorks for two main reasons: One, there’s no dynamic movement of hair as the characters move, and two, by using nVidia Ansel, we can fly into a character’s head and see that the hair is drawn using alpha textures.

The hair is attached to a plane on the character’s head; meanwhile, looking inside the buffalo, we see individual strands of hair protruding from the body, clearly indicating HairWorks integration.

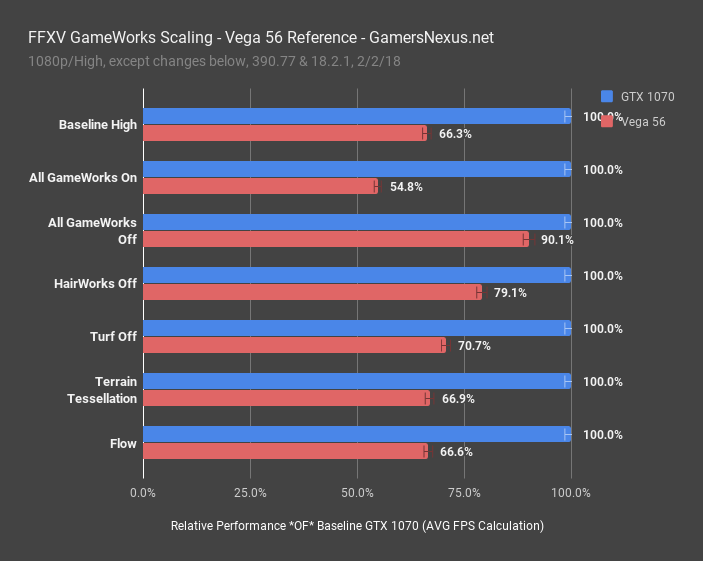

Let’s look at those GameWorks settings charts. This is using the same test platform as yesterday’s GPU benchmark.

Before even getting to the details and comparisons, this chart of relative performance scaling shows how the AMD GPU is affected unequally by GameWorks settings. NVidia’s GTX 1070 serves as the baseline, marked at 100%. With stock 1080p/High settings, the Vega 56 card maintains 66.3% of the GTX 1070’s performance. When we completely disable GameWorks, something typically impossible while still maintaining equal graphics settings, the Vega 56 card maintains 90% of the GTX 1070’s performance – up from the 66.3% baseline, that’s a massive gain, and it illustrates uneven performance scaling. For perspective, nVidia cards scale with one another almost 1:1 on this same chart. This unequal impact isn't news, as nVidia creates the technology, optimizes for it, and has presumably had closer access to the title. AMD, meanwhile, is still working on its launch-day drivers (no FFXV drivers were released for this benchmark utility).
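The relative-scaling chart normalizes every card against the GTX 1070. A minimal sketch of that normalization follows; the FPS values are placeholders chosen for illustration, not our measured results, and `relative_scaling` is a hypothetical helper name.

```python
def relative_scaling(avg_fps, baseline="GTX 1070"):
    """Express each card's average FPS as a percentage of the baseline card's."""
    base = avg_fps[baseline]
    if base <= 0:
        raise ValueError("baseline FPS must be positive")
    return {card: 100.0 * fps / base for card, fps in avg_fps.items()}


# Placeholder numbers for illustration only; these are NOT measured results.
example = {"GTX 1070": 80.0, "Vega 56": 72.0}
print(relative_scaling(example))  # {'GTX 1070': 100.0, 'Vega 56': 90.0}
```

Because every configuration is normalized to the same card, the chart shows how the gap between vendors changes per setting, rather than raw framerate.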

As for what’s specifically causing the biggest performance hits, that award goes to HairWorks; disabling it alone grants Vega a massive performance uplift (and the GTX 1070 a smaller, but still large, uplift). Turf is the next most responsible, and is what Square Enix is using for its grass technology.

For terrain tessellation, our initial hypothesis was completely wrong: There’s almost no impact. Despite AMD’s historical troubles with tessellation, it appears the newer geometry pipeline is coping well, and is busy getting smashed by geometry from hair, anyway. We've also noticed that terrain tessellation goes away as the benchmark progresses, later being replaced with more traditional normal maps. The impact of this setting could change at game launch, assuming Square Enix makes more use of terrain tessellation.

Above: Terrain tessellation enabled at game start.

Below: Later in the benchmark, with terrain tessellation still enabled, we see the ground has reverted to a normal map.

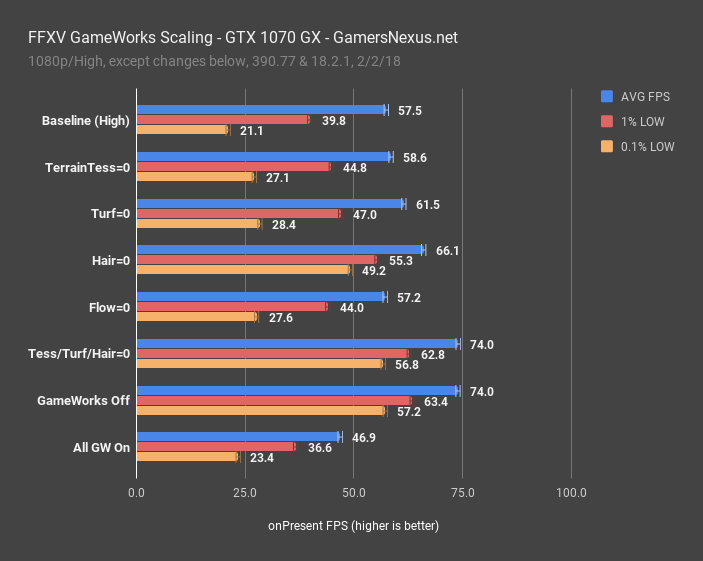

Before getting the FPS charts on screen, this is the settings configuration we’re using for these benchmarks: We’re toggling off GameWorks options under High, one at a time, while leaving the other default options enabled. The stock “High” configuration enables Flow, HairWorks, Turf, and Terrain Tessellation, with VXAO & Shadow Libraries disabled. When reading the charts, always compare each toggled option against baseline; this shows which option grants the greatest performance uplift.

First, note that AMD still has a lot of work to do optimizing this game. Even more so if hair is being rendered 18 miles from the camera. Even with GameWorks completely disabled, the Vega 56 card is operating at a 10% deficit to nVidia – that’s not unreasonable, but some optimization should help Vega along significantly. Ultimately, though, the frametime performance is still undesirable under all settings, even with GameWorks completely disabled. AMD does have drivers for this game coming; we’re told to expect them closer to launch, and we’ll revisit at that time to see if Vega improves in frametimes.

More important, however, is the performance delta between the various settings. Using just the native 1080p/High settings, with our 90-second benchmark, we recorded 38FPS AVG across multiple runs. Disabling Turf and HairWorks nearly doubled our framerate, which puts the perceived performance capabilities into perspective. We don’t encounter Flow very much in the benchmark (the fire at the end), so we can ignore it, and terrain tessellation also has much less impact than we expected. Versus baseline, AMD gains an insane 37% performance by disabling HairWorks while leaving all the other native GameWorks technologies enabled. NVidia, meanwhile, gains about 15% under the same conditions. With VXAO and Shadow Libraries also enabled, tested under the “All GameWorks On” category, all devices take a further tumble, but these settings appear to be disabled by default.
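An uplift from disabling a setting and a deficit from enabling it use different denominators, so the same pair of runs produces two different percentages. The gains above are stated relative to baseline. The sketch below uses hypothetical runs (100 FPS with the setting on, 137 FPS with it off) to show how a 37% uplift corresponds to roughly a 27% deficit; the function names are our own.

```python
def uplift_pct(fps_on, fps_off):
    """Gain from disabling a setting, relative to the setting-on baseline."""
    return 100.0 * (fps_off - fps_on) / fps_on


def deficit_pct(fps_on, fps_off):
    """Cost of enabling a setting, relative to the setting-off result."""
    return 100.0 * (fps_off - fps_on) / fps_off


# Hypothetical runs for illustration, not measured data.
print(round(uplift_pct(100.0, 137.0), 1))   # 37.0
print(round(deficit_pct(100.0, 137.0), 1))  # 27.0
```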

Here’s the GTX 1070’s handling of the same settings.

First off, note that we complained about frametimes on nVidia cards in our GPU benchmark, showing that the company had trouble processing its own GameWorks features without tripping over wide frame-to-frame intervals. The result was occasional stutter and more inconsistent frame creation times. We can finally illustrate this: At baseline, our 0.1% lows were 21FPS, which, although better than AMD’s, isn't really better in terms of AVG-to-low relative scaling. Disabling HairWorks specifically grants us a major uplift in frametime performance, but just a moderate one in AVG FPS. The average increases by 15%, with the lows more than doubling.
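For context on how an average and a 0.1% low can diverge, here is a minimal sketch that derives both from a list of frametimes. The "low" definition is an assumption: It averages the slowest 0.1% of frames and converts that to FPS, which is one common approach; other tools use a percentile cutoff instead, and this is not necessarily our exact tooling.

```python
def fps_metrics(frametimes_ms, low_fraction=0.001):
    """Return (average FPS, 'low' FPS) from per-frame times in milliseconds.

    Assumption: the low is the mean of the slowest `low_fraction` of frames,
    converted to FPS. Other tools define lows via a percentile instead.
    """
    if not frametimes_ms:
        raise ValueError("need at least one frame")
    # Average FPS is total frames over total time, not the mean of per-frame FPS.
    avg_fps = 1000.0 * len(frametimes_ms) / sum(frametimes_ms)
    slowest = sorted(frametimes_ms, reverse=True)
    n = max(1, int(len(slowest) * low_fraction))
    low_fps = 1000.0 * n / sum(slowest[:n])
    return avg_fps, low_fps


# Synthetic run: 999 smooth 10 ms frames plus one 50 ms stutter.
avg, low = fps_metrics([10.0] * 999 + [50.0])
print(round(avg, 1), round(low, 1))  # 99.6 20.0
```

A single stutter barely moves the average but dominates the 0.1% low, which is why frametime consistency can improve sharply even when AVG FPS changes only moderately.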

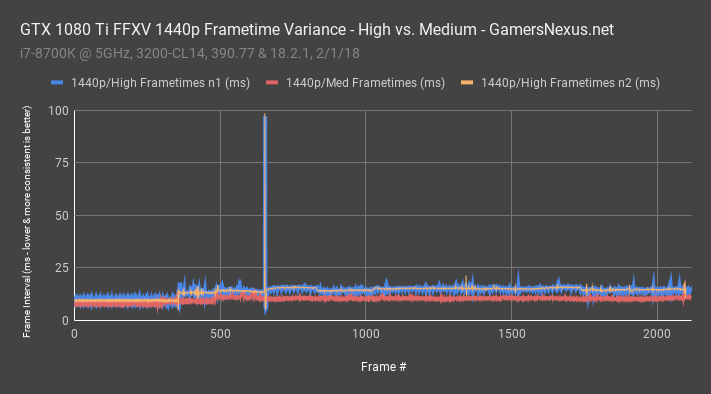

Here's a reminder of the frametime impact on nVidia hardware, toggling between "High" and "Medium" settings (which disabled all GameWorks options, among reductions elsewhere):

GameWorks seems to be hurting performance for no apparent gain, aside from some grass detail and, in one location, some hair detail. Again, just as a reminder: Toggling settings like HairWorks has a performance impact even when testing in areas bereft of the corresponding effect, even without using Ansel and just running the native benchmark (lest there be any doubt).

And that’s the big deal. This isn’t just needless rendering of an unevenly intensive object out-of-frustum; it’s a noticeable, measurable performance impact in exchange for no noteworthy visual quality improvement. This benchmark isn’t representative of the final game (and if it is, well, yikes), so we’d anticipate that the remaining 97GB of game content will address much of the current imbalance of high performance hits for little visual gain. As it stands now, we’ve noticed:

- There’s terrain tessellation at the beginning (Square is using tessellation to push the road down and pull the rocks out). There’s no big impact on performance, though, at less than 2FPS per card, and terrain tessellation does not persist through the entire benchmark.

- Grass: Turf gives taller blades, but they don’t seem to conform to player movement as in the promised tech demos. Either way, Turf produces denser strands, which we think might be particle meshes, versus flat textures for grass on the ground. The result is that disabling TurfWorks gives a patchier, balder look to the ground. Whether or not that’s worth a performance hit is up to you, although nVidia users don’t have as much to worry about – we plotted about a 6.5% deficit on the GTX 1070 for Turf, whereas AMD plotted a 12% performance deficit.

- We were left wondering where HairWorks is impacting our benchmarks. During our timed test, the benchmark cuts off just before the furry buffalo enter the shot, meaning that they don’t factor into our test data. The buffalo, as we found through noclip flight, are being drawn at all times.

This benchmark isn’t the game – they aren’t the same thing, and the benchmark is not representative of the game. We hope it isn't, anyway.

But that’s the problem. This isn’t representative of the game, and yet, as a high-profile title, people are basing GPU purchases off of the benchmark results. This goes beyond “AMD vs. NVIDIA” – even if we assume a vacuum where a user only buys from one vendor, that user is still being misled into purchasing overly powerful hardware. Say someone only buys nVidia, the company clearly advantaged here – that someone might jump to a 1080 Ti or a 1080, rather than a perfectly capable 1070, and that’s entirely because the benchmark (emphasis: not the game) makes things look more demanding than reality. The benchmark, what with its unimpressive implementation of otherwise promising graphics technologies, shows upwards of 40% performance dips from settings that realize no visual improvement. Again, this is with fixed high graphics settings outside of GameWorks. Our video embedded in this article demonstrates on vs. off GameWorks implementations, clearly showing that grass is the only realized, global effect. We’re not complaining that GameWorks is more taxing on AMD than nVidia – that’s to be expected, as it is nVidia software, and is a separate discussion – but that there is no visual improvement conveyed in the benchmark utility, yet there most certainly is numerical impact to benchmark numbers.

The fact of the matter is that these results are poisoned by buggy or incompetent execution, largely attributable to Square Enix: needlessly rendered objects and scenes, and needless rendering of highly intensive settings that unevenly impact the GPU vendors. The aggregate numbers are further poisoned by a lack of control, poisoned twice more by the ability to break the benchmark scoring by going off-rails, and one more time by the locked-down graphics settings. Had Square Enix granted the ability to tune these settings manually, we would have found this out much sooner. Instead, with help from reddit users, we had to manually uncover all of these profound inadequacies in the FFXV benchmark, long after most users had already found numbers to inform their purchase decisions.

This comes down to rendering unnecessary detail and being disingenuous about the intentions of the benchmark, which were to demonstrate the game, and we're not confident that this tool represents the final product. It’s likely that Square Enix will optimize this later. NVidia walks away looking bad here, as its technology and brand are plastered all over the game’s digital box art, with its competitor receiving the worst end of the perceived performance deficit (although both brands suffer, AMD undoubtedly suffers more – and for no visual gain). Although we don't believe this to be malicious, nVidia does ultimately have some responsibility to ensure its ISVs are properly implementing the GameWorks technology. We have been in contact with nVidia for the last few days, and we know that the company is working diligently with Square Enix to address and resolve the issues raised within this content. AMD isn’t off the hook either, granted, as the company’s Vega 56 operates at a deficit to the 1070, but AMD’s deficit falls under the more usual, expected categories of driver tuning and architectural differences. AMD has to work on its frametime consistency – but again, we’re basing this off of a flawed benchmark utility, so we’ll have to re-evaluate all devices when the game launches. We’d put most of the blame on Square Enix for a clearly rushed, poorly thought-out benchmark utility. Its execution is lazy, with (actually rendered) objects scattered throughout an unviewable, subterranean void, and the result is that GPUs look significantly underpowered, with one vendor looking needlessly and excessively disadvantaged.

We liked this benchmark utility at the start – automation and scripting came easily, and consistency wasn’t so bad once kinks were figured out. Now, unfortunately, having personally discovered all of these issues, we strongly recommend against basing any purchases off of the utility. We’d encourage waiting until the game ships, then re-evaluating to see if Square Enix has reconsidered its decision to burden GPUs with occluded-but-rendered fur. We’re not sure precisely what nVidia’s involvement with development was, as the company doesn't make or code the game, but there is no doubt that nVidia’s brand adorns the game’s pages up and down. Whether or not nVidia shares blame with Square Enix is irrelevant – collateral damage from an already-controversial GameWorks package will include the company. Given the sensitivity of GameWorks, some care in addressing this issue would benefit the image of all involved parties. NVidia has indicated to GamersNexus that the game is being looked at more closely. We will share updates as we receive them. Even if this is "just a bug," it is one that largely invalidates the reliability of the utility.

As it stands now, this benchmark is functionally useless as a means of determining component value. It is bereft of realism and plagued with, at best, optimization issues. We hope that this changes with the final game release; again, the benchmark is just 3.7GB, and the game will exceed 100GB. The point, though, is that the benchmark is likely unrepresentative of the final game, and therefore useless outside of synthetic testing and academic studies of performance. We believe that this is primarily on Square Enix, and ask that the pitchforks be held until the final game launches. We will revisit at that time. The appropriate parties are aware of our concerns, and are actively investigating. Although the GameWorks options aren't fully visualized and realized in this current benchmark (the conformity of grass upon player interaction, for example), it is our understanding that this will change with launch of the game. It would appear that Square Enix shipped a benchmark which is not only incomplete (see the buggy rendered objects throughout the void), but is also inconsistent (character spawns, camera movements) and does not properly integrate GameWorks. Until Square Enix can properly enable GameWorks settings and properly cull unnecessary objects that place high load on the system, we can't rely on the benchmark utility for relative performance. That's all this comes down to -- Square Enix is falling victim to the usual high-paced development timelines, and has rushed something out which fails to accurately depict three things: The relative performance on all hardware, the relative performance between AMD and nVidia hardware, and the visual impact of GameWorks effects (which we expect to change later, but we'll see). We have no doubt that, given GameWorks' controversial past, this will lead to calls of intentional deceit by nVidia. Although we allow for the possibility, we also urge that development incompetence is the more likely cause of improper graphics implementation and improper object culling. If that doesn't turn out to be the case in the final launch, we'll naturally let you all know. The teams have about a month to finalize the content. Although the benchmark is on the same engine, it only contains about 4% of the game's data. Hard to get too conclusive, knowing that.

Square Enix is responsible for the higher-pipeline functions of calling and rendering objects, like the buffalo, and it's not like GameWorks has the ability to reach outside of the world, grab assets, and force them into the game. We would put this squarely on Square Enix, for now, but nVidia does have some responsibility in getting the rest of their GameWorks features fully integrated and working (even if Square is ultimately coding it, nVidia has its name on the product).

What we do know is that the benchmark is unreliable, and we suggest not using it. Wait for our final launch benchmarks. This will take more time to research, but we will keep our eye on it and are in touch with the teams responsible for the game's graphics.

If you need more visual examples, check the accompanying video for this piece.

Editorial, Testing: Steve Burke

Additional Testing: Patrick Lathan

Video, Additional Research: Andrew Coleman