Exploratory Look at Fan-Requested "Multitasking" Benchmarks: G4560 & 1200


Ever since AMD’s high-core-count Ryzen lineup entered the market, there seems to be an argument in every comment thread about multitasking and which CPUs handle it better. Our clean, controlled benchmarks don’t account for the demands of eighty browser tabs and Spotify running, so we get constant requests to do in-depth testing on the subject. The general belief is that more threads are better able to handle more processes, a hypothesis that would increasingly favor AMD.

There are a couple of reasons we haven’t included tests like these all along: first, “multitasking” means something completely different to every individual, and second, adding uncontrolled variables (like bloatware and network-attached software) makes tests less scientific. Originally, we hoped this article would reveal any hidden advantages that might emerge between CPUs when adding “multitasking” to the mix, but it’s ended up as a thorough explanation of why we don’t do benchmarks like this. We’re primarily using the R3 1200 and G4560 to run these trials.

This is the kind of testing we do behind-the-scenes to build a new test plan, but often don’t publish. This time, however, we’re publishing the trials of finding a multitasking benchmark that works. The point of publishing the trials is to demonstrate why it’s hard to trust “multitasking” tests, and why it’s hard to conduct them in a manner that’s representative of actual differences.

In listening to our community, we’ve learned that a lot of people seem to think Discord is multitasking, or that a Skype window is multitasking. Here’s the thing: If you’re running Discord and a game and you’re seeing an impact to “smoothness,” there’s something seriously wrong with the environment. That’s not even remotely close to enough of a workload to trouble even a G4560. We’re not looking at such a lightweight workload here, and we’re also not looking at the “I keep 100 tabs of Chrome open” scenarios, as that’s wholly unreliable given Chrome’s unpredictable caching and behaviors. What we are looking at is 4K video playback while gaming and bloatware while gaming.

In this piece, the word “multitasking” will be used to describe “running background software while gaming.” The term "bloatware" is being used loosely to easily describe an unclean operating system with several user applications running in the background.

Test Pattern Options

We narrowed potential workloads down to a few things our readers do regularly while playing games:

- Watching a video

- Recording a video

- Logging performance (with a tool like AIDA64)

- Participating in a Discord or Skype call

- Running a bunch of awful, awful bloatware in the background

Don’t get too excited about that list, though: there’s a reason this article isn’t titled “multitasking test that was easy and also worked really well.” Results are included for the fifth item (background bloatware), but there were problems that make them unreliable at best.

The R3 1200 and Pentium G4560 were chosen as test subjects. Budget CPUs made the most sense, since high-end CPUs should have no problem playing a video and a game at the same time (and if they do, there’s probably something else going on). The G4560 in particular has been a target for “bad at multitasking” complaints because of its two physical cores.

The R3 1200 performed nearly identically to the G4560 in our original benchmarks, especially in the four games we chose to test: Ashes of the Singularity: Escalation, Metro: Last Light, Rocket League, and Total War: Warhammer. We chose this pairing to make it easier to roughly compare performance changes. We wanted baselines that are nearly identical for the starting point, so that we eliminate some of the complications of separating absolute performance from relative performance decay caused by multitasking.

Two monitors were used for all tests, even the baseline FPS benchmarks. Games were run at 1920x1080 fullscreen. Benchmarks were done in multiple passes, 150 seconds apiece, and were not the same as our usual benchmark scenes, nor did they use the same hardware.

- CPUs: R3 1200, G4560

- Motherboards: ASUS Crosshair VI Formula, MSI Z270 Gaming Pro Carbon

- RAM: 16GB GeIL 3200MHz (for each)

- PSU: 750W EVGA G2L

- GPU: GTX 1080 FTW

THE TEST

Video Testing

We started with a simultaneous video/game benchmark. We know Google Chrome is the #1 “other thing” that our readers have open while gaming, but decided against using it for testing. It’s not a good starting point. Chrome is updated constantly, and there are so many little optimizations built-in (caching, not loading tabs until they’re active, etc.) that we have no confidence in it as a consistent benchmarking tool. Instead, we used 64-bit VLC.

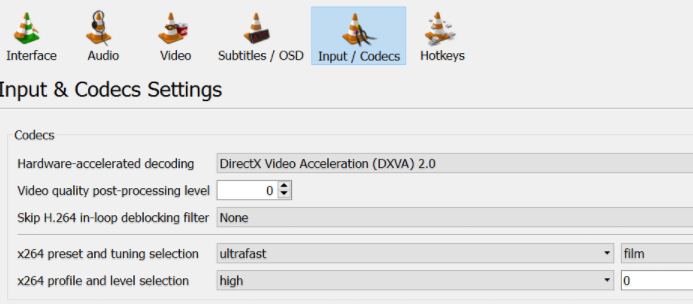

VLC was attractive because it has an expansive options menu and because it can count dropped frames, but it also came with some issues. Out of the box, VLC was hitting nearly 100% CPU usage while watching a 4K video. Toggling some options in the Codecs section (as seen above) helped with that, but then the software was no longer stock. In addition, here’s a little sample of what it took to log a single benchmark run:

Windowed mode is mentioned because VLC was crashing when certain games were launched in fullscreen. Logging the framerate of video playback was important, since one of the issues people experience is video choppiness, not just loss of framerate in-game. That meant using two framerate loggers on two windows at the same time. We considered switching to Windows Media Player, but playback was being detected at more than 60 frames per second (of a 60 FPS video), which indicates possible Microsoft shenanigans. Video testing was shelved for the time being and we moved on.

The Nuclear Option

At this point, the bloatware option looked the most reliable. The scenario we created isn’t especially likely, but it’s possible, and it was designed to hurt performance in a definitive way so that comparisons could be made. Behold, an abomination:

Game clients: Blizzard, Origin, Uplay, and Steam

Monitoring software: HWiNFO64, MSI Afterburner

Chat clients: Discord (in call), Skype

Hardware customization clients: NZXT CAM, Corsair Utility Engine, Logitech Gaming Software (with Overwolf running but unused)

Productivity software: TeamViewer, Dropbox (both installed but not actively used)

Other: VLC (looping an MP3)

These programs were installed from the same files on both boot drives, which now need to be thoroughly wiped before they’re reused.

Here’s the problem: At least half of these programs are internet-attached, but that’s what people keep asking us to test – so we did. Another thing: If we got these benchmarks working in a satisfactory manner, we’d want to look at performance loss from baseline to multitasking, not outright performance. Outright performance has the R3 and G4560 starting at different baselines in some games.
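As a rough illustration of what "performance loss from baseline" means, here is a minimal Python sketch. The helper name `percent_loss` is hypothetical, and the inputs are the approximate G4560 averages for Metro: Last Light quoted later in this article (about 84FPS baseline, about 75FPS with bloatware). It is not the tooling we used, just the arithmetic.

```python
def percent_loss(baseline_fps: float, loaded_fps: float) -> float:
    """Return the performance lost relative to the baseline, in percent.

    A positive result means the loaded run was slower than the baseline.
    """
    if baseline_fps <= 0:
        raise ValueError("baseline_fps must be positive")
    return (baseline_fps - loaded_fps) / baseline_fps * 100.0


# Approximate G4560 averages from the Metro: Last Light results in this article.
g4560_loss = percent_loss(baseline_fps=84.0, loaded_fps=75.0)
print(f"G4560 relative loss: {g4560_loss:.1f}%")  # prints "G4560 relative loss: 10.7%"
```

Comparing relative loss this way sidesteps the different starting baselines, but it does nothing to fix the underlying problem of unpredictable background software.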

Testing Background Applications & Gaming

For some reason, these processes affected the R3 1200 much more heavily, even at idle. We don’t believe this is because the G4560 is inherently better, but it seemed as though things were being handled differently on the AMD platform. After further digging, we found the culprit: the Blizzard client (or Battle.net, or whatever it’s called by the time this is published) was the biggest contributor to the 1200’s performance loss, but barely affected the G4560 during its test pass the day prior.

Blizzard’s client was too unreliable to leave open during the benchmark, but it wasn't alone. Other software also behaved unpredictably. Beginning with expectations, most of you would agree that it is reasonable to assume one of two primary outcomes when playing a game while Battle.net is open: Either there’s effectively no performance difference between the G4560 and R3, or there’s a slight advantage for the R3. What you wouldn’t expect, though, is for the G4560 to outperform the R3 by 31%. But that’s what happened, and we can show you why.

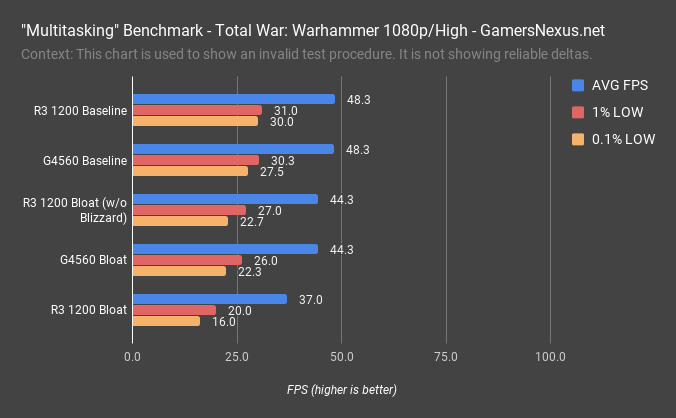

We’ve got a few main figures, here: The G4560 baseline is 48.3FPS, with lows at 30.3 and 27.5FPS. The R3 1200 is remarkably close by, with the two parts more or less tied and within variance in the frametime department.

With these background applications, including popularly requested game clients like Battle.net and Steam, the G4560 loses 4FPS AVG, or about 8% of its performance. The hit to frametimes is a bit worse, as illustrated by the 1% lows and 0.1% lows. Here’s a surprise, though: The R3 1200 drops 11FPS, or 24%, and its 0.1% lows are halved, which corresponds to more stutter.

Clearly something went wrong.

We started disabling applications, ultimately finding that Battle.net decided to do something in the background during the R3 tests. Cutting Battle.net from the R3 test bumps the R3 1200 back up to G4560 performance, with the two more or less tied once again.

Again, these are not definitive benchmarks. We are not telling you that one of these CPUs is better than the other in “multitasking,” or even that they are tied in “multitasking.” What we’re telling you is that it’s difficult to create a reliable testing scenario with the applications that everyone wants to see benchmarked. You’re going to get wrong data.

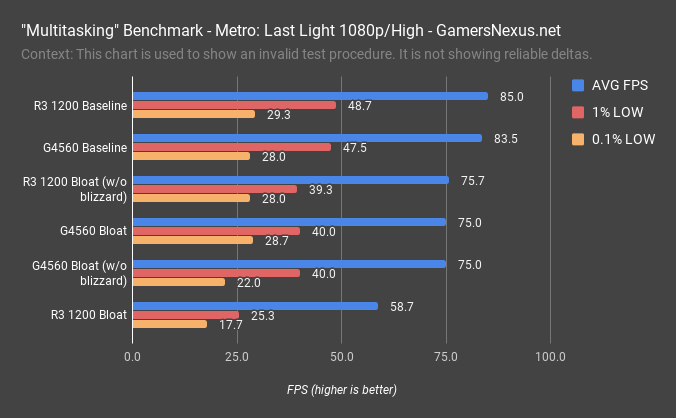

Metro: Last Light + Multitasking

Here’s Metro: Last Light between the same CPUs: Baseline, we’ve got the G4560 at about 84FPS and R3 1200 at about 85FPS AVG, with lows more or less equal. The G4560 drops to 75FPS AVG with bloatware, or about 10% performance loss. The R3 1200 drops 31% of its performance – again, because of completely unpredictable internet-attached software in the background – and bumps to a more proportional loss when we drop Battle.net.

And who knows what other software was responsible for dropping some of our frames, here. We got lucky in even figuring out it was Blizzard, because retesting the R3 1200 doesn’t always produce those dismal results. It just depends on what Battle.net is doing and when.

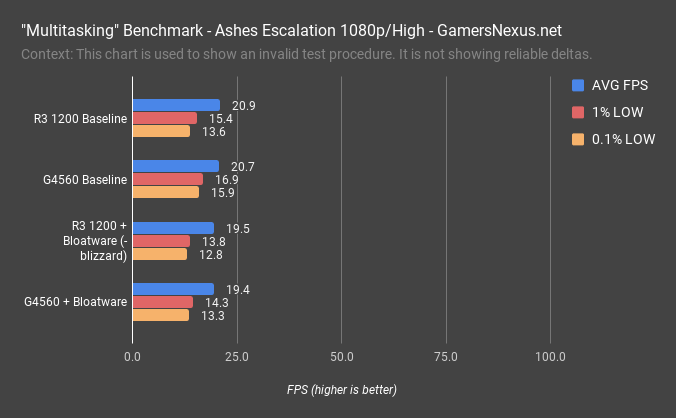

Ashes & “Multitasking”

Here’s Ashes: Escalation, where we see a 1-2FPS loss with bloatware – though the G4560 ran Blizzard’s Battle.net and the R3 did not, so again, this isn’t a test you can rely on for comparative data between the two, aside from showing that we saw little performance loss in this particular title.

But here’s the thing: This title is taxing the CPUs fully anyway, so now we need another means of measurement: We need to know if the background software stops logging, stops playing back music smoothly, or begins reporting data later than average. In the past, for instance, we’ve seen that simultaneous FurMark and Prime95 workloads cause monitoring software to stop logging every second, and instead log at random intervals as wide as 20 seconds apart. You have to account for the performance of that software, too, and then you’d have to figure out what’s the subjective best result.
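To make the logging-interval idea concrete, here is a small Python sketch that flags gaps in a monitoring log. It assumes the log has already been reduced to a list of sample timestamps in seconds; the function name, the one-second expected interval, the tolerance, and the sample data are all illustrative assumptions rather than anything we measured.

```python
def find_logging_gaps(timestamps, expected_interval=1.0, tolerance=0.5):
    """Return (start, end, gap_seconds) for every pair of consecutive
    samples that are further apart than expected_interval + tolerance."""
    gaps = []
    for prev, curr in zip(timestamps, timestamps[1:]):
        delta = curr - prev
        if delta > expected_interval + tolerance:
            gaps.append((prev, curr, delta))
    return gaps


# Made-up sample times (seconds): the logger stalls between 3.0 and 23.0.
samples = [0.0, 1.0, 2.0, 3.0, 23.0, 24.0, 25.0]
for start, end, gap in find_logging_gaps(samples):
    print(f"Logger stalled for {gap:.1f}s between t={start:.1f}s and t={end:.1f}s")
```

A check like this only tells you that the background tool was starved. Deciding how much starvation is acceptable is still a subjective call.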

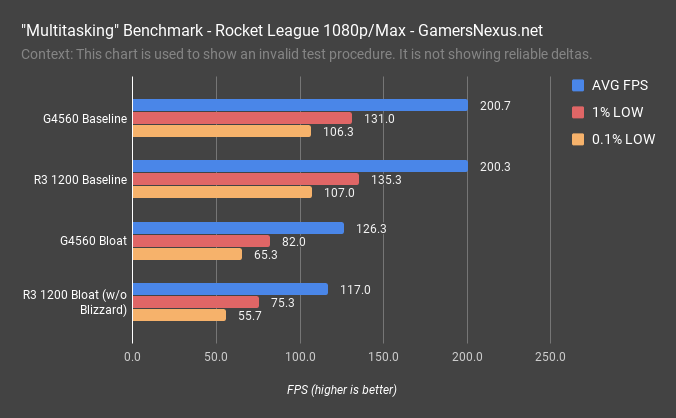

Rocket League

Here’s one more: Rocket League, where we’ve again got roughly equal performance to start, then a gap of about 8% when bloated. Here’s the thing: We have no idea if we’re getting game priority on the G4560 and some negative effect to the background software, or if the R3 1200 is genuinely just slower, or if there’s some sporadic and unpredictable background process firing off as a result of all the game clients and internet-attached applications.

None of the game clients were updating or downloading games during testing, but they’re all connected to the internet and constantly active at some level. By stopping the Blizzard client, the R3 1200 once again performed more or less the same as the G4560. The Blizzard client was having a disproportionate effect, and it’s unclear why.

Conclusion

Again, the problem of multitasking is unique to everyone who experiences it. HWiNFO and PresentMon are free hardware and FPS logging tools that can be used by anyone to record some numbers with and without background processes running. There are many, many hardware and software factors to consider, and sometimes programs hog resources for mysterious reasons. Keep an eye on Task Manager for processes that are keeping CPU usage high.
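For anyone recording their own numbers, here is a hedged Python sketch of how average FPS and "lows" could be derived from a list of per-frame times in milliseconds. Definitions of 1% and 0.1% lows vary between outlets; this version assumes they are the average framerate across the slowest 1% and 0.1% of frames, which may not match the method behind our charts. The function name and sample data are made up for illustration.

```python
def summarize_frametimes(frametimes_ms):
    """Summarize per-frame times (milliseconds) as average FPS plus
    1% and 0.1% lows, computed over the slowest frames in the run."""
    if not frametimes_ms:
        raise ValueError("need at least one frame time")
    n = len(frametimes_ms)
    slowest_first = sorted(frametimes_ms, reverse=True)

    def low_fps(fraction):
        # Always use at least one frame, even for very short runs.
        count = max(1, round(n * fraction))
        worst = slowest_first[:count]
        return 1000.0 * count / sum(worst)

    return {
        "avg_fps": 1000.0 * n / sum(frametimes_ms),
        "low_1pct_fps": low_fps(0.01),
        "low_0_1pct_fps": low_fps(0.001),
    }


# Made-up run: mostly 10 ms frames, with a handful of slow ones.
run = [10.0] * 990 + [20.0] * 9 + [40.0]
for name, value in summarize_frametimes(run).items():
    print(f"{name}: {value:.1f}")
```

Running the same summary on a clean pass and a bloated pass, several times each, gives a feel for how much of any difference is just run-to-run noise.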

We could test FPS in games with a low, constant synthetic load in the background, but that’s not realistic, which defeats the purpose. We could just open a YouTube video and log a game of CS:GO, but there are uncontrolled variables in that case. We might revisit this subject in the future, but for now, we can’t draw relevant conclusions with a level of accuracy that makes us comfortable.

The point is that this stuff is hard to do right, and ideally requires things that aren’t internet-attached. We could, for instance, make Excel iterate through some formula ad infinitum – that’d be reliable – but then we’re not really doing something that folks have asked for, and commenters would again complain about an “unrealistic” test environment.

Editorial: Patrick Lathan & Steve Burke

Video: Andrew Coleman