Everyone talks a big game about not caring about power consumption. We took that comment to the extreme. A registry hack gives Vega 56 enough extra power to kill the card, if we wanted, and a Floe 360mm CLC keeps temperatures low enough that GPU diode reporting inaccuracies emerge. “I don’t care about power consumption, I just want performance” is now met with exactly that: 100% more power and an overclock to 1742MHz core. We’ve got room to do 200% power, but things would start popping at that point.

The Vega 56 Hybrid mod is the most heavily modified build in our Hybrid series to date. It uses powerplay table registry changes to provide that additional power headroom. This is an alternative to BIOS flashing, which is limited to signed BIOS images (like V64 on V56, though we had issues flashing V64L onto V56). The last time we attempted it, a modified BIOS did not work. Powerplay tables do work, though, and they let us raise the power target beyond V56’s artificial power limitation.

We believe AMD limits the power provisioned to the V56 core purely to keep V56 from too easily outmatching V64 in performance. The card’s BIOS won’t natively allow more than 300-308W down the PCIe cables, even though official BIOS versions for V64 cards can support 350-360W. The VRM itself easily sustains 360W, and we’ve tested it handling 406W without a FET popping. 400W is probably pushing what’s reasonable. Still, an additional 60W is fully within the capabilities of the VRM and GPU, so limiting V56 to ~300W serves to cap V56 performance below the point where it would compete with V64.

We fixed that.

Adding CUs has never done that much for performance on AMD hardware. Clock speed closes most gaps. Even without the extra shaders of V64, we can outperform V64’s stock performance, and we’ll soon find out how we do against an overclocked V64. That’ll have to wait until after PAX, but it’s something we’re hoping to study further.

Test Platform

| GN Test Bench 2017 | Name | Courtesy Of | Cost |
| --- | --- | --- | --- |
| Video Card | This is what we're testing | - | - |
| CPU | Intel i7-7700K 4.5GHz locked | GamersNexus | $330 |
| Memory | Corsair Vengeance LPX 3200MHz | Corsair | - |
| Motherboard | Gigabyte Aorus Gaming 7 Z270X | Gigabyte | $240 |
| Power Supply | NZXT 1200W HALE90 V2 | NZXT | $300 |
| SSD | Plextor M7V Crucial 1TB | GamersNexus | - |
| Case | Top Deck Tech Station | GamersNexus | $250 |
| CPU Cooler | Asetek 570LC | Asetek | - |

BIOS settings include C-states completely disabled with the CPU locked to 4.5GHz at 1.32 vCore. Memory is at XMP1.

Max Clock Stability vs. Power Limit

The initial driver launch brought a lot of uncertainty, including limited voltage control and broken clock reporting, so we first needed to check whether the powerplay tables were actually doing anything. Fortunately, clock reporting was fixed in driver 17.8.1 onward, as we showed in our latest livestream with the Hybrid 56. That leaves the power target to isolate. During that livestream, we also measured voltage at the back of the card (rather than just trusting software) to better determine undervolting functionality with the latest drivers. Check that stream for more on undervolting.

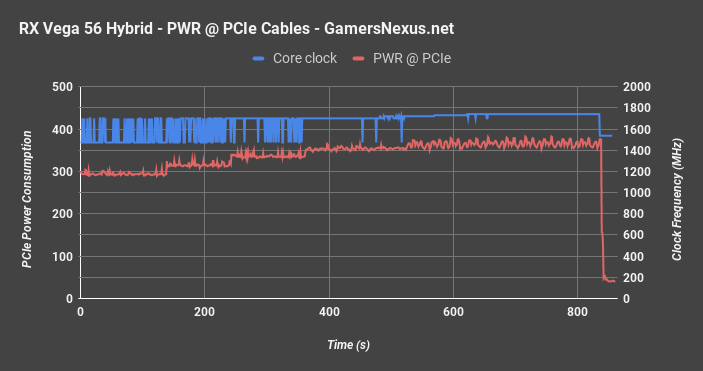

Here’s a look at clock stability versus power target, represented by PCIe power draw. With a 50% offset (the maximum supported at stock), power consumption starts at around 300W, a bit below what we saw on the reference Vega 56 card. The lower temperatures have reduced power leakage a bit, so the 50% offset now comes to 296W rather than 308W, a reduction of about 4%. That doesn’t buy us much on its own, but it’s the starting point. At this setting, the clock bounces around sporadically, jumping between 1474MHz and 1702MHz (our target). Because the card’s power consumption is limited to about 300W, we can’t hold higher clocks stable without pushing more power. The dithering clocks are a direct result of the card not getting enough power. In this case, that’s because AMD set a hard cap on what V56 can pull.

We first go to 60% power, which gets us another 16W to work with in this workload. The clocks still aren’t stable, so we move to 70% power around the 240-second mark. That gives us 33W over the original 50% offset, enough to noticeably (if not fully) stabilize the frequency. At 80% power, we start pushing 350W through the PCIe cables, which largely stabilizes the 1702MHz target. The next attempt is 1722MHz. It proves unstable and requires a further jump to 90% power, putting us at 360-365W. 1732MHz is roughly stable at this same power draw. For 1742MHz, we go to 95% power to fully stabilize, drawing 370-380W.

The result is 70-80W more than reference, but the clock now holds steady at 1742MHz rather than bouncing between 1474MHz and 1702MHz. This is better than we managed in the livestream, where we simply blasted 406W down the PCIe cables to reach stability as quickly as possible. Our max clock in the stream was also 20MHz lower, so we’ve done better here. Instability began to emerge regularly at 1762MHz (even with 120% power), so we called it at 1742/980MHz for synthetic tests and eventually dropped to 1732MHz for gaming tests.
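
To make the power steps above easier to follow, here is a minimal Python sketch that tabulates the PCIe draw quoted at each power target and the increment over the 50% starting point. Where the text gives a range (360-365W, 370-380W), the sketch assumes the midpoint. The dictionary and function names are illustrative only, not part of any tool we used.

```python
# PCIe power draw per power-target offset (percent -> watts), as quoted in the text.
# Quoted ranges are collapsed to their midpoints; that is an assumption for illustration.
PCIE_DRAW_W = {
    50: 296.0,                # stock-supported maximum offset
    60: 296.0 + 16.0,         # "another 16W"
    70: 296.0 + 33.0,         # "33W over the original 50% offset"
    80: 350.0,
    90: (360.0 + 365.0) / 2,  # 360-365W
    95: (370.0 + 380.0) / 2,  # 370-380W
}


def increments_over_baseline(draw: dict[int, float], baseline: int = 50) -> dict[int, float]:
    """Return the extra watts each offset draws relative to the baseline offset."""
    base = draw[baseline]
    return {offset: watts - base for offset, watts in sorted(draw.items())}


def percent_reduction(before: float, after: float) -> float:
    """Percentage drop going from `before` to `after`."""
    return (before - after) / before * 100.0


if __name__ == "__main__":
    for offset, extra in increments_over_baseline(PCIE_DRAW_W).items():
        print(f"{offset:>3}% target: {PCIE_DRAW_W[offset]:6.1f} W (+{extra:4.1f} W)")
    # Reference card (308W) vs. Hybrid (296W), both at the 50% offset
    print(f"Leakage reduction: {percent_reduction(308.0, 296.0):.1f}%")
```

The 95% row lands about 79W above the 50% baseline, consistent with the 70-80W overhead cited for the final 1742MHz configuration. The 308W-to-296W drop works out to 3.9%, which the text rounds to 4%.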

V56 Hybrid Temperature Response

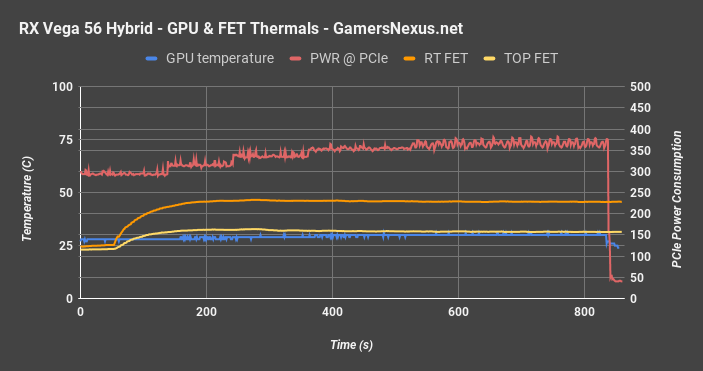

Here’s the temperature response to all of that. Core temperature hits 28C quickly and maxes out at 31C. This is not a delta T over ambient reading. Ambient is about 24C here, but we know that our Vega 56 underreports its temperature by at least a few degrees. Unfortunately, we can’t be sure by how much. Either way, we’re about 40-45C below the reported temperature on air. The MOSFET temperatures max out at 46C for the middle-right FET and 32C for the top FET, both of which were cooled by direct airflow from a high-RPM fan. For perspective, the reference card had FET temperatures of about 63C for the right FET and 73C for the top FET. Blasting the MOSFETs with air brought their temperatures down, despite the 70-80W of extra power to the core.

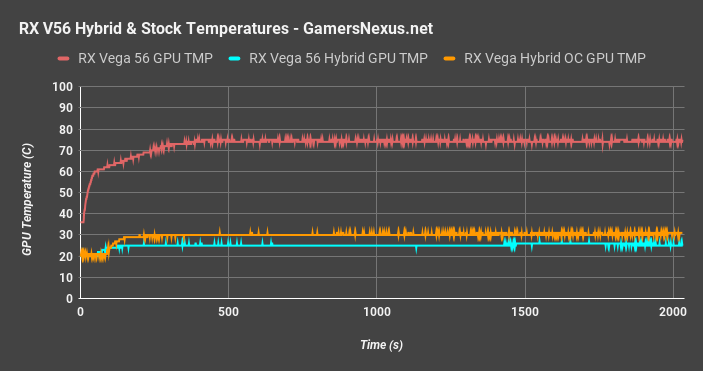

V56 Comparative Thermals – Reference vs. Hybrid

Getting into comparative thermals, the RX Vega 56 reference card operates close to its 75C target, while our Hybrid mod runs at a 25C (reported) GPU diode temperature. Overclocking to 1742MHz core and 980MHz HBM2 with a 105% power target pushes the GPU diode to 30C. Ambient temperature is about 23-24C during most of these tests and is logged actively, which means that Vega’s temperature reporting, on our card at least, is not fully accurate. It is common for thermistors and thermocouples to have some level of inaccuracy, but this output appears to be further off than even K-type thermocouples, which have a +/-2.2C tolerance in most cases. At idle, we’re sitting 2-4C below ambient, which is physically impossible with this kind of cooling setup. You’d need something chilled to actually achieve that, so the temperature reporting is incorrect to some degree.
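
The reasoning above can be expressed as a simple sanity check. With ambient-referenced cooling (no chiller), a sensor should never read meaningfully below ambient, so a reading lower than ambient minus the sensor’s tolerance points to a reporting error. The sketch below assumes the +/-2.2C K-type tolerance cited above as the allowed slack. The function name is hypothetical.

```python
def reading_is_implausible(reported_c: float, ambient_c: float, tolerance_c: float = 2.2) -> bool:
    """Flag a temperature that sits further below ambient than sensor tolerance allows.

    Assumes the cooler cannot chill below ambient (no chiller or TEC), so any
    reading below (ambient - tolerance) must come from a reporting error.
    """
    return reported_c < ambient_c - tolerance_c


if __name__ == "__main__":
    ambient = 24.0
    # Idle readings 2C and 4C below ambient, the range described in the text
    for deficit in (2.0, 4.0):
        reading = ambient - deficit
        print(f"{reading:.1f}C reported at {ambient:.1f}C ambient -> implausible: "
              f"{reading_is_implausible(reading, ambient)}")
```

A 4C deficit trips the check even with K-type slack, while a 2C deficit alone would not. That matches the text’s conclusion that the diode readout is off by more than typical thermocouple error.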

Looking at power consumption at the wall, our RX Vega 56 Hybrid system pushes 465W when overclocked to 1742MHz core and 980MHz HBM2. That is an increase of 165W over the stock reference RX Vega 56.

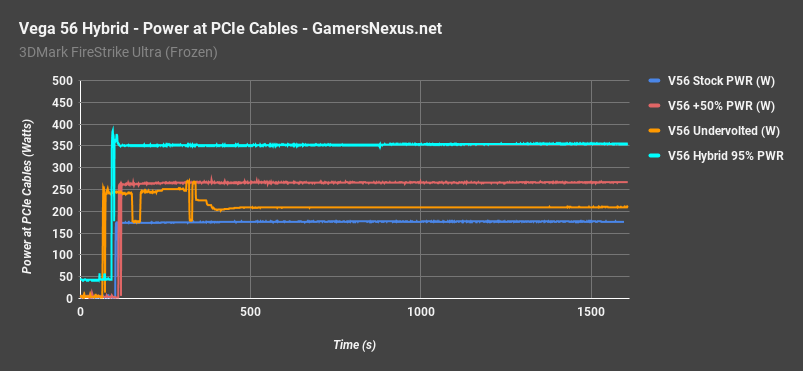

V56 Hybrid Power Consumption at the Rails

This chart will be quick. It’s what we used during our initial undervolting (the sporadic orange line is from fighting with the software), and it shows PCIe consumption ranging from roughly 180W to 355W. We were able to wrangle the Hybrid down to 355W in this particular test by dropping to a 95% power target. That setting proved stable for FireStrike, though we ultimately raised the target a bit for gaming. Keep in mind that power consumption varies by scene and benchmark, so you’ll see different results depending on which test we’re showing.

Power Consumption at the Wall

Big note here: we’ve got a lot more fans hooked up, which means more power consumption at the wall. That’s why the at-rails measurements above are important. They exclude other system changes, like the extra fans and pump for the V56 Hybrid.

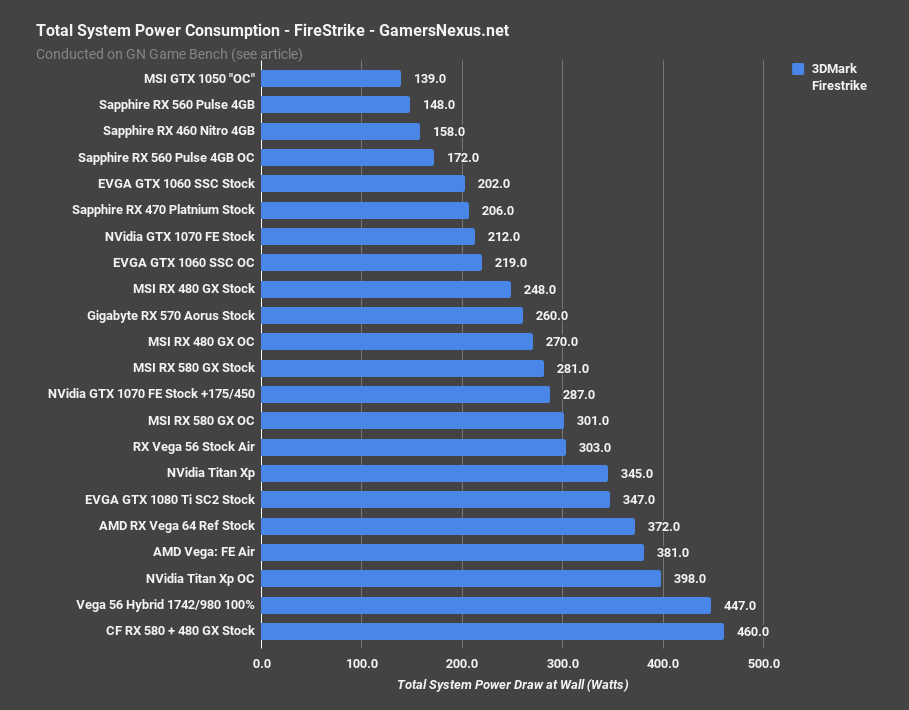

For some quick FireStrike numbers at the wall (again, we’re switching back to wall draw), total system power is 447W for the Hybrid OC V56, nearly tying it with CrossFire RX 580 and RX 480 cards. The stock Vega 64 system draws 372W, so we’ve got a 75W increase. The stock V56 system draws 303W. This aligns with our reported ~60W advantage for V64 out-of-box, despite both cards using the same VRM. A GTX 1080 Ti system draws 347W, or 100W less than the V56 Hybrid OC. Efficiency is not in our favor with this mod, but it’s also not the point. We’re spending a lot of power to keep this thing cool.
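
The wall-power comparisons above reduce to simple subtraction. This Python sketch reproduces them from the quoted FireStrike totals. The names are illustrative, and these are whole-system figures that include the extra fans and pump.

```python
# Total system power at the wall in FireStrike, as quoted in the text (watts).
WALL_DRAW_W = {
    "V56 Hybrid OC": 447,
    "Vega 64 stock": 372,
    "GTX 1080 Ti stock": 347,
    "Vega 56 stock": 303,
}


def deltas_from(reference: str, draw: dict[str, int]) -> dict[str, int]:
    """Watts each other system draws below the reference system (positive = less)."""
    ref = draw[reference]
    return {name: ref - watts for name, watts in draw.items() if name != reference}


if __name__ == "__main__":
    for name, delta in deltas_from("V56 Hybrid OC", WALL_DRAW_W).items():
        print(f"{name}: {delta} W less than the V56 Hybrid OC system")
    gap = WALL_DRAW_W["Vega 64 stock"] - WALL_DRAW_W["Vega 56 stock"]
    print(f"Stock V64 vs. stock V56: {gap} W")
```

The gap between stock V64 and stock V56 works out to 69W, in the same neighborhood as the ~60W out-of-box difference mentioned in the text.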

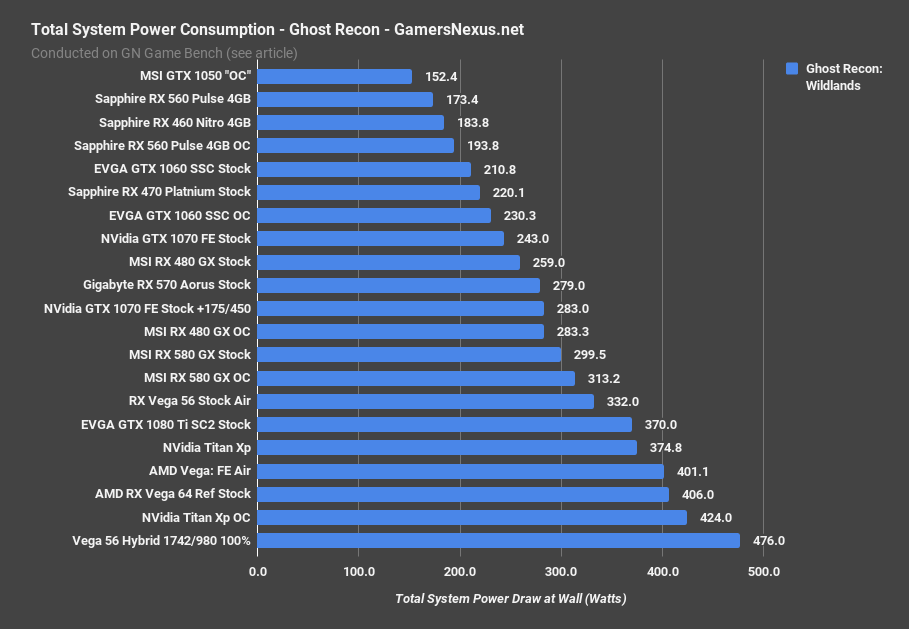

A gaming workload (Ghost Recon) places our V56 mod’s system power consumption ahead of the nVidia Titan XP by about 50W, maintaining the same ~70W offset from the stock V56.

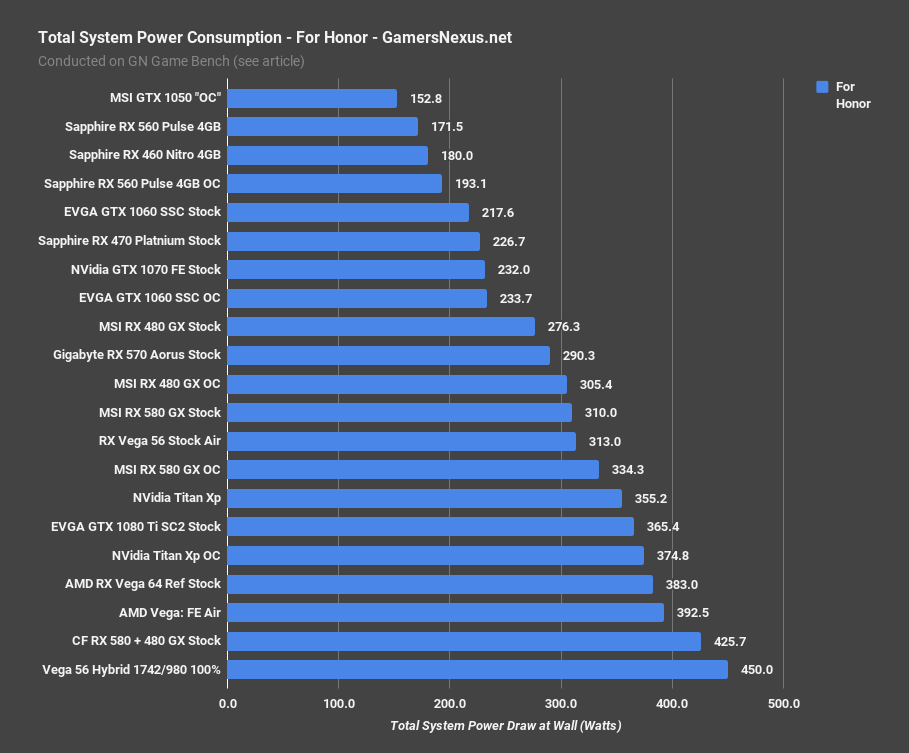

Here’s For Honor, just to show another game.

Similar scaling to GRW.

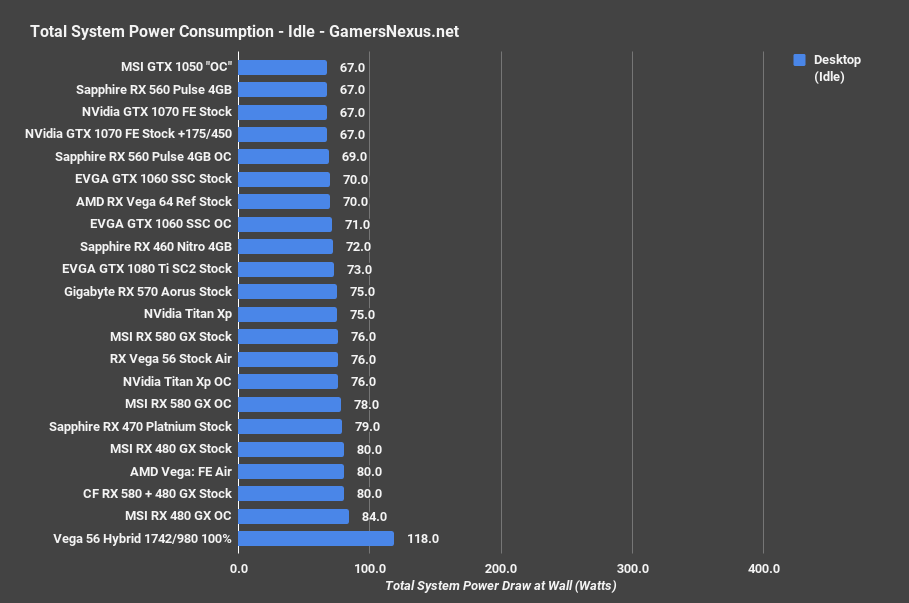

And here is idle at the desktop, which shows increased power consumption both from the GPU modifications and from the extra fans added to the system.


Some Quick Notes: Still Adding to Charts

The last few weeks have brought an endless stream of releases. Between Destiny 2, Threadripper, and two Vega cards, we’ve had trouble keeping up. We still want to add a few cards to these charts. The GTX 1080 is not yet present in every test, and we haven’t yet added an AIB partner or overclocked 1080 to all charts. That will come with the Vega 64 review and coverage, which will go live a bit after PAX. The Vega 64 card also hasn’t been overclocked yet; that will be done in a separate content piece. As usual, we’re up against time windows. Most of the effort went into overclocking the V56 Hybrid. V56H is the focus of the piece, so we decided it made more sense to spend time there than to rebench various 1080s. There’s only so much time to split between tests.

As for gaming results, we’ll start with the more exciting ones, then work our way toward the disappointing outcomes. This also means starting with games that tend to favor AMD.

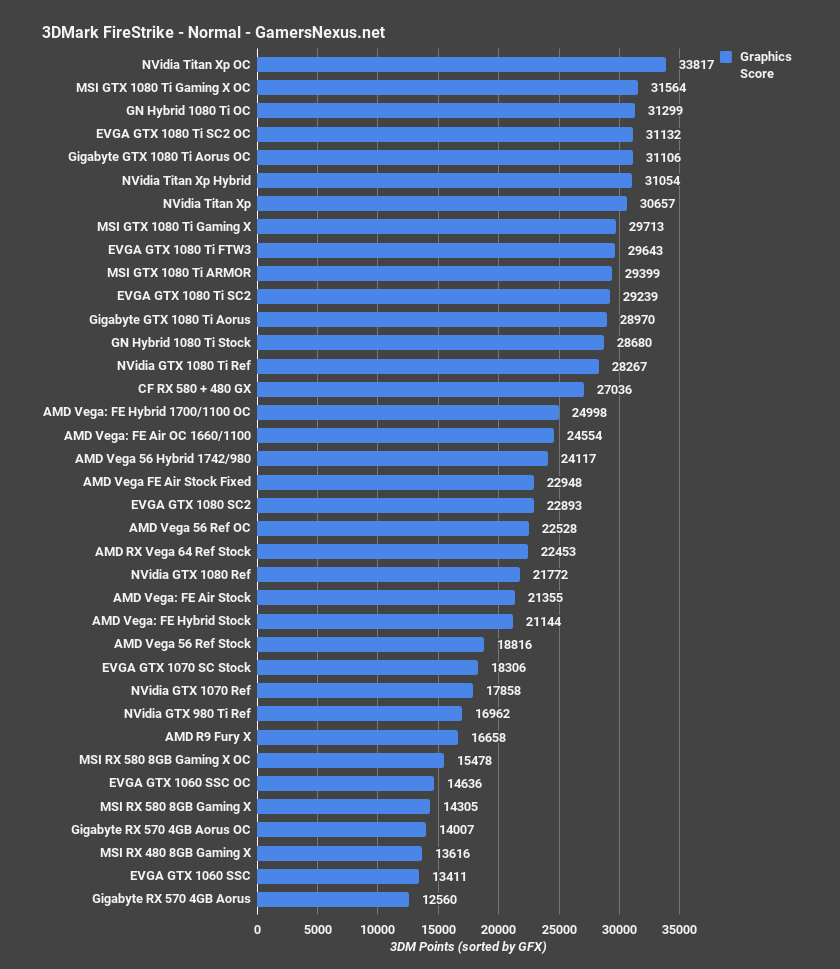

3DMark FireStrike Results

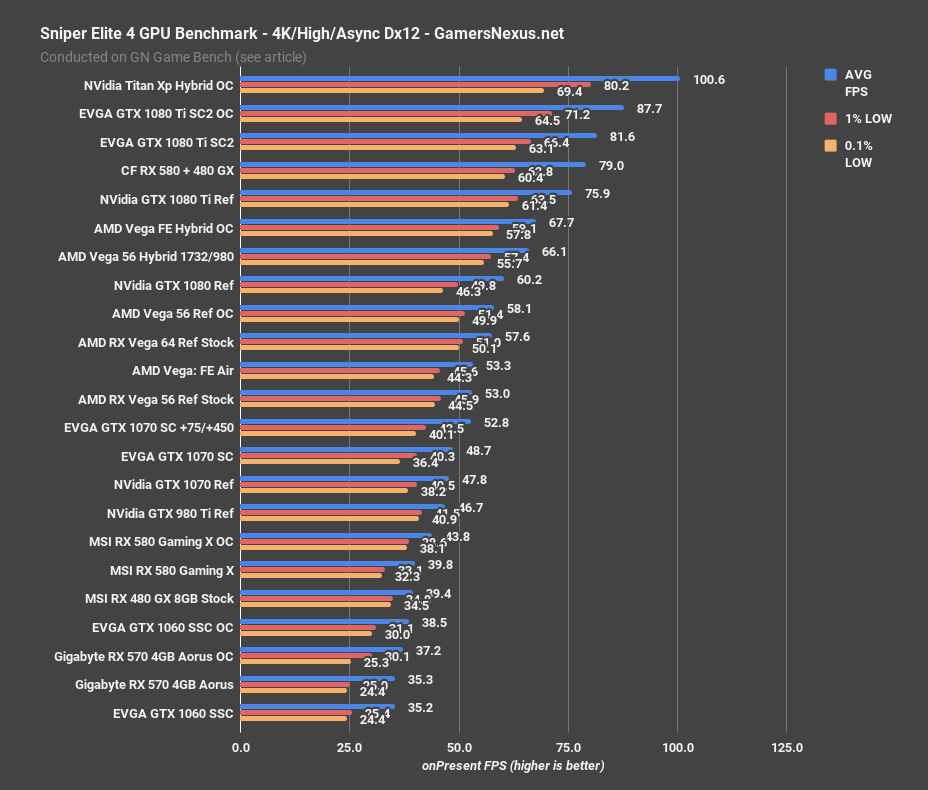

Vega 56 Hybrid OC vs. Vega 64, GTX 1080 – Sniper Elite 4

Sniper Elite 4 has a few relevant numbers. The stock RX Vega 56 card averaged 53FPS, while a 9% OC and 950MHz HBM2 land us at 58FPS AVG. That’s an increase of 9.4%. As we learned in previous content, it comes largely from the boosted power limit and HBM2. The Hybrid card, clocked to 1732MHz and 980MHz for stability, lands at 66FPS AVG with its 100% power target. Sure, we’re pushing 30A down the PCIe cables for 80W more power consumption, but we’re not concerned about power today. For all those saying they don’t care about power, this is your version of Vega: no concern for power at all.

The gain is 13.8% over the overclocked Vega 56 card, or 25% over the stock Vega 56 card. Compared to the stock Vega 64 card, we’re 14.8% ahead in performance. Note also that Vega 64 is basically tied with (technically, behind) the overclocked reference V56, which further reinforces V64’s poor value. Just get V56 and overclock it. Even with AMD’s BIOS locks and other limitations, we’re doing better than a stock V64. We haven’t overclocked V64 yet, but that’ll come after PAX West.

Looking to nVidia cards, the V56 Hybrid OC card is now a bit ahead of the GTX 1080 reference card without an overclock. We haven’t yet gotten around to overclocking the 1080 for this new round of tests.
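
All of the percentages in this section follow one formula: gain = (new / old - 1) x 100. As a quick check, this Python sketch applies it to the Sniper Elite 4 averages quoted above. The helper name is our own, and the implied V64 figure is worked backward from the 14.8% claim rather than read from the chart.

```python
def percent_gain(new: float, old: float) -> float:
    """Relative improvement of `new` over `old`, in percent."""
    return (new / old - 1.0) * 100.0


# Sniper Elite 4 AVG FPS figures quoted in the text
STOCK_V56 = 53.0
OC_V56 = 58.0       # 9% core OC, 950MHz HBM2
HYBRID_OC = 66.0    # 1732MHz core, 980MHz HBM2, 100% power target

if __name__ == "__main__":
    print(f"OC vs. stock:     {percent_gain(OC_V56, STOCK_V56):.1f}%")
    print(f"Hybrid vs. OC:    {percent_gain(HYBRID_OC, OC_V56):.1f}%")
    print(f"Hybrid vs. stock: {percent_gain(HYBRID_OC, STOCK_V56):.1f}%")
    # Working backward: being 14.8% ahead of V64 implies V64 averaged roughly this much
    print(f"Implied V64 AVG:  {HYBRID_OC / 1.148:.1f} FPS")
```

The Hybrid-versus-stock result comes out at 24.5%, which the text rounds to 25%. The implied V64 average of about 57.5FPS sits just under the overclocked V56’s 58FPS, consistent with V64 being “technically behind.”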

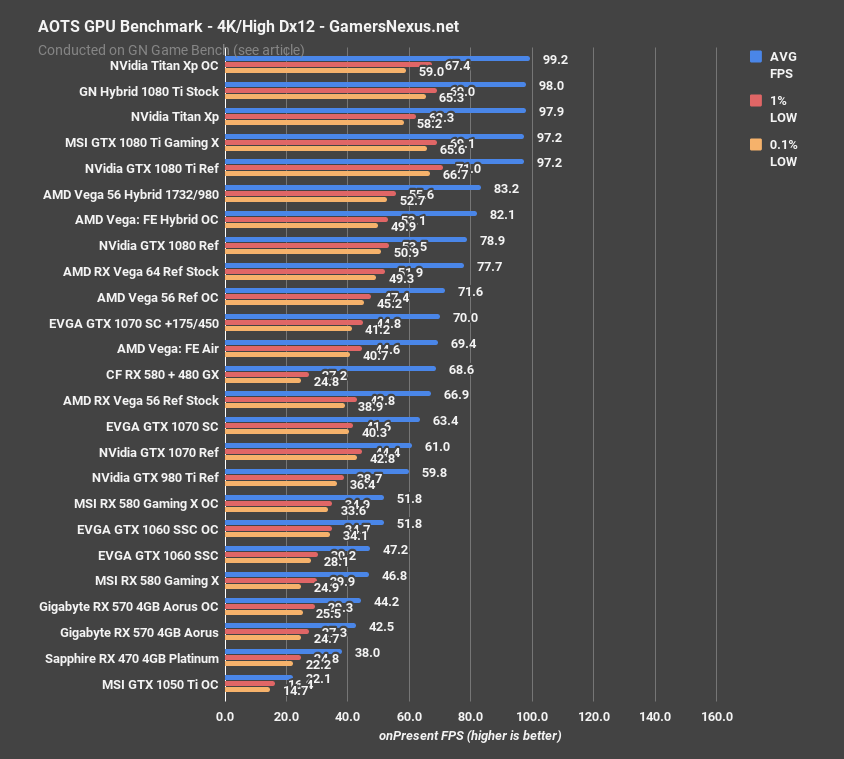

Ashes of the Singularity – Vega 56 Hybrid OC vs. Vega 64, GTX 1080

Ashes of the Singularity testing lands our stock RX Vega 56 blower card at 67FPS, with the overclocked reference card at 72FPS – a gain of 7.5%. Vega 64 runs 8.5% faster, and our Vega 56 Hybrid runs 7% faster than that. At 83FPS AVG, the Hybrid mod is now 16% ahead of the overclocked Vega 56 reference card and 24% ahead of the full stock card.

This lands the overclocked Hybrid 56 ahead of the reference, stock-clocked GTX 1080.
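
The Ashes figures chain two relative gains, and chained gains multiply rather than add. The Python sketch below compounds them. It assumes the 8.5% Vega 64 figure is measured against the overclocked reference V56, which is the reading that makes the quoted numbers line up. The function name is illustrative.

```python
def compound(*gains_pct: float) -> float:
    """Combine successive relative gains (in percent) into one overall gain, in percent."""
    factor = 1.0
    for g in gains_pct:
        factor *= 1.0 + g / 100.0
    return (factor - 1.0) * 100.0


if __name__ == "__main__":
    # Overclocked V56 -> V64 (+8.5%) -> V56 Hybrid (+7%)
    print(f"Hybrid vs. overclocked V56: {compound(8.5, 7.0):.0f}%")
    # Projected Hybrid average starting from the overclocked V56's 72FPS
    print(f"Projected Hybrid AVG: {72 * (1 + compound(8.5, 7.0) / 100):.1f} FPS")
    # Stock V56 (67FPS) -> Hybrid (83FPS)
    print(f"Hybrid vs. stock V56: {(83 / 67 - 1) * 100:.0f}%")
```

Compounding gives roughly 16%, matching the text, and 72FPS x 1.161 projects to about 83.6FPS, in line with the Hybrid’s 83FPS average. Simply adding the percentages (8.5 + 7 = 15.5) would slightly understate the gap.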

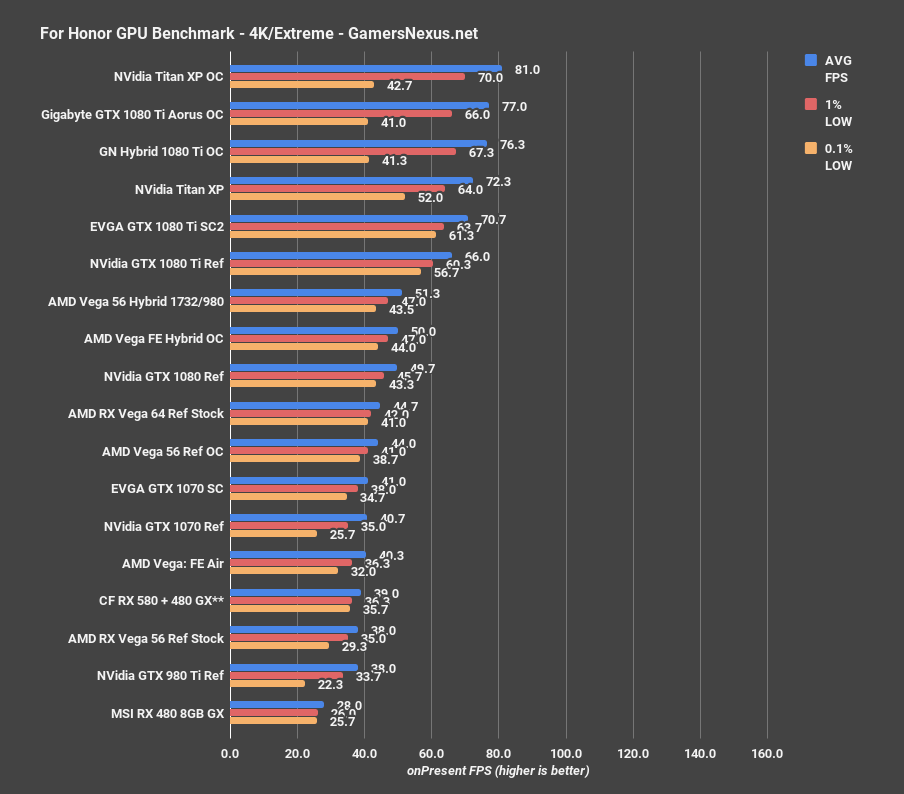

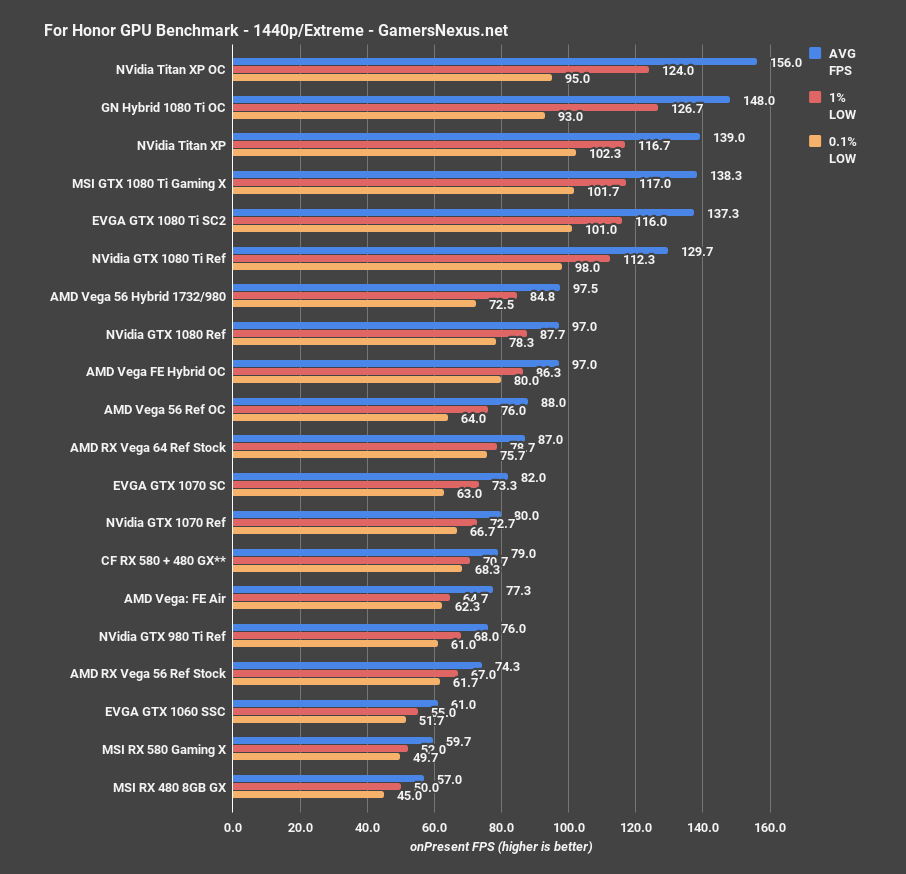

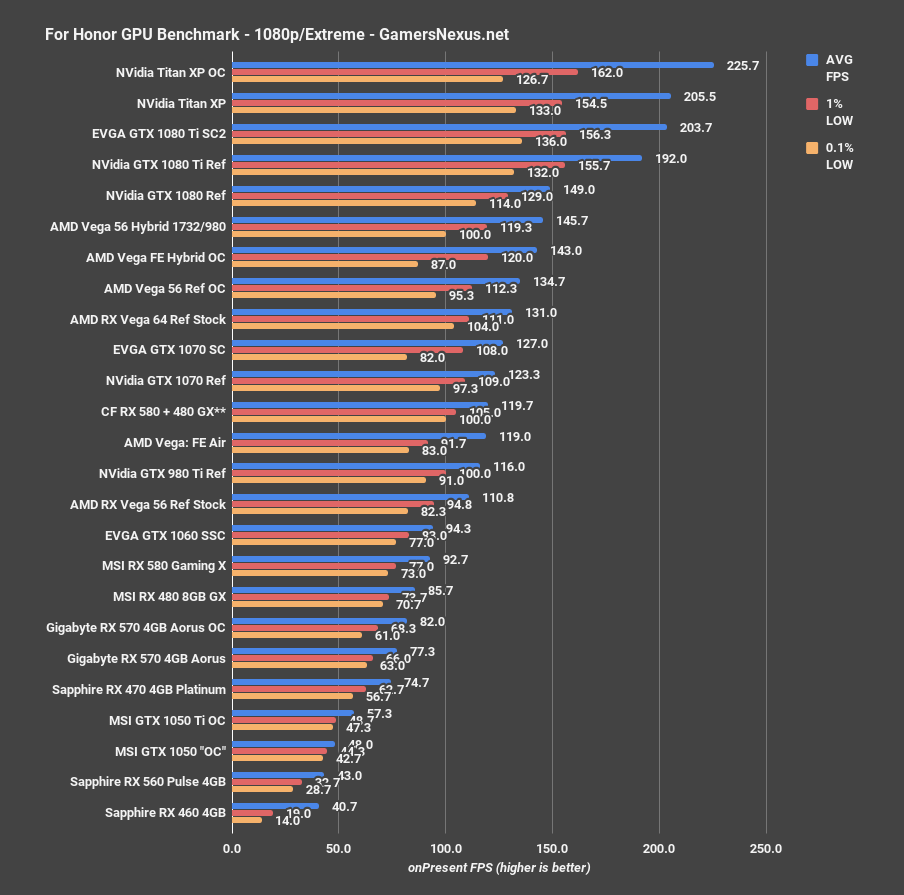

For Honor – Vega 56 Overclocked vs. Vega 64, GTX 1080

For Honor shows surprisingly positive scaling for this overclock. At 4K, we move from 38FPS AVG stock to 44FPS AVG overclocked on the reference card. That ties Vega 56 with Vega 64, showing that CUs matter less than clock speed on this architecture. The Hybrid OC gets up to 51FPS AVG, a gain of 16.5% over the overclocked reference 56, and now surpasses a stock-clocked reference 1080. Of course, a partner model 1080 would outmatch the Hybrid OC, but it’s still an admirable jump. If we’re roughly tied with partner models, that’s not a bad place to be. Granted, it’s drawing more power than a 1080 Ti, but that’s the price of powerplay table mods.

At 1440p, we’re at 98FPS AVG on the Hybrid OC, a gain of 11% over both the overclocked reference card and Vega 64 at stock clocks, with a gain of 31% over the stock Vega 56 card. Again, we’re at way higher power consumption, but have boosted performance greatly. We don’t yet have the 1080 tested at this resolution.

1080p posts similar results: We’ve gained 8% over the overclocked reference card, which is getting much less exciting.

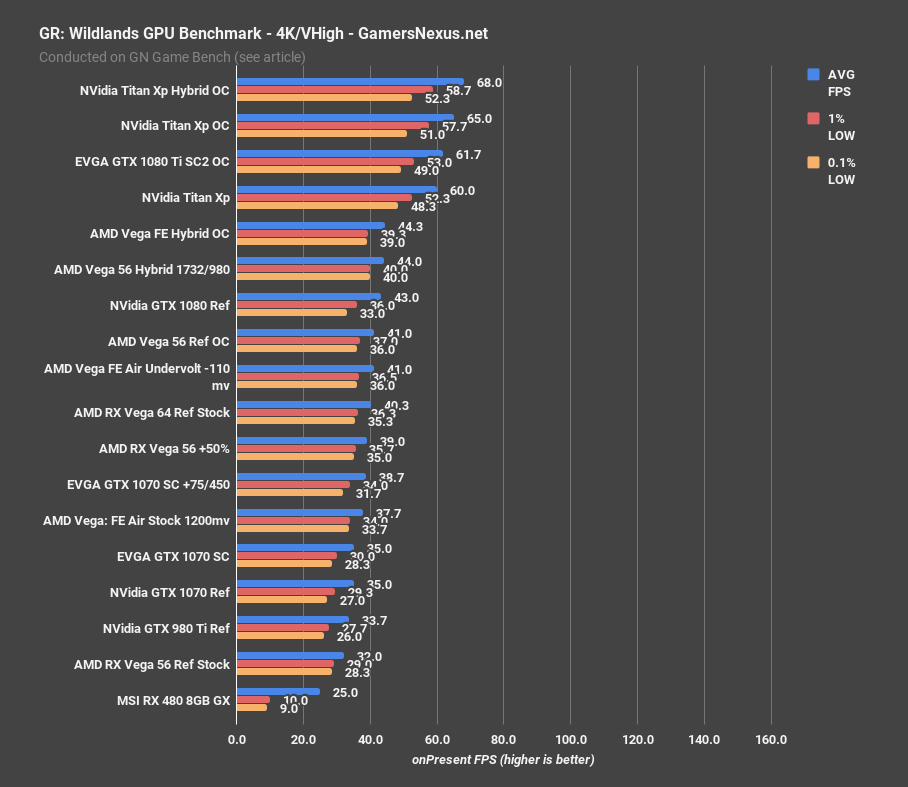

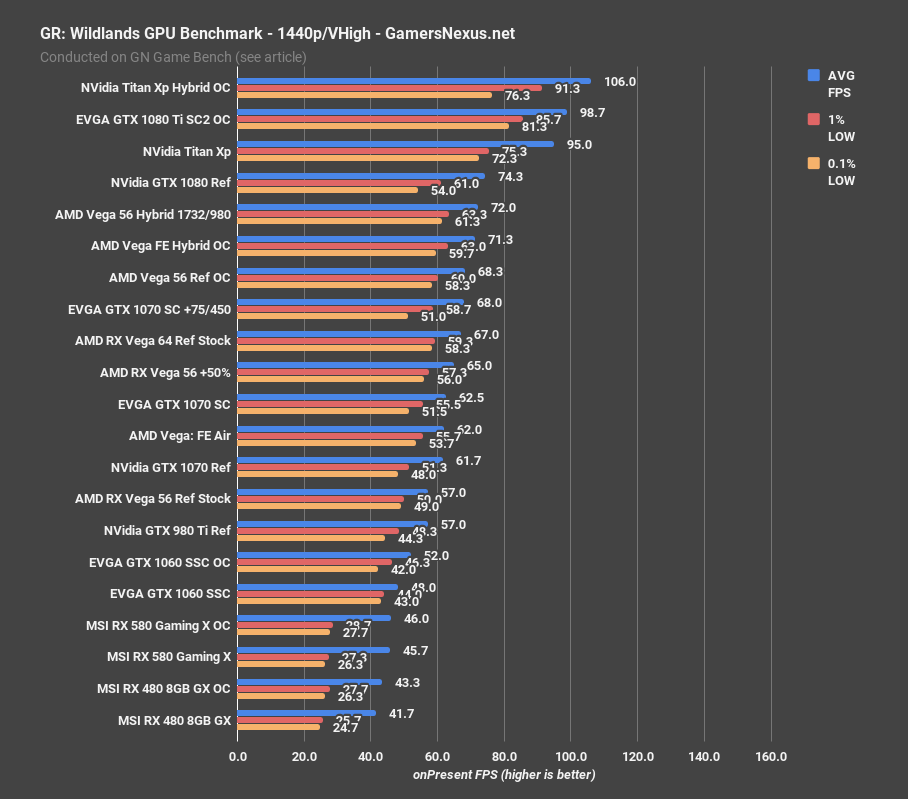

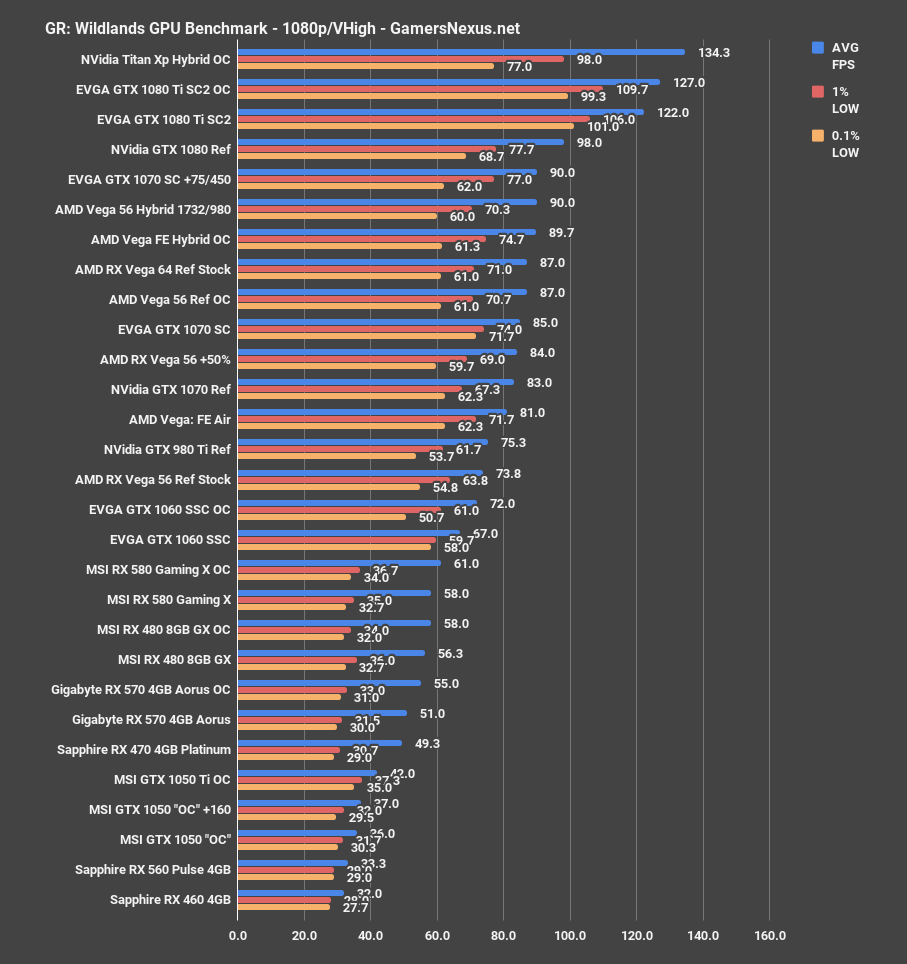

Ghost Recon: Wildlands – Vega 56 Hybrid OC Results

Brace yourselves for the next one. Ghost Recon: Wildlands at 4K puts the stock card at 32FPS AVG, the 50% power offset at 39FPS AVG, and the overclocked reference card with the offset at 41FPS AVG. Compared to the power offset alone, that overclock does almost nothing. The Hybrid OC doesn’t do much either, gaining just 5FPS over the stock card with the 50% offset. Not at all worth the power or the time, in this instance.

1440p is next. The Vega 56 reference card runs 57FPS AVG, and the 50% offset brings it to 65FPS AVG, a 14% improvement from the offset alone. Disappointingly, the overclock on top of the 50% offset adds only 3FPS. The Hybrid OC mod runs 72FPS AVG, another 4FPS (roughly 6%). Not at all exciting.

Yikes. All that effort, all that power, and we’re performing at the level of an overclocked 1070. Not even a modded 1070, just an out-of-box card with an OC through Precision. It’s safe to say that, for this particular game, the mod isn’t at all worth it.

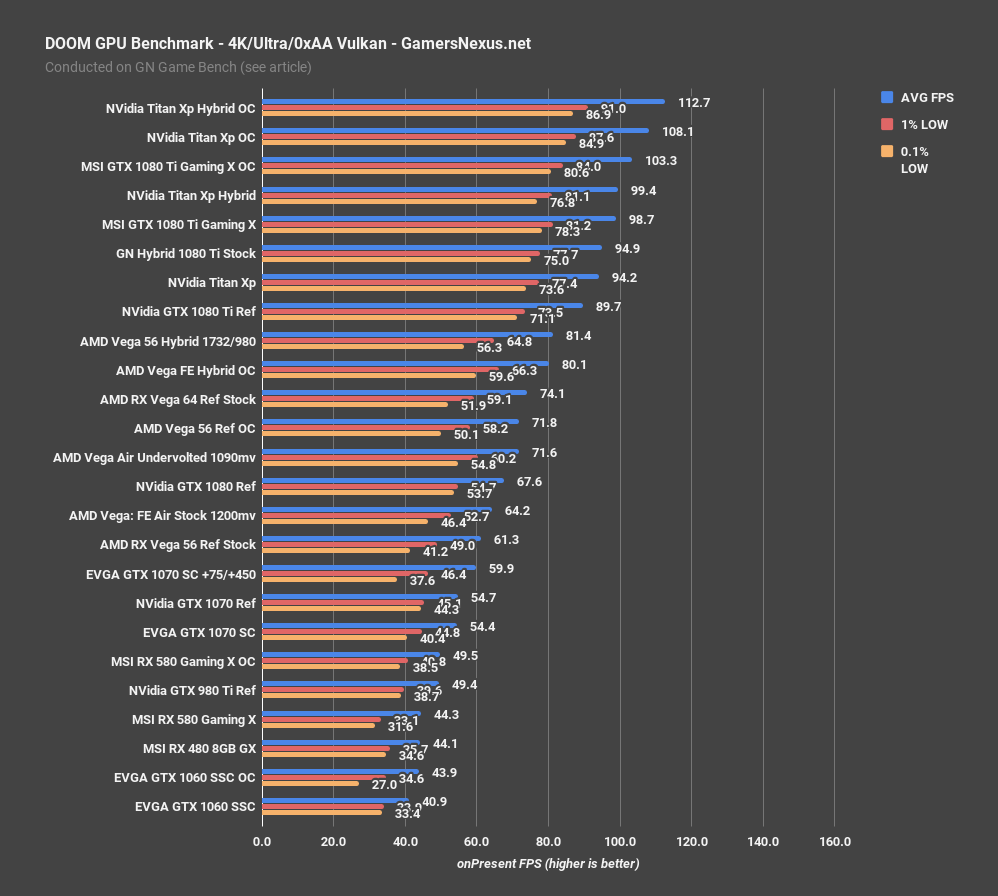

DOOM GPU Benchmark – Vega 56 Hybrid OC vs. Vega 64

DOOM is another AMD-favored title. At 1732/980, our Hybrid V56 lands at 80FPS AVG, planting it firmly ahead of the V64 (at 74FPS AVG, and with worse frametime performance) and GTX 1080 FE stock-clocked card. As always with DOOM, these results can’t really be extrapolated into other games – its performance behavior is unique to id’s execution of the engine.

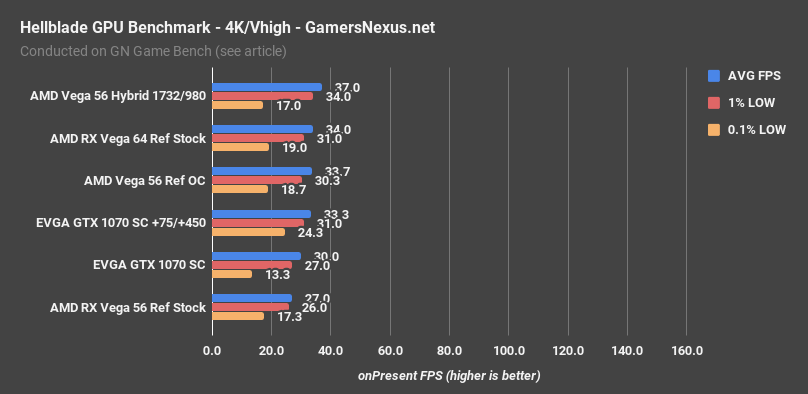

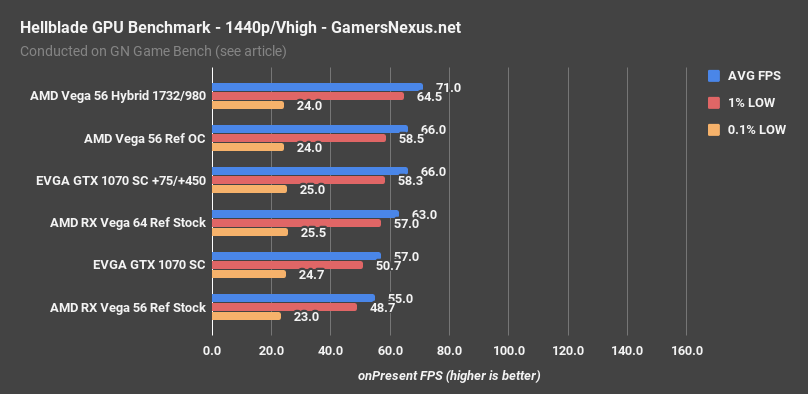

Hellblade GPU Benchmark – Vega 56 Hybrid OC vs. Vega 64

Hellblade is last, and is our least populated chart. We only just started testing this game, so please excuse the lack of data. Let’s just focus on Vega 56’s scaling.

At 4K, the reference V56 averages 27FPS and climbs to 34FPS with the reference OC. The Hybrid OC unit gets us to 37FPS AVG, a 37% gain over stock. We were clearly starved for power and clocks in the reference testing. We’re not sure which specific piece of hardware caused the choke point at 4K, but the overclock and extra power go a long way toward addressing it.

1440p scales a lot less impressively, but there’s also less ground to gain. The Hybrid OC card pushes 71FPS AVG, outperforming the overclocked reference card by 8% and the stock card by 13%. Again, an 8% gain is hard to get excited about, particularly given all the effort and power that went into the card.

Conclusion: Lessons from the V56 Hybrid Mod

The primary takeaway is that CU count affects performance on Vega far less than raw clock speed does. In some tests, we didn’t even have to bypass the power limit to surpass stock V64. Bypassing it does help us keep up once V64 is overclocked, something we’ll look into more later. A 50% offset and a modest 9% / 980MHz OC get us to V64 performance levels. The extra 50% power means going to a 100% offset and increasing current to 30-33A at 12.3V. In some games, that pushes us toward and into double-digit percentage gains over the V56 OC, while other games give us ~7-8%. It just depends on the game, it turns out, but results are promising in some instances.
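
The current figures above convert to power with P = V x I. This short Python sketch assumes the 12.3V reading quoted in the text holds constant across the load, which real rails only approximate. The constant and function names are illustrative.

```python
RAIL_VOLTAGE_V = 12.3  # PCIe cable voltage quoted in the text; assumed constant under load


def power_watts(current_a: float, voltage_v: float = RAIL_VOLTAGE_V) -> float:
    """Electrical power delivered: P = V * I."""
    return voltage_v * current_a


if __name__ == "__main__":
    for amps in (30.0, 33.0):
        print(f"{amps:.0f}A at {RAIL_VOLTAGE_V}V = {power_watts(amps):.0f}W")
```

That spans roughly 369W to 406W, the same range as the PCIe draw measured during the power-limit testing earlier in the article.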

One thing we’ve learned for sure is that V64 hardly warrants consideration. Fifteen to twenty minutes of overclocking even a reference V56, let alone a partner model, gets us to V64 stock performance. The power mods just make it that much better. They’re probably not worth it in 95% of use cases, but AMD’s insanely over-built VRM really does invite tinkering. Might as well make use of it.

We won’t try to recap the rest here; the content above handles that in detail.

Editorial, Testing: Steve Burke

Video: Andrew Coleman

Consulting: ‘Buildzoid’