Finding something to actually leverage the increased memory bandwidth of Radeon VII is a challenge. Few games will genuinely use more memory than what’s found on an RTX 2080, let alone 16GB on the Radeon VII, and most VRAM capacity utilization reporting is wildly inaccurate as it only reports allocated memory and not necessarily used memory. To best benchmark the potential advantages of Radeon VII, which would primarily be relegated to memory bandwidth, we set up a targeted feature test to look at anti-aliasing and high-resolution benchmarks. Consider this an academic exercise on Radeon VII’s capabilities.

This content piece is functionally an academic exercise, meaning we will at times exit “real-world” use cases to instead study how the card behaves and scales at a fundamental level. This means looking at unplayable scenarios, like 8K resolution, just to see if scaling improves on AMD over NVIDIA; in theory, based on what AMD has told us about performance, it should, so we just need to validate that. Anti-aliasing takes multiple samples per pixel, often 2x, 4x, 8x, or 16x, and that has a very similar performance impact to increasing resolution. It hits the same part of the pipeline.
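The comparison between anti-aliasing and resolution can be made concrete by counting stored samples per frame. The sketch below is a simplified model, not a description of any engine tested here. It assumes 4K, 5K, and 8K mean 3840x2160, 5120x2880, and 7680x4320 (the exact dimensions are our assumption), and `samples_per_frame` is a name made up for illustration. MSAA typically runs the pixel shader once per pixel rather than once per sample, so shading cost does not scale the same way that sample storage and bandwidth do.

```python
# Assumed pixel dimensions; the article does not state them explicitly.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
    "4K": (3840, 2160),
    "5K": (5120, 2880),
    "8K": (7680, 4320),
}


def samples_per_frame(resolution, msaa=1):
    """Return the number of stored samples for one render target.

    Simplified model: pixels multiplied by the MSAA sample count.
    """
    width, height = RESOLUTIONS[resolution]
    return width * height * msaa


if __name__ == "__main__":
    for name in ("4K", "5K", "8K"):
        for msaa in (1, 2, 4, 8):
            millions = samples_per_frame(name, msaa) / 1e6
            print(f"{name} @ {msaa}x MSAA: {millions:.1f}M samples")
```

Under this model, 4K with 4x MSAA stores as many samples as 8K with no MSAA, which is why the two settings can stress memory in a similar way.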

If AMD’s memory bandwidth really benefits it in games, we hypothesize that the performance delta between Radeon VII and the RTX 2080 would shrink or reverse as resolution and anti-aliasing increase, and widen in the RTX card’s favor as resolution decreases. We already demonstrated in our review how Radeon VII can sometimes close the gap at higher resolutions, and talked about how the gap, as in F1 2018, would widen at 1080p. Now it’s time to see if it closes or reverses at high resolutions; however, keep in mind that the obvious downside is that we enter territory where the framerate is unplayable, hence calling this an academic exercise.

Superposition AA Testing

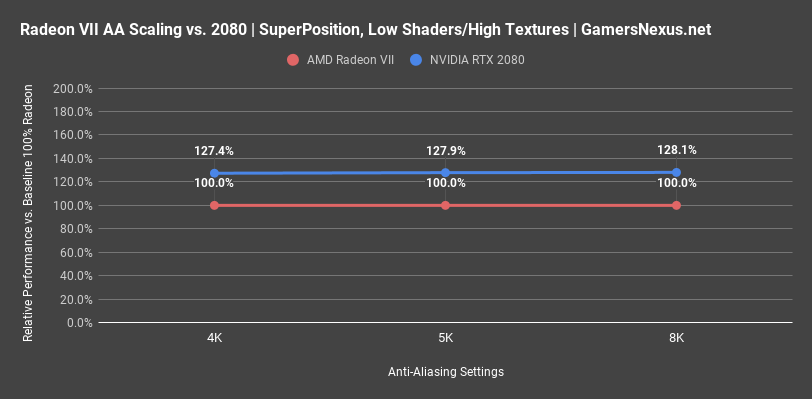

Starting with shaders set to “Low,” textures set to “High,” and resolution changes at 4K, 5K, and 8K, we saw that NVIDIA held a lead of 27.4% over the Radeon VII baseline at 4K, moving to a 27.9% lead at 5K (within error), and then a 28.1% lead at 8K. There’s really no change here from 4K to 8K, at least with regard to scaling, although obviously these resolutions become decreasingly playable as they increase.

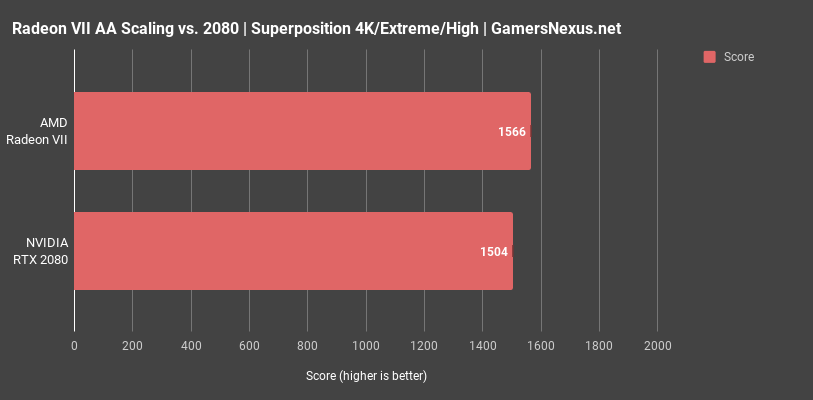

We ran a one-off test next with “Extreme” shaders, finding that AMD actually gained ground in this scenario. AMD operated at 1566 points on average, with NVIDIA at 1504 points. We reran this test four times and averaged the results, determining that this delta is outside of standard deviation.

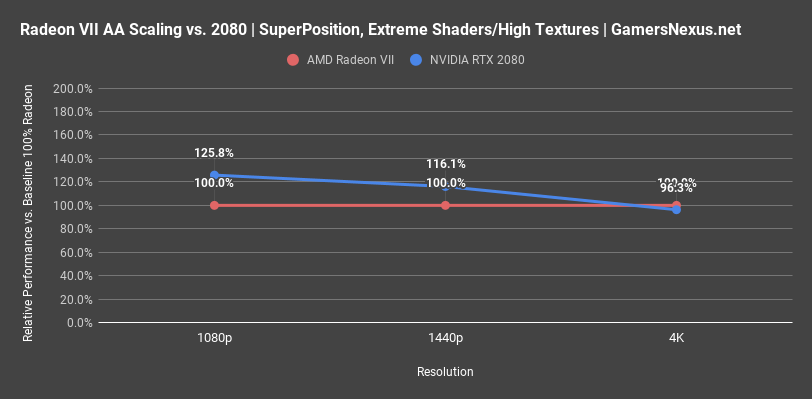

After learning that bigger differences emerge with Extreme shaders, we reran the tests from 1080p to 4K. At 1080p, NVIDIA’s RTX 2080 performed about 25.8% better than the Radeon VII card at 100% baseline. At 1440p, the RTX 2080’s advantage rapidly decayed to 16.1%. 4K results are too close and create a messy chart, but the RTX 2080 ends up actually outperformed by Radeon VII here, with Radeon VII holding a lead of a couple of percentage points. Now, in terms of “real” framerate, the benchmark was operating at about 11-12FPS AVG. This isn’t playable, clearly, and that advantage isn’t really realized since no one would realistically use these settings, but it is interesting to see how two cards can flip positions in different testing scenarios.

GTA V AA Testing

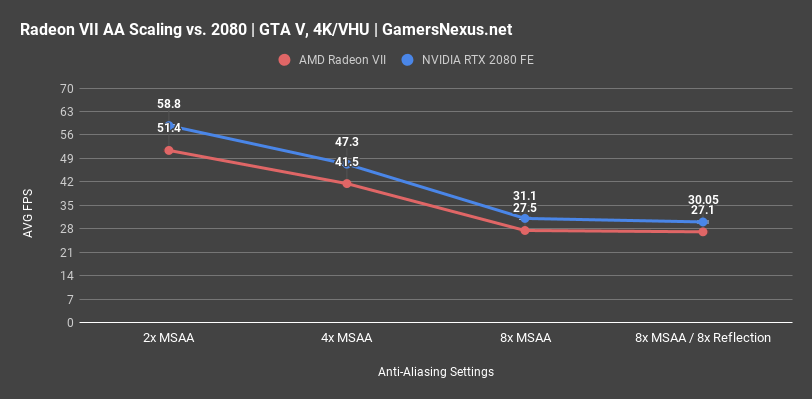

GTA V has a lot of anti-aliasing options, so we’ll exercise MSAA from 2x to 8x and then also enable 8x reflections MSAA. We’ll start this with just an AVG FPS chart at the different settings, which will best illustrate how the gap changes with each test.

At 4K with Very High and Ultra settings for everything, 2x MSAA puts us at 58.8FPS AVG for the 2080 and 51.4FPS AVG for the Radeon VII, but the more important indicator is that the 2080 holds a 14.4% lead over the Radeon VII card. At 4x MSAA, that lead doesn’t change much and scales down to 14.1%, effectively within error. At 8x MSAA, we start to see some movement. The AVG FPS is 27.5 on Radeon VII and 31FPS on the RTX 2080, with the RTX 2080’s lead decaying to 13%, down from an initial 14.4%. By then also adding 8x reflections MSAA, we see the difference change to a 10.8% advantage for the 2080 FE. Looking at the line graph in total, we can see that AMD does start recovering some losses toward the extreme end of the scale; it’s just that we’re in unplayable territory for other reasons.
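For readers who want to reproduce the lead percentages, the arithmetic is just the ratio of the two averages. The snippet below is a minimal sketch using only the AVG FPS figures quoted above; `percent_lead` is a helper name we made up for illustration.

```python
def percent_lead(faster_fps, slower_fps):
    """Lead of the faster card over the slower card, in percent."""
    return (faster_fps / slower_fps - 1.0) * 100.0


# AVG FPS pairs quoted in the text: (RTX 2080, Radeon VII) at 4K.
gta_v_4k = {
    "2x MSAA": (58.8, 51.4),
    "8x MSAA": (31.0, 27.5),
}

for setting, (rtx_2080, radeon_vii) in gta_v_4k.items():
    lead = percent_lead(rtx_2080, radeon_vii)
    print(f"{setting}: RTX 2080 leads by {lead:.1f}%")
```

The 8x MSAA figures work out to roughly 12.7%, which the text rounds to 13%.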

For reference, with the number of test passes we ran, standard deviation run-to-run was about 0.3FPS AVG. These results are highly repeatable.
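One way to judge whether a change in lead is "within error" is to propagate the run-to-run deviation into the lead percentage. The sketch below rests on several assumptions that are ours, not the article's: it applies the roughly 0.3FPS deviation to both cards at every setting, treats the two measurements as independent, and uses first-order error propagation for a ratio. Using the deviation directly, rather than the standard error of the averaged passes, makes the estimate conservative. `lead_with_uncertainty` is a hypothetical helper name.

```python
import math


def lead_with_uncertainty(fps_a, fps_b, sd_a=0.3, sd_b=0.3):
    """Return (lead, uncertainty) for fps_a over fps_b, in percentage points.

    First-order propagation for a ratio of independent measurements:
    the relative errors of the two averages add in quadrature.
    """
    ratio = fps_a / fps_b
    relative_error = math.sqrt((sd_a / fps_a) ** 2 + (sd_b / fps_b) ** 2)
    return (ratio - 1.0) * 100.0, ratio * relative_error * 100.0


# 2x MSAA figures from the text: RTX 2080 vs. Radeon VII.
lead, uncertainty = lead_with_uncertainty(58.8, 51.4)
print(f"2x MSAA lead: {lead:.1f}% +/- {uncertainty:.1f} points")
```

Under these assumptions, the 2x MSAA lead carries an uncertainty of a little under one percentage point, so a move from 14.4% to 14.1% is indistinguishable from noise. Because the deviation is a fixed FPS figure in this model, the uncertainty on the lead grows as framerates fall.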

Firestrike AA Testing

Firestrike remains one of the best tools for this type of synthetic workload. For this, we’ll be looking at GT1 and GT2 separately. GT1 heavily loads the GPU with polygons and tessellation, performs shadow and illumination crunching, and uses compute shaders for post processing and particle physics. GT2 is very heavy on compute shader workload and greatly increases pixels processed per frame, but reduces tessellation workload by more than 50%. GT2 should therefore run better on Radeon VII than GT1 would, relatively speaking.

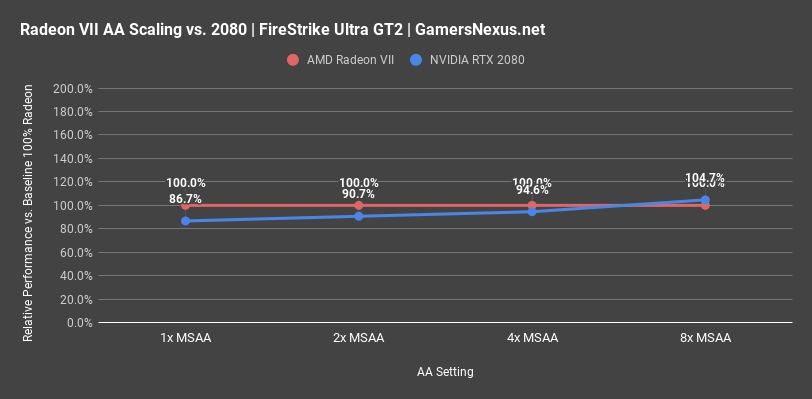

Starting with GT2, we see that the stock Firestrike Ultra settings have NVIDIA below AMD’s performance, at 86.7% of the baseline Radeon VII 100% performance. This is unique to this benchmark, thus far. At 2x MSAA, that gap shrinks, with NVIDIA at 90.7% of baseline performance. 4x MSAA brings us to 94.6% of baseline, with 8x MSAA finally allowing the RTX 2080 to surpass AMD and hold at 104.7% of baseline performance.
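Percent-of-baseline figures and percent-lead figures are not interchangeable, because the lead depends on which card sits in the denominator. The sketch below converts the GT2 numbers above into a lead for whichever card is ahead; `lead_from_baseline` and its return labels are made up for illustration.

```python
def lead_from_baseline(percent_of_baseline):
    """Convert a score expressed as a percent of a 100% baseline into a lead.

    Returns (leader, lead_percent), where leader is "baseline card" or
    "compared card" depending on which side is ahead.
    """
    if percent_of_baseline >= 100.0:
        return "compared card", percent_of_baseline - 100.0
    # The baseline card is ahead; its lead is measured against the slower card.
    return "baseline card", (100.0 / percent_of_baseline - 1.0) * 100.0


# RTX 2080 as a percent of the Radeon VII baseline in GT2, from the text.
gt2 = {"stock": 86.7, "2x MSAA": 90.7, "4x MSAA": 94.6, "8x MSAA": 104.7}

for setting, pct in gt2.items():
    leader, lead = lead_from_baseline(pct)
    print(f"{setting}: {leader} leads by {lead:.1f}%")
```

Here the baseline card is Radeon VII and the compared card is the RTX 2080. An RTX 2080 result of 86.7% of baseline corresponds to a Radeon VII lead of about 15.3%, not 13.3%.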

It makes sense that AMD would generally be most competitive in GT2, where compute workload and memory are both stressed. As for why NVIDIA starts to pull ahead once MSAA is brought to 8x, we are working with 3DMark’s team and others to try and better understand the specific behavior. Our estimation is that this may have to do with memory compression on NVIDIA, or maybe edge detection in anti-aliasing with this specific software implementation, or some other implementation-level advantage.

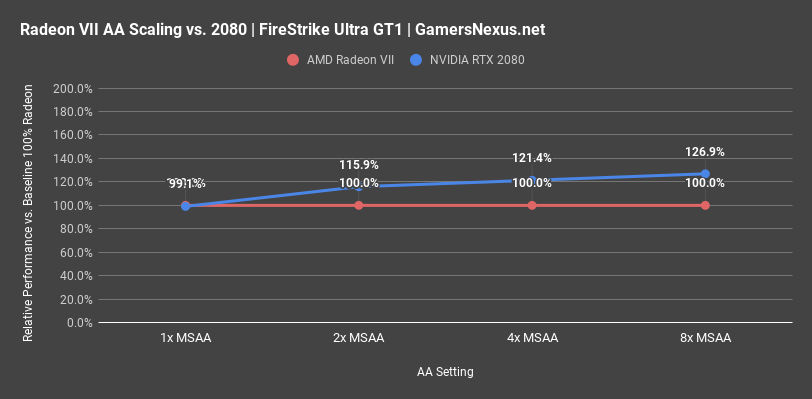

For GT1, where tessellation and polygons are heavier, we see the two cards start functionally equal under stock Firestrike Ultra settings, with NVIDIA gaining a notable 16% at 2x MSAA, 21% at 4x MSAA, and 27% at 8x MSAA.

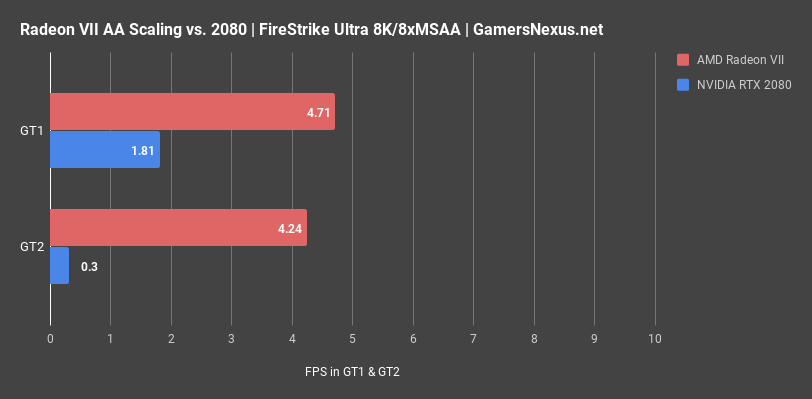

At 8K with 8x MSAA, we reach truly unusable levels of framerate, but do finally see Radeon VII pull far ahead of the RTX 2080. This is what it took to fully exhaust the memory of the RTX 2080, at which point it began choking as it fetched and dumped memory.

Far Cry 5 HD Textures + AA Testing

Far Cry 5 is next. For this one, we tested with SMAA, TAA, and HD Textures under different scenarios.

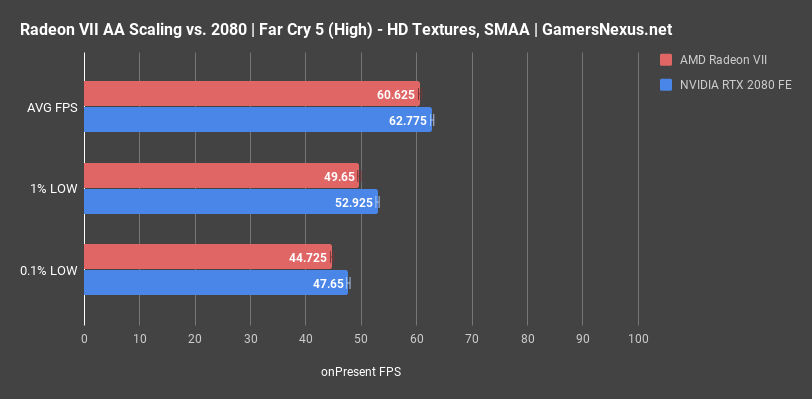

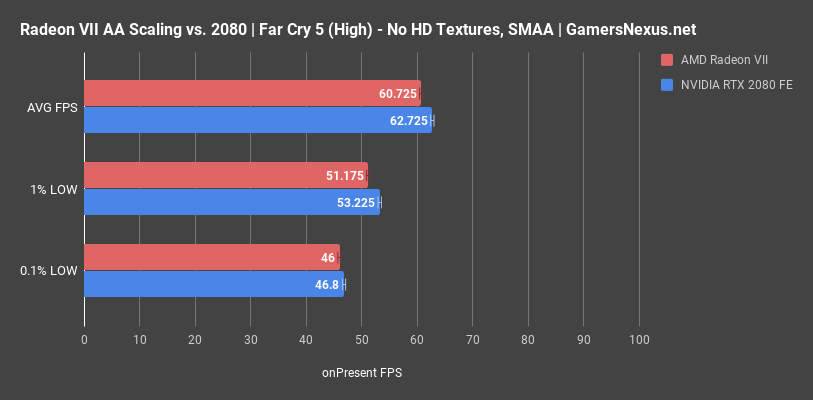

In the first test at 4K and using HD Textures with SMAA, we measured NVIDIA at 62.8FPS AVG and AMD at 60.6FPS AVG, putting NVIDIA about 3.5% ahead. 1% lows were similarly spaced, as were 0.1% lows.

To test scaling with different settings, we also ran a test with reduced VRAM consumption but otherwise identical settings. The results with HD Textures disabled were functionally identical to the results with HD Textures enabled. It’s no surprise that we’re within margin of error, as textures don’t really impact performance unless VRAM becomes a limitation. On both devices, quantitatively, the experience is unchanged from the previous chart. Qualitatively, the output is the same on each device. Again, NVIDIA is about 3.3% faster here.

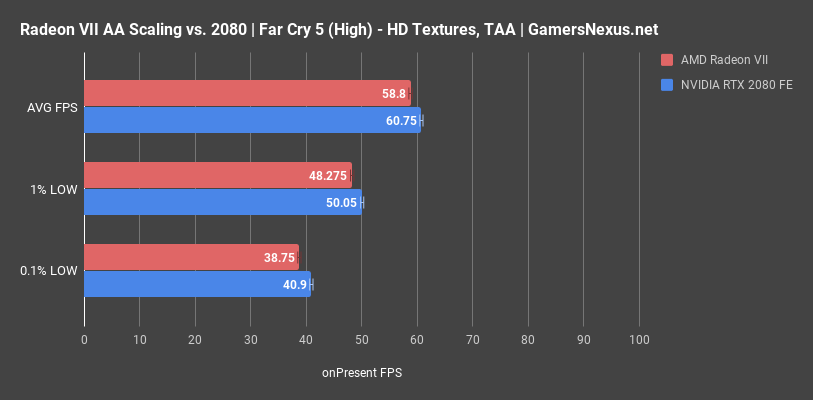

Switching instead to TAA, performance falls off a little bit for each device, but NVIDIA still maintains a 3.3% advantage in AVG FPS. HD Textures and anti-aliasing changes in Far Cry 5 aren’t enough to reduce or change the performance delta from card to card.

Conclusion: Not Entirely What We Expected

Our hypothesis wasn’t entirely right. It looks like we nailed it in a few instances, but Firestrike threw us off the trail. We have reached out to 3DMark’s team and NVIDIA’s engineers to try and better understand that specific performance scenario. The one instance where we saw massive uplift for Radeon VII was when we exhausted the VRAM on the RTX 2080, which was done by operating Firestrike Ultra at 8K resolution and 8x MSAA. This is, of course, entirely unplayable, and so we point again toward the phrasing that this is really just an exercise in research, not one which is particularly practical. At 8K/8x MSAA, we did see that Radeon VII operated around 4.7-4.8FPS AVG to the 2080’s ~1-1.7FPS AVG. This is a “large” improvement in percentage terms, and it comes from exhausting the framebuffer on the 2080, but it is clearly not playable on either device. Regardless, it is interesting data, and could help to better understand other features of the card in future tests.

Editorial, Testing: Steve Burke

Video: Andrew Coleman