Elgato’s 4K60 Pro capture card is an internal PCIe x4 capture card capable of handling resolutions up to 3840x2160 at 60 frames per second, as the name implies. It launched in November with an MSRP of $400, and has remained around that price since.

The Amazon reviews for the 4K60 Pro are almost worthless, because Amazon considers the 4K60 Pro and Elgato’s 1080p-capable HD60 Pro to be varieties of the same product and groups their reviews together. There are only twenty-something reviews of the 4K60 compared to nearly two thousand for the HD60, so that may skew the results slightly. Of the three single-star reviews that are actually for the 4K60, one is from a gentleman who was expecting a seven-inch-long PCIe card to work in a laptop. As of this writing, nobody at all has reviewed it on Newegg, and it’s on sale for $12 off in both locations.

It doesn’t seem like these are flying off the shelves, which probably speaks more to the current demand for 4K 60FPS streaming than the product itself--it’s the cheapest of a very small number of 4K60-capable capture cards, and there’s not any consumer-level competition to speak of. $400 may seem like a lot, but the existing alternatives are much more expensive, like the Magewell Pro Capture HDMI 4K Plus, which (besides having an awful name) costs around $800-$900. The Magewell does have a heatsink and a fan, though, which the 4K60 Pro does not--more on that later.

This Elgato 4K60 Pro review looks at the capture card’s quality and capabilities for both console and PC capture, and also walks through some thermal measurements taken with thermocouples.

Sample Footage

Capabilities/Software

Elgato’s standard “Game Capture for Windows” software does not work for 4K capture. It will recognize 4K60 capture cards and allow 720p or 1080p capture, but won’t accept a 2160p signal. For that, Elgato provides a separate bare-bones “4K Capture Utility for Windows.” This software is Windows 10 64-bit only (we even had some driver issues on an outdated version of 10, solved by updating). There is no internal encoder on the 4K60 Pro, but Elgato’s software allows a choice between pure CPU or GPU-accelerated encoding, as well as a simple Mbps slider that goes up to 140 (at 2160p). This is the software we’ve used with our console tests because it’s so simple, but it is possible to apply finer adjustments through third-party utilities like OBS or VirtualDub, which have no problems hooking into the card. The 4K60 doesn’t handle HDR at all, but neither do many 4K monitors, including the one we use for console testing.
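For a rough sense of what that bitrate slider means for storage, the arithmetic can be sketched in a few lines of Python. This is only an estimate under stated assumptions: it treats the slider value as a constant video bitrate, counts 1 Mbps as 1,000,000 bits per second, and ignores audio and container overhead. The function name `recording_size_gb` is our own and not part of any Elgato tool.

```python
def recording_size_gb(bitrate_mbps, seconds):
    """Estimate recording size in decimal gigabytes.

    Assumes a constant bitrate and ignores audio and container overhead,
    so real files will differ somewhat.
    """
    bits = bitrate_mbps * 1_000_000 * seconds  # total bits written
    return bits / 8 / 1_000_000_000            # bits -> bytes -> GB


# 140 Mbps is the slider maximum at 2160p mentioned above.
for minutes in (1, 10, 60):
    size = recording_size_gb(140, minutes * 60)
    print(f"{minutes:>2} min at 140 Mbps: about {size:.2f} GB")
```

At the maximum setting this works out to roughly a gigabyte per minute, which is worth keeping in mind when picking a capture drive.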

Hardware

Before discussing the card itself, it’s important to repeat that it doesn’t do encoding. Elgato recommends a 6th gen i7 or an R7 in conjunction with a GTX 10XX or RX Vega card, and although we’ve used an i7-4790K and GTX 960 with no problems, the card definitely needs decent hardware to back it up. NVENC encoding is an especially good choice for GTX 1080 and 1080 Ti graphics cards, which have two NVENC engines. Using NVENC should keep the encoding workload from affecting other GPU-reliant tasks.

I/O on the back of the card is limited to one input and one output, both HDMI. We prefer DisplayPort, but HDMI is the most logical choice for a device designed to connect to consoles and TVs. The “out” simply passes through the input, which Elgato claims is lag-free. Whether that’s true or not, it’s close enough that we didn’t notice any delay, and it makes it easy to play the game normally on one screen with the capture software open on another.

As a piece of hardware, it’s been completely idiot-proofed. There’s a full-coverage backplate and a metal cover over the face of the card, leaving only a sliver of PCB and the PCIe pins exposed. It’d take conscious effort to damage it with ESD, or with a hammer for that matter. Everything about Elgato’s advertising and the card itself implies that it’s meant for an audience that doesn’t have much experience with building computers, i.e. console gamers and amateur streamers.

The backplate would also be great for sinking heat away from hot areas on the PCB, except that there are no thermal pads or any contact points at all with the metal shell. There are some small vent holes cut into the front, but it’s otherwise sealed, even at the ends. In the course of using the 4K60 we’ve noticed that it gets pretty warm without active airflow, even on an open test bench, to the point of getting unpleasantly hot to touch. Again, that’s without any thermal pads or direct contact with the metal plates. We use a 140mm NZXT radiator fan to blow air over the bench during capture, which seems to help a lot, but we’ve seen multiple pictures of builds where 4K60 or HD60 Pro cards are jammed in next to the GPU backplate with no room for ventilation. We’d be interested to hear whether the HD60 Pro also has problems keeping cool, since it uses the same cover design but with additional heat from an internal encoder.

Thermal Testing

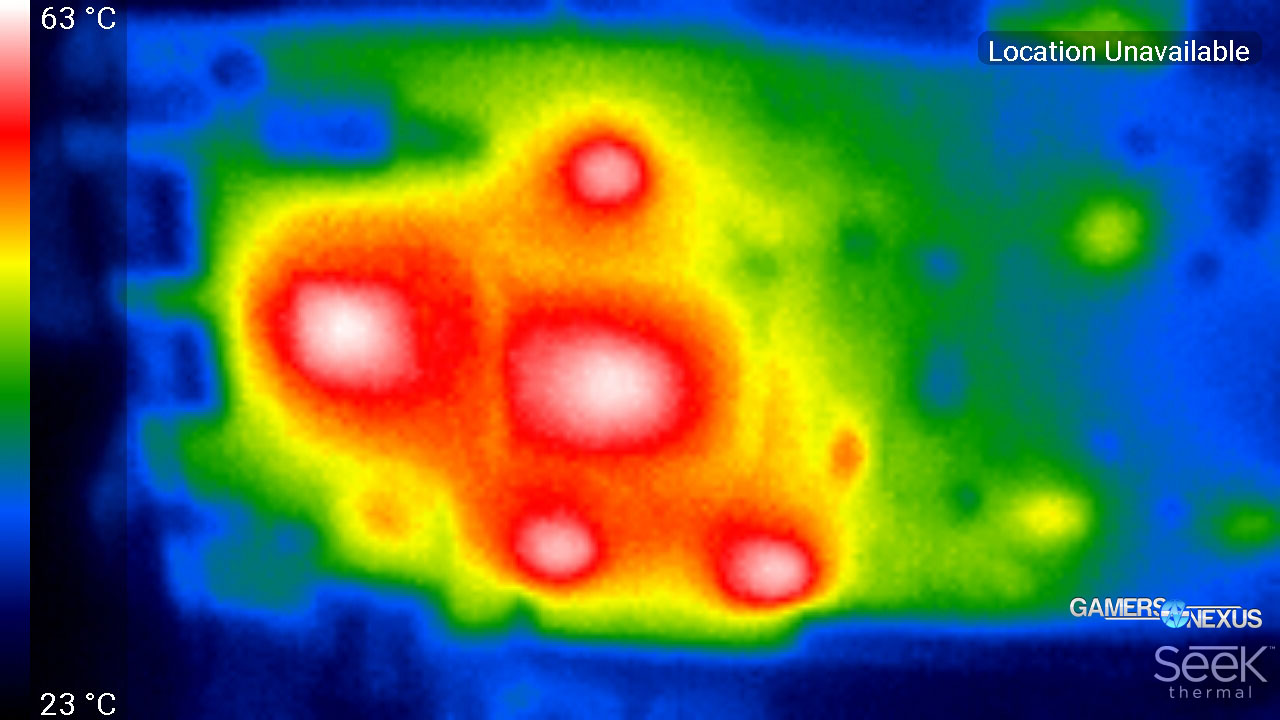

We’ve been curious for some time about just how hot the 4K60 can actually get during use. The first step to answering this was taking the metal cover off and pointing a thermal camera at the card to identify hot spots. The thermal camera we use is by no means a precision instrument, but it indicated temperatures of 50 to 60 Celsius on specific chips right away, even without the covers on. One chip on the back heated up, apparently a “dual port HDMI 1.4 receiver.” On the opposite side, a large Silicon Image/Lattice Semiconductor chip and four other chips surrounding it heated up. The hottest chips on both sides of the PCB are almost directly opposite each other.

We decided to see how much the cover contributes to insulating the card and raising temperatures. This was just a quick-and-dirty comparison, so temperatures are expressed as unmodified readings rather than dT and there are plenty of uncontrolled variables. The results are conclusive enough that it doesn’t matter.

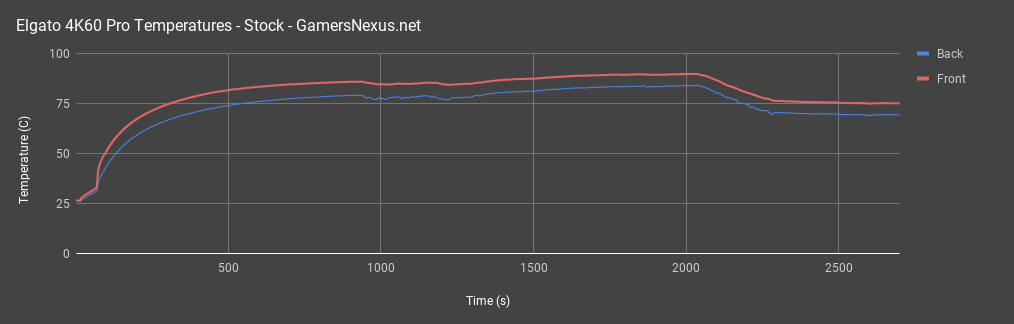

Cover On

We ran our first test with the cover on. One probe was placed on the backplate side over the HDMI receiver chip, and one was placed on the large Silicon Image chip on the front. The test was started with the system off, so there were a few seconds of near-ambient temperatures at the beginning (it was still a little warm from earlier use). After that, there was a relatively slow increase in temperature as the system was turned on. The Xbox was off at this point, all software was closed, and the card wasn’t doing any work, but it did still generate some warmth while idling.

Once the Xbox was turned on and the card began passing through an HDMI signal, temperatures rose abruptly. We had the capture utility open, which doesn’t seem to matter, but were not recording. The card was just passing signal from the Xbox to our monitor during this part of the test. We waited for the temperatures to reach steady-ish state, which happened around 86C on the front and 79C on the back, and then began to record.

Once recording started (Elgato 4K capture utility, GPU accelerated), the temperature initially went down and began to fluctuate more. That’s because our GTX 1080 FTW had been idling until that point and the fans were not spinning, but the NVENC load caused them to activate and begin moving air across our test bench. We could control for this, but it’s a good example of the kind of behavior that might be seen in a real use case. Temperatures continued to climb, and then levelled out around 90C on the front and 84C on the back. We experimented with switching to OBS at higher settings during this part of the test, but there wasn’t a noticeable effect.

We then placed a 140mm fan on top of the test bench, which is what we do when we actually need to capture footage. Instantly the PCB temperatures began to drop, but the ambient temperature rose slightly as hot air was dumped off the test bench onto our probe, which is part of why it’s not included on these charts. Temperatures finally levelled out around 75C on the front and 69C on the back using the fan. We stopped logging here, but the card retained heat in the metal plates for quite a long time after being turned off.

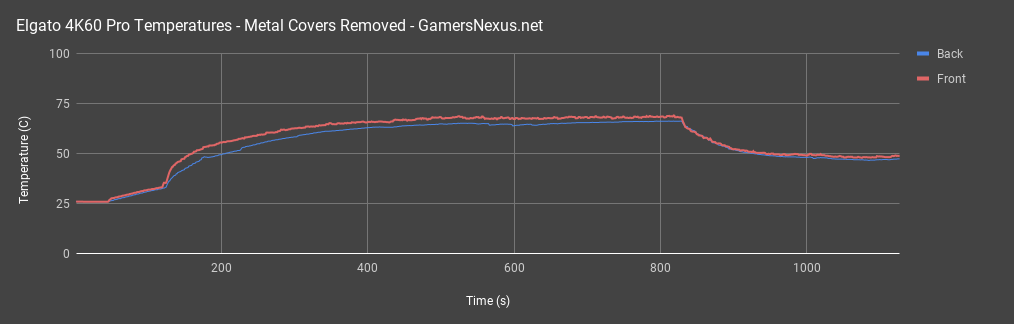

Cover Off

We began the second test with the cover off and the probes unmoved. Again, there was a period of near-ambient temperatures followed by an increase in temperature as the system was turned on. We didn’t graph this, but the card idled around 35C with the cover off and no HDMI signal going through it. 35C (or a bit more with the cover on) is fine--it’s not going to overheat just by idling. Once the Xbox was turned on and the card began passing a signal, temperatures rapidly rose without the benefit of a metal case to soak up heat. It was also less insulated, and reached steady state around 69C on the front and 66C on the back, even once recording started.

Again, adding a fan to the bench raised ambient temperatures briefly, but the card’s temperature lowered dramatically. The test finished up around 47C on the back and 49C on the front. The duration of this whole test was much shorter than the first one (note the time scale), as the lack of metal to soak up and retain heat made temperature changes much more rapid.
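To put the two runs side by side, the approximate steady-state readings quoted in the text can be dropped into a short Python sketch that subtracts cover-off from cover-on at each stage. This is only a convenience for comparing the numbers already given: the readings are raw temperatures rather than dT, ambient was not controlled, and the names `READINGS_C` and `cover_penalty` are our own labels for illustration. The cover-off recording row reuses the passthrough figures, since that run held steady once recording started.

```python
# Approximate steady-state readings in degrees Celsius, as quoted in the text.
# Raw readings, not delta-over-ambient; ambient was not controlled.
READINGS_C = {
    "cover_on": {
        "passthrough": {"front": 86, "back": 79},
        "recording": {"front": 90, "back": 84},
        "fan": {"front": 75, "back": 69},
    },
    "cover_off": {
        "passthrough": {"front": 69, "back": 66},
        "recording": {"front": 69, "back": 66},  # unchanged once recording began
        "fan": {"front": 49, "back": 47},
    },
}


def cover_penalty(stage):
    """Return how much hotter each probe read with the cover on, in degrees C."""
    on = READINGS_C["cover_on"][stage]
    off = READINGS_C["cover_off"][stage]
    return {probe: on[probe] - off[probe] for probe in on}


for stage in ("passthrough", "recording", "fan"):
    print(stage, cover_penalty(stage))
```

By these figures the cover costs somewhere between 13C and 26C depending on probe and stage, with the largest gap appearing once the fan is running.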

Conclusion

We never saw any performance degradation even at 86C, but there’s no telling how sustained high temperatures will affect the lifespan of the card. Active airflow is a must, and taking the cover off of the card is an optional but even more helpful upgrade. There are just four Phillips-head screws and an LED cable holding the plates on, so it hardly even counts as a mod. We’ll be testing some more cooling options in a future piece, but putting a thermal pad between the PCB and the backplate and sticking some little heatsinks onto the front-side chips could be a good way to keep the fancy cover on while also cooling the card.

It’s weird reviewing a product that doesn’t yet have competition, or even much demand. Elgato’s products cater to streamers and YouTubers, but the number of people producing and consuming 4K video is still relatively small, and even then a capture card is only necessary for external devices. For Elgato’s target audience, that boils down to the PS4 Pro or Xbox One X, which often don’t run above 30FPS even at standard definition. The specs page for the card lists inputs as “PlayStation 4, Xbox One, unencrypted HDMI.” For PC gamers, it could be used to stream >1080p games running on a second PC to free up system resources.

There’s no point in buying this model unless it’s specifically going to be used for 4K, 60 frames per second capture. We saw nothing to suggest that it would do 1080p/60FPS capture better than another card like the HD60 Pro, and the lack of an internal encoder means it will actually demand more of the system it’s installed in. There are also other cards which can capture at 4K 30 FPS, like the Datapath card we’ve used in the past for VR benchmarks, or the $200 Blackmagic Intensity Pro 4K (we can’t vouch for its quality). We specifically needed a method to capture 3840x2160 console footage at a minimum of 60 FPS in order to calculate framerates, and for that purpose the 4K60 is a bargain and works great.

Editorial, Testing: Patrick Lathan

Video: Andrew Coleman

Host: Steve Burke