UltraWide GPU Gaming Benchmark - Graphics Card Performance at 3440x1440


Monitors have undergone a revolution over the past few years. 1080p lost its luster as 1440p emerged, followed later by 4K (which still hasn't quite caught on), and that's to say nothing of the refresh-rate battles. 144Hz has subsumed 120Hz, both sort-of “premium” refresh rates, and the adaptive synchronization technologies G-Sync and FreeSync have further complicated the monitor buying decision.

But we think the most interesting recent trend has to do with aspect ratios. Modern displays don't really get called “widescreen” anymore; there's no point, since they're all wide. Today, we've got “UltraWides,” much like we've got “Ultra HD” (and whatever else has the U-word thrown in front of it), and they're no gimmick. UltraWide displays run a nominal 21:9 aspect ratio (21 pixels across for every 9 pixels down; think of it as run over rise), a noticeable difference from the 16:9 of normal widescreens. These UltraWide displays afford greater production capabilities by effectively serving the role of two side-by-side displays, just with no bezel between them. They're also easier on desk space, since a single display is simpler to center on a smaller desk than two.
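“21:9” is a marketing label rather than an exact ratio. As a minimal sketch (the helper name `aspect_ratio` is our own, purely illustrative), a resolution can be reduced to its simplest ratio with the greatest common divisor, alongside its decimal width-to-height value:

```python
from math import gcd

def aspect_ratio(width: int, height: int) -> tuple[int, int, float]:
    """Reduce width x height to lowest terms; also return width/height as a decimal."""
    d = gcd(width, height)
    return width // d, height // d, width / height

for w, h in [(1920, 1080), (2560, 1440), (2560, 1080), (3440, 1440)]:
    rw, rh, dec = aspect_ratio(w, h)
    print(f"{w}x{h}: {rw}:{rh} ({dec:.3f}:1)")
```

Both common UltraWide resolutions come out slightly wider than a literal 21:9 (2.333:1): 3440x1440 reduces to 43:18 (about 2.389:1) and 2560x1080 to 64:27 (about 2.370:1), while 1920x1080 and 2560x1440 are both exactly 16:9.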

For gaming, the UltraWide argument is two-fold: Greater immersion with a wider, more “full” space, and greater peripheral vision in games which may benefit from a wider field of view. Increased pixel throughput more heavily saturates the pipeline, of course, which means that standard 1080p and 1440p benchmarks won't reflect the true video card requirements of a 3440x1440 UltraWide display. Today, we're benchmarking graphics card FPS on a 3440x1440 Acer Predator 34” UltraWide monitor. The UltraWide GPU performance benchmark includes the GTX 980 Ti, 980, 970, and 960 from nVidia and the R9 390X, 380X, 290X, and 285 from AMD.

3440x1440 isn't the only UltraWide resolution out there. Some $300 options run 2560x1080 to help reduce cost. This isn't a resolution we test, but it does offer an affordable middle ground for folks who can't run two side-by-side, higher-resolution displays. Production users benefit most obviously from such a display. For anyone considering 2560x1080 GPU performance, check out our Game Benchmarks page and note that your performance will be somewhat better than in our 1440p (2560x1440) charts, since 2560x1080 renders 25% fewer pixels.

Pixel count for a 3440x1440 display is ~4.95 million. Comparatively, 1080p (1920x1080) produces ~2.1 million pixels, and 1440p (2560x1440) produces ~3.7 million. That places the 3440x1440 UW resolution noticeably higher in pixel count than both of these 16:9 go-to resolutions, meaning gaming performance will be reduced by nature of increased pixel throughput. The most common 4K resolution (3840x2160) pushes ~8.3 million pixels, making it more intensive on the GPU than 3440x1440 UltraWides.
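The pixel math above is easy to reproduce. This short sketch (the resolution list and names are our own, for illustration) multiplies out each resolution and expresses it relative to 3440x1440. That ratio is only a rough proxy for relative GPU load, assuming load scales with pixel count; in practice it doesn't scale perfectly, since some per-frame work is independent of resolution.

```python
# Resolutions discussed in this article (name -> (width, height)).
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "2560x1080 UW": (2560, 1080),
    "1440p": (2560, 1440),
    "3440x1440 UW": (3440, 1440),
    "4K UHD": (3840, 2160),
}

def pixel_count(width: int, height: int) -> int:
    return width * height

uw = pixel_count(*RESOLUTIONS["3440x1440 UW"])
for name, (w, h) in RESOLUTIONS.items():
    px = pixel_count(w, h)
    # A ratio above 1.00 means more pixels per frame than 3440x1440.
    print(f"{name:>13}: {px:>9,} px ({px / uw:.2f}x of 3440x1440)")
```

Put another way, 3440x1440 pushes about 34% more pixels than 2560x1440 and roughly 2.4 times as many as 1080p, while 4K pushes about 67% more than 3440x1440.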

That should give some perspective on performance for various display formats.

Some Notes & Impressions on UltraWide Monitors

We've included a few screenshots throughout this benchmark that provide a better understanding of how a UW display impacts the gaming experience. Some games do not support UltraWide resolutions. Older titles and indie titles are the two most common offenders, which is understandable: older games predate the format, and smaller teams may overlook it. Competitive games, meanwhile, sometimes intentionally forbid UltraWide resolutions. Dirty Bomb is a good example of a game that didn't seem to support 3440x1440 when we tested its beta. CSGO has HUD issues. Shadow of Mordor has an odd, slight stretch that adds some weight to Talion.

So it's not perfect, but a lot of games do work well with UltraWides. Racing games and flight games, like DiRT and Star Citizen, offer greater peripheral vision that is both immersive and mechanically advantageous; shooters like Black Ops III and Battlefront (where FOV is user-controlled) reveal more of the player's flanks without the bezel that multi-monitor setups introduce.
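Why does a wider panel reveal more of the flanks? Many games use what's commonly called “Hor+” scaling: the vertical FOV stays fixed and the horizontal FOV grows with aspect ratio. The sketch below assumes Hor+ behavior and a horizontal FOV defined at 16:9; how any particular game (Black Ops III and Battlefront included) defines its FOV slider is not something we've verified, so treat the numbers as illustrative.

```python
import math

def hor_plus_fov(hfov_deg: float, from_aspect: float, to_aspect: float) -> float:
    """Convert a horizontal FOV between aspect ratios, holding vertical FOV constant.

    Relies on tan(h/2) = tan(v/2) * aspect, so tan(h/2) scales linearly with aspect.
    """
    half = math.radians(hfov_deg) / 2
    return math.degrees(2 * math.atan(math.tan(half) * to_aspect / from_aspect))

wide = hor_plus_fov(90.0, 16 / 9, 3440 / 1440)
print(f"90 deg at 16:9 -> {wide:.1f} deg at 3440x1440")
```

Under that assumption, the same roughly 59-degree vertical view that spans 90 degrees horizontally at 16:9 spans about 107 degrees at 3440x1440, which is where the extra peripheral vision comes from.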

Few technologies really impress us, but as simple as it seems, widening the display to 21:9 is one of those experience-improving changes. A full review of the 34” Acer Predator UltraWide (curved) is forthcoming, but for now, let's get to the benchmarks.

Test Methodology

We tested using our 2015 multi-GPU test bench. Our thanks to supporting hardware vendors for supplying some of the test components.

The latest AMD drivers (16.1.1 hotfix) were used for testing. nVidia's 361.75 drivers were used for testing the latest games. Game settings were manually controlled for the DUT (device under test). All games were run at the presets defined in their respective charts. We disable vendor-specific technologies in games, like The Witcher 3's HairWorks and HBAO+. All other game settings are defined in the respective game benchmarks, which we publish separately from GPU reviews. Where testing is performed manually, our test courses are documented in that content as well, allowing others to replicate our results by studying our bench courses.

Each game was tested for 30 seconds in an identical scenario, three times in total for consistency. The results in the tables are averages of these three runs. This produces several thousand frames per test pass and gives us high confidence in our ability to reproduce results, as the short run length is less likely to introduce variance from technician input. Short runs also make multi-pass testing easier, which sets our benchmarks apart for their dedication to validating results.
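The averaging step is simple, but for clarity, here's a minimal sketch of combining three passes. The per-run FPS values are made up, and the spread check (max minus min as a percentage of the mean) is a hypothetical repeatability metric of our own, not something the methodology above specifies.

```python
from statistics import mean

def combine_runs(run_avgs: list[float]) -> tuple[float, float]:
    """Return (mean of the per-run averages, max-min spread as % of that mean)."""
    avg = mean(run_avgs)
    spread_pct = (max(run_avgs) - min(run_avgs)) / avg * 100
    return avg, spread_pct

# Hypothetical AVG FPS from three 30-second passes of the same scenario.
runs = [61.0, 60.0, 62.0]
avg, spread = combine_runs(runs)
print(f"reported AVG FPS: {avg:.1f} (run-to-run spread {spread:.1f}%)")
```

A small spread suggests the three passes agree closely enough for the averaged figure to be trusted; a large one would flag a pass worth re-running.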

| GN Test Bench 2015 | Name | Courtesy Of | Cost |
|---|---|---|---|
| Video Card | This is what we're testing! | - | - |
| CPU | Intel i7-5930K CPU | iBUYPOWER | $580 |
| Memory | Kingston 16GB DDR4 Predator | Kingston Tech. | $245 |
| Motherboard | EVGA X99 Classified | GamersNexus | $365 |
| Power Supply | NZXT 1200W HALE90 V2 | NZXT | $300 |
| SSD | HyperX Savage SSD | Kingston Tech. | $130 |
| Case | Top Deck Tech Station | GamersNexus | $250 |
| CPU Cooler | NZXT Kraken X41 CLC | NZXT | $110 |

Average FPS, 1% low, and 0.1% low values are measured. We do not measure maximum or minimum FPS results, as we consider these numbers to be pure outliers. Instead, we take an average of the lowest 1% of results (1% low) to show real-world, noticeable dips; we then take an average of the lowest 0.1% of results (0.1% low) to show severe spikes. Charts list the core clock of each card as advertised by vendors.
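For readers who want to compute these metrics from their own frametime logs, here's a minimal sketch. It assumes that “results” means per-frame instantaneous FPS (1000 divided by the frametime in ms) and that average FPS is total frames divided by total seconds. Our exact tooling isn't described here, so the function names and both conventions are illustrative assumptions.

```python
from statistics import mean

def average_fps(frametimes_ms: list[float]) -> float:
    """Total frames divided by total elapsed seconds."""
    return len(frametimes_ms) / (sum(frametimes_ms) / 1000.0)

def low_fps(frametimes_ms: list[float], per_mille: int) -> float:
    """Mean instantaneous FPS across the slowest per_mille/1000 of frames.

    per_mille=10 gives the '1% low'; per_mille=1 gives the '0.1% low'.
    Integer math avoids float rounding when sizing the slice.
    """
    fps = sorted(1000.0 / ft for ft in frametimes_ms)  # slowest frames first
    k = max(1, len(fps) * per_mille // 1000)
    return mean(fps[:k])

# Synthetic capture: 990 smooth 10 ms frames plus 10 hitches at 50 ms.
frames = [10.0] * 990 + [50.0] * 10
print(f"AVG {average_fps(frames):.1f} | 1% low {low_fps(frames, 10):.1f} | "
      f"0.1% low {low_fps(frames, 1):.1f}")
```

The ten hitches barely move the average (about 96 FPS versus 100 FPS for a perfectly smooth capture), but the 1% low drops to 20 FPS and exposes them immediately. That's why the lows are worth reporting alongside AVG FPS.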

Adaptive synchronization technologies are always disabled for our GPU benchmarks.

Video Cards Tested

- $650 nVidia GTX 980 Ti Reference (x2 for SLI)

- $480 MSI GTX 980 Gaming 4G

- $330 EVGA GTX 970 SSC

- $230 EVGA GTX 960 SSC 4GB

- $408 MSI R9 390X Gaming 8G

- PowerColor R9 290X 4GB (discontinued)

- $250 Sapphire Nitro R9 380X 4GB

- XFX R9 285 DD 2GB (replaced by R9 380)

Game Testing Methodologies

Game patches often impact individual card performance post-launch, but our methodologies remain more-or-less the same:

- Call of Duty: Black Ops III Performance Research

- Call of Duty: Black Ops III GPU Benchmark

- Grand Theft Auto V Graphics Optimization Guide

- Grand Theft Auto V GPU Benchmark

- The Witcher 3 Graphics Optimization Guide

- The Witcher 3 GPU Benchmark

- Just Cause 3 GPU Benchmark

- Fallout 4 GPU Benchmark

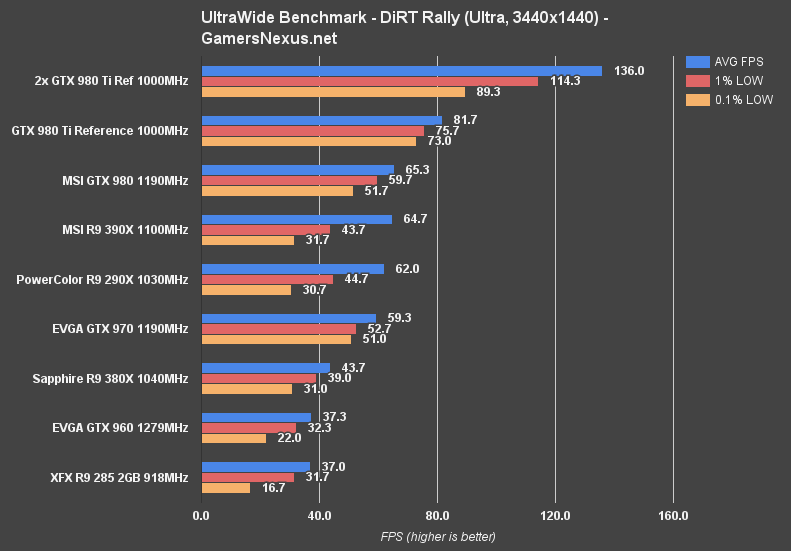

UltraWide 3440x1440 Benchmark – DiRT Rally – GTX 980 Ti vs. 970, 390X, 380X

DiRT Rally excels as an UltraWide game. Its cockpit-oriented gameplay makes the additional viewport width useful for both immersion and mechanics, and the game is natively suited to UltraWide displays.

DiRT Rally is more-or-less playable above 30FPS, though we prefer to be in the 40s as a baseline for acceptability during gameplay. This isn't the kind of title where a constant, fluid 60FPS is necessary for survival; it's more arcadey and, although fluidity is certainly better as the framerate nears 60, something in the 40-45 range is acceptable.

The 1% and 0.1% low dips are bad enough with the GTX 960 that it becomes a tough sell for Ultra at 3440x1440, though tanking the settings to 'medium' would allow a reasonable experience on even a 960. The 380X and up, in our above chart, are about where you'd want to be for the best experience.

The GTX 980 Ti outperforms the GTX 980 by 22.3%; the GTX 980 outperforms the R9 390X by 0.9%, but the 980 has significantly tighter low metrics; the R9 390X outperforms the GTX 970 by 8.71%.
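Relative figures like these compound rather than add. Assuming each percentage is measured against the slower card's average FPS (a common convention, though not stated explicitly here), the sketch below chains the three DiRT Rally figures above to estimate the GTX 980 Ti's lead over the R9 390X and the GTX 970. The output is derived only from the stated percentages, not from any new measurement.

```python
import math

def pct_faster(fast_fps: float, slow_fps: float) -> float:
    """How much faster fast_fps is than slow_fps, as a percent of the slower result."""
    return (fast_fps / slow_fps - 1) * 100

def chain_pct(*leads_pct: float) -> float:
    """Combine 'A beats B by x%, B beats C by y%, ...' into A's lead over the last card."""
    return (math.prod(1 + p / 100 for p in leads_pct) - 1) * 100

# DiRT Rally: 980 Ti > 980 by 22.3%, 980 > 390X by 0.9%, 390X > 970 by 8.71%.
print(f"980 Ti over 390X: {chain_pct(22.3, 0.9):.1f}%")
print(f"980 Ti over 970:  {chain_pct(22.3, 0.9, 8.71):.1f}%")
```

Under that assumption, the 980 Ti leads the 390X by about 23.4% and the 970 by about 34.1%, slightly more than simply summing the individual percentages would suggest.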

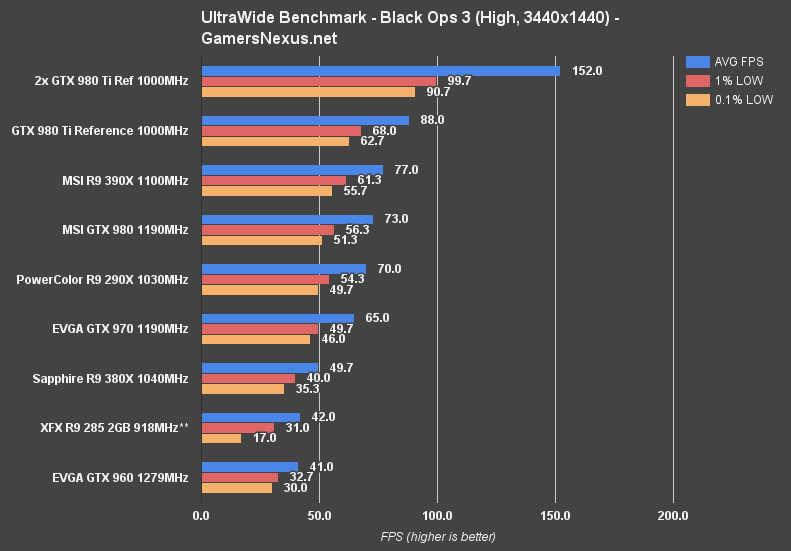

UltraWide 3440x1440 Benchmark – Black Ops III – GTX 980 Ti vs. 970, 390X, etc.

Black Ops III has proven exceptionally well-optimized as the devs have rolled out performance patches. SLI in particular produces a worthwhile gain over single-GPU solutions, more so in Black Ops than in any other game we're currently testing. We increased FOV to 110° for Black Ops III testing at UltraWide. The maximum FOV is 120°, but at that point the image starts to look a little distorted.

Note that the R9 285 results carry asterisks. This is because we noticed a visual degradation in quality on the 2GB 285, even though the game's graphics settings were still configured to 'high' across the board. Texture quality was substantially worse and looked as if it had been severely and silently downgraded, but performance was bolstered as a result. We starred these results because we don't think they're a like-for-like comparison with the rest of the chart, which tests all the cards at fixed 'high' settings. It seems as if the game attempts to intelligently lower settings when it deems it necessary, possibly in response to the 285's 2GB of VRAM.

BLOPS III demands a higher framerate. With SLI, the impressively high AVG FPS isn't nearly as interesting as the tightly timed 1% and 0.1% low metrics, a consistency we rarely observe in other games. Everything from the GTX 970 up is capable of hitting 60FPS averages using our 'high' settings at 3440x1440. 'Extra' is excessively demanding, as we've discussed previously, and so was excluded from these tests.

The 980 Ti outperforms the 390X by 13.3% and the 390X outperforms the GTX 980 by a close 5.3%.

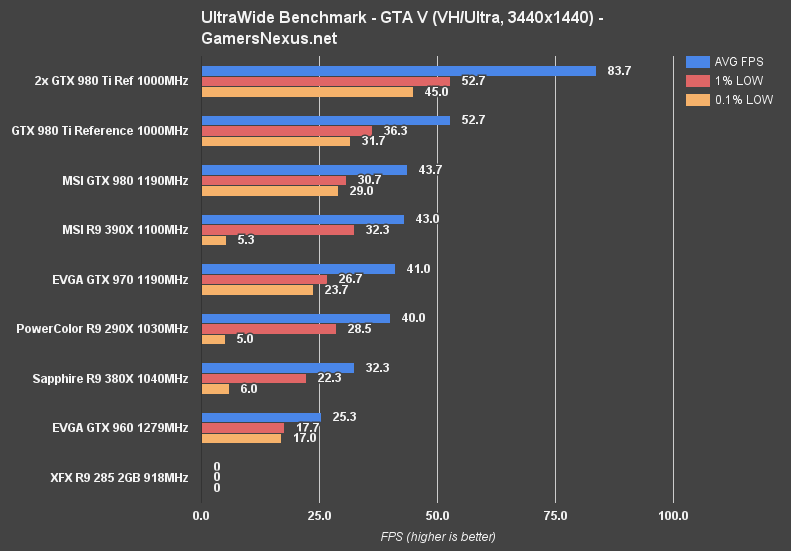

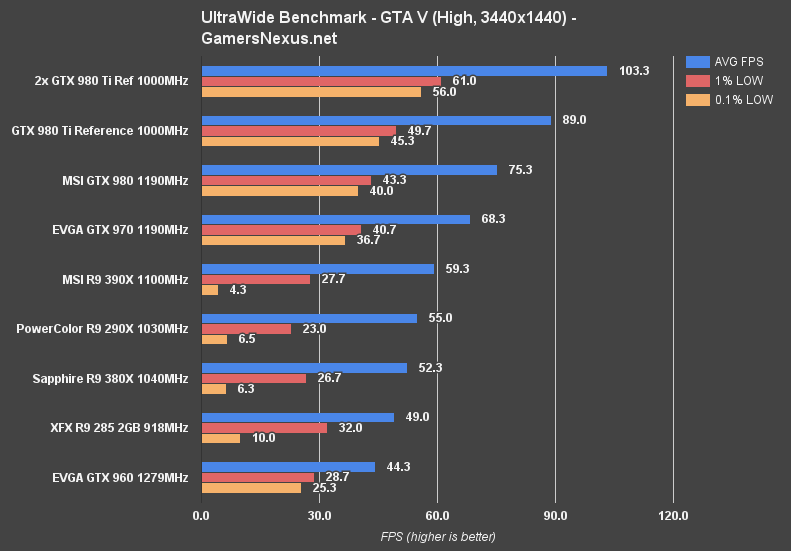

UltraWide 3440x1440 Benchmark – GTA V – GTX 980 Ti vs. 970, 390X, etc.

(The XFX R9 285 could not finish the above bench; the card couldn't handle the workload and crashed each time.)

At Ultra, GTA V pushes SLI just above 60FPS, and that's where the >60FPS configurations stop. AMD cards experienced severe frame-dropping issues during our GTA V tests that were consistent across all benchmark scenarios. We were not able to work around these and will be speaking with AMD to try to verify the issue. Of the games tested, GTA V was the only one where AMD's frametime issues were this severe.

Anything from the GTX 970 up remains fairly playable at 'high' with GTA V at our UltraWide resolution. Lower-spec cards, like the R9 380X, would support 'high' at 3440x1440 somewhat reasonably if the 1% and 0.1% low metrics were resolved.

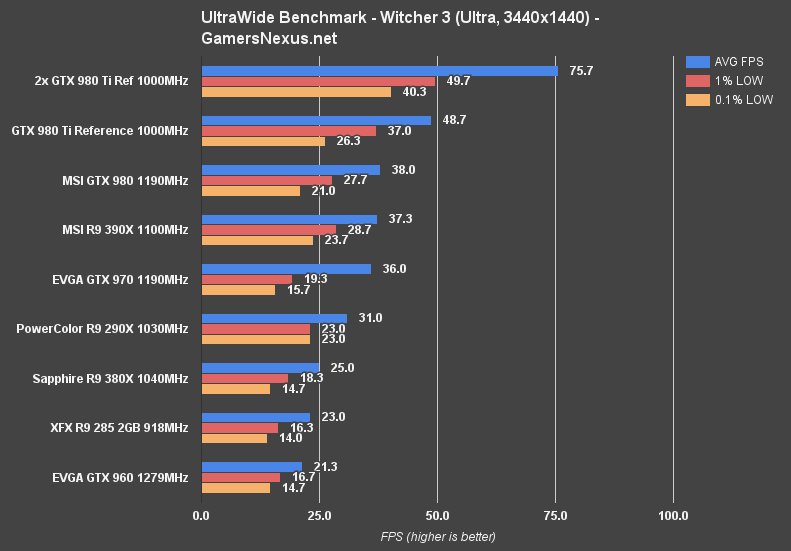

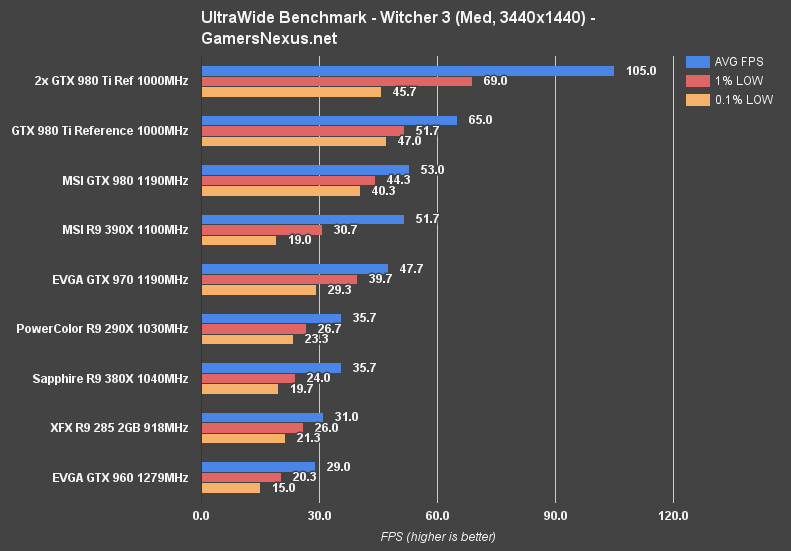

UltraWide 3440x1440 Benchmark – The Witcher 3 – GTX 980 Ti vs. 970, 390X, etc.

The Witcher 3 remains one of the most GPU-intensive games presently out, and it's got the visual fidelity to justify that workload. We disable AA, HBAO+, and HairWorks in The Witcher 3 to produce a more directly comparable test platform. SSAO is used instead of HBAO+.

The Witcher 3 shows anything from the 970 and 390X upward producing smooth framerates at 'medium' settings. 'Ultra' is largely out of reach for the lower-end cards, but somewhat doable on a 980 Ti. Settings tuning would be required for more consistent framerates at this resolution.

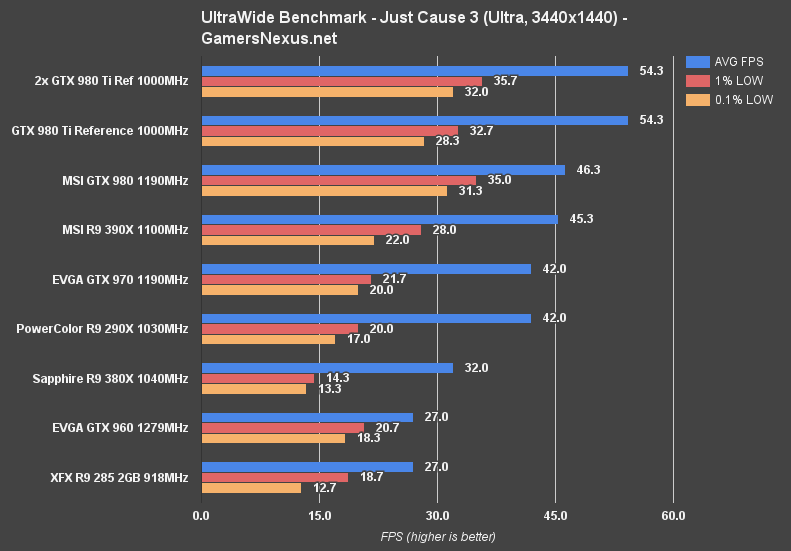

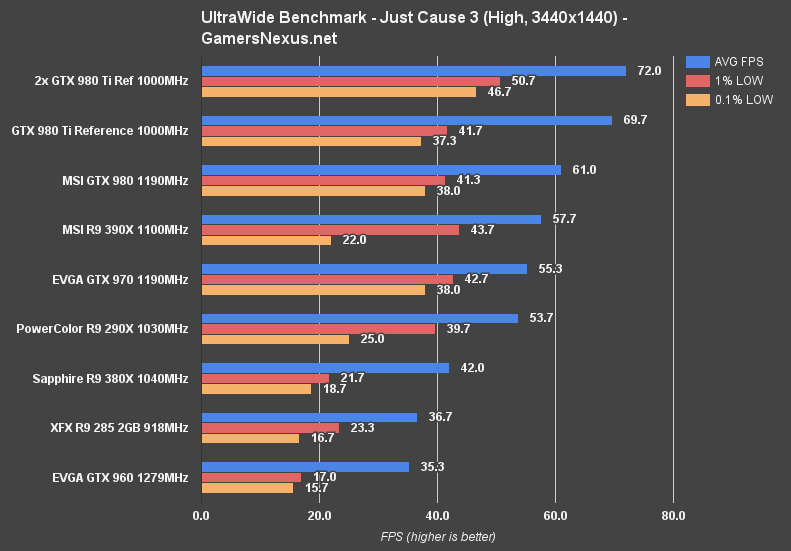

UltraWide 3440x1440 Benchmark – Just Cause 3 – GTX 980 Ti vs. 970, 390X, etc.

Just Cause 3, as we've said a few times now, has absolutely horrid multi-GPU scaling. The value of multi-GPU configurations is effectively zero in Just Cause 3; this was a major reason for our continued hesitance about SLI and CrossFire in our recent articles analyzing each.

On 'Ultra' settings, Just Cause 3 runs at about 54FPS on a 980 Ti reference card, ~46FPS on our MSI GTX 980, and ~45FPS on our MSI R9 390X. At these framerates, Just Cause 3 starts to look a little blurry and choppy, a problem exacerbated by FPS that varies heavily with combat engagement and current location. For more playable framerates, we look to 'high' settings at 3440x1440 and find that the 290X and up generally produce agreeable framerates.
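Framerate differences are sometimes easier to reason about as per-frame time budgets. Here's a quick conversion of the approximate 'Ultra' averages above (1000 ms divided by FPS), with a 60FPS reference added for comparison:

```python
def frametime_ms(fps: float) -> float:
    """Average time spent on each frame, in milliseconds."""
    return 1000.0 / fps

# Approximate Just Cause 3 'Ultra' averages at 3440x1440, plus a 60FPS reference.
for label, fps in [("GTX 980 Ti", 54), ("GTX 980", 46), ("R9 390X", 45), ("60FPS target", 60)]:
    print(f"{label:>12}: {fps} FPS = {frametime_ms(fps):.1f} ms/frame")
```

Even the 980 Ti sits nearly 2 ms per frame outside a 60FPS budget (16.7 ms), and the 390X is about 5.6 ms outside it.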

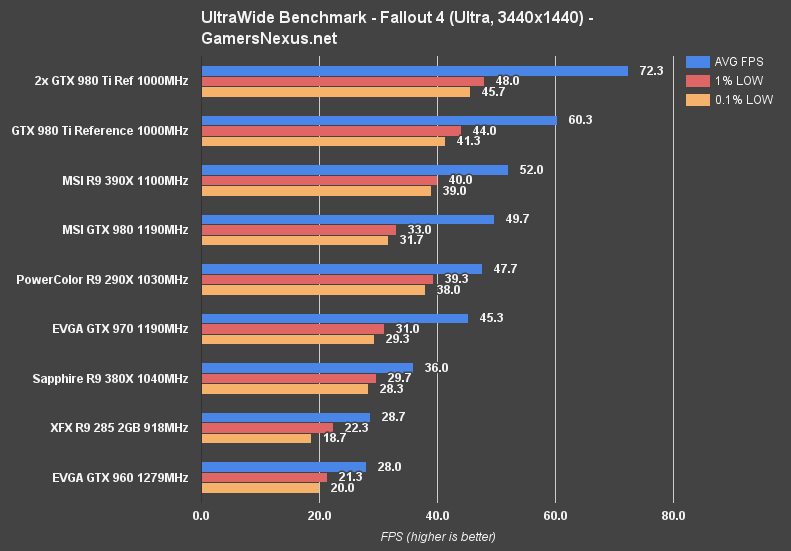

UltraWide 3440x1440 Benchmark – Fallout 4 – GTX 980 Ti vs. 970, 390X, etc.

As we've said a few times, Fallout 4 is one of the most shamefully built PC games in recent history. Its optimization is probably the worst we've seen from a triple-A developer in some time, and that hasn't changed much with the patches. Still, it's a popular title that seems a good fit for UltraWide, so we ran it through the bench with the latest patch (1.3).

Note that Fallout 4 locks itself to 60FPS (regardless of display refresh rate) as a result of Bethesda's odd decision to tie game physics and game time to the framerate. We unlock FPS for absolute performance comparisons, which show us which cards would pull ahead in intensive load environments.
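To illustrate why framerate-tied simulation forces a cap, here's a generic toy example (not Bethesda's engine code). One object advances a fixed step per frame. The other scales its movement by each frame's duration (delta time). Only the frame-tied object speeds up when the framerate rises.

```python
def frame_tied_distance(fps: int, seconds: float, step_per_frame: float = 1.0) -> float:
    """Moves a fixed step every frame, so more frames per second means faster motion."""
    return step_per_frame * int(fps * seconds)

def delta_time_distance(fps: int, seconds: float, speed_per_sec: float = 60.0) -> float:
    """Scales each frame's movement by that frame's duration, so speed is FPS-independent."""
    dt = 1.0 / fps
    distance = 0.0
    for _ in range(int(fps * seconds)):
        distance += speed_per_sec * dt
    return distance

for fps in (60, 120):
    print(f"{fps:>3} FPS: frame-tied {frame_tied_distance(fps, 1.0):.0f} units, "
          f"delta-time {delta_time_distance(fps, 1.0):.1f} units")
```

At 120FPS the frame-tied object covers twice the distance in the same second, while the delta-time object doesn't change. Capping at 60FPS keeps a frame-tied simulation at its intended speed; we lift the cap only to measure raw GPU throughput.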

Cards from the GTX 970 up are reasonably playable at 'ultra' in Fallout 4. SLI performance is poor enough that we would again advise against dual-GPU from a value standpoint, but a single 980 Ti or R9 390X performs exceedingly well by Fallout 4's standards.

Another note: We ran a limited test (meant for internal analysis only) at 'high' settings and observed approximately a 6% performance improvement over 'ultra'.

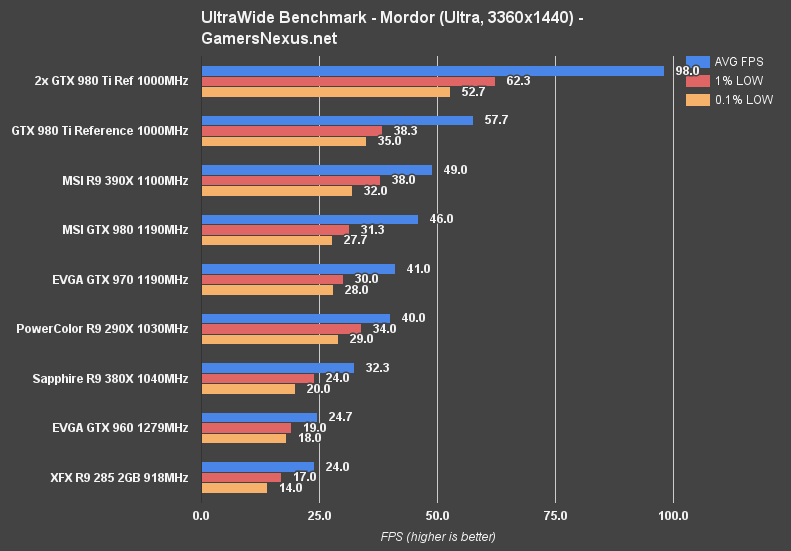

UltraWide 3440x1440 Benchmark – Shadow of Mordor – GTX 980 Ti vs. 970, 390X, etc.

Shadow of Mordor is the odd one out. We've always observed resolution quirks with the way Mordor scales from the native desktop resolution rather than running a user-defined resolution (which is how it should work), but UltraWide seems especially anomalous. Our desktop was set to 3440x1440, and Mordor does query Windows for the resolution, but the game maxed out at 3360x1440. Odd. There was also a slight 'stretching' effect in-game, but we'll talk about that more in our Acer Predator review.
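Interestingly, 3360x1440 reduces to exactly 7:3 (a literal 21:9), while the panel's native 3440x1440 is slightly wider. Suppose the 3360-pixel-wide image is scaled to fill the panel's full 3440-pixel width. That's our assumption, not something we confirmed. In that case the horizontal stretch works out to about 2.4%, which would be consistent with the slight widening we saw:

```python
from math import gcd

NATIVE = (3440, 1440)  # panel / desktop resolution
MORDOR = (3360, 1440)  # highest resolution Shadow of Mordor offered us

def reduced(width: int, height: int) -> tuple[int, int]:
    """Reduce a resolution to its lowest-terms aspect ratio."""
    d = gcd(width, height)
    return width // d, height // d

# Assumption: the game's output is scaled horizontally to the panel's full width.
stretch = NATIVE[0] / MORDOR[0]
print(f"Mordor ratio {reduced(*MORDOR)}, native ratio {reduced(*NATIVE)}")
print(f"horizontal stretch if scaled to fill: {(stretch - 1) * 100:.1f}%")
```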

As for performance, 'Ultra' settings place the SLI 980 Tis just below 100FPS (pretty good scaling, actually), a marked gain over the 57FPS of the single-card solution. 1% lows and 0.1% lows don't scale quite as well, but are high enough to be more-or-less irrelevant.
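A common way to quantify multi-GPU scaling is the speedup over one card divided by the number of cards. The SLI figure above is “just below 100FPS,” so this sketch treats 100FPS as an upper bound rather than an exact measurement:

```python
def scaling_efficiency(multi_fps: float, single_fps: float, gpus: int) -> float:
    """Fraction of ideal linear scaling achieved (1.0 = every GPU adds a full card's worth)."""
    return (multi_fps / single_fps) / gpus

# Shadow of Mordor, 'Ultra', 3440x1440: single 980 Ti at 57FPS; SLI just below 100FPS.
upper = scaling_efficiency(100, 57, 2)
print(f"SLI speedup < {100 / 57:.2f}x, efficiency < {upper:.0%}")
```

That puts SLI scaling here just under 1.75x, or a bit below 88% of ideal. The same formula makes Just Cause 3's near-zero multi-GPU gain easy to express as an efficiency barely above 50%.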

Conclusion: Best Graphics Card for UltraWide Gaming?

What is the minimum graphics card for UltraWide gaming?

For those willing to sacrifice higher settings in exchange for a wider display resolution, a lot of games can still be managed at 3440x1440 with GTX 960 and R9 380X cards. Games like DiRT Rally and the Total War series (not benchmarked here, though it's been played off-hours in the office) scale well on mid-range cards at higher resolutions; it's just a matter of turning off some features, often disabling AA entirely, and coping with reduced overall fidelity.

Anything more demanding will require higher-end hardware. The GTX 970 and R9 390X perform reasonably well across most of the titles tested above, but even they struggle with games like The Witcher 3, where only the top end can consistently approach 60FPS.

As with most of these questions, the ultimate answer is “it depends.” If most of your day-to-day tasks involve production, then an UltraWide with a less powerful GPU may still be acceptable; dropping settings or resolution for gaming is an agreeable compromise if work is the display's primary use case. Less demanding games or reduced graphics settings also make the 3440x1440 resolution more generally usable. High-end, triple-A titles that scale poorly to lower settings, like Fallout 4 and AC: Syndicate, will force high-end GPU purchases.

UltraWides like our test unit have grown on us enormously over the past two months of use. Gaming or not, we'd strongly recommend considering one for anyone who spends their day deep in production work.

Editorial & Testing: Steve “Lelldorianx” Burke

Video & Video Editing: Andrew “ColossalCake” Coleman