One video card to the next. We just reviewed MSI's R9 390X Gaming 8GB card at the mid-to-high range, the A10-7870K APU at the low-end, and now we're moving on to nVidia's newest product: The GeForce GTX 950.

NVidia's new GTX 950 is priced at $160, but scales up to $180 for some pre-overclocked models. The ASUS Strix GTX 950 that we received for testing is a $170 unit. These prices, then, land the GTX 950 in an awkward bracket; the GTX 750 Ti holds the budget class firmly below it and the R9 380 & GTX 960 hold the mid-range market above it.

The new GeForce GTX 950 graphics card uses the Maxwell architecture – the same GM206 found in the GTX 960 – and carries 2GB of GDDR5 memory on a 128-bit interface. More on that momentarily. The big marketing point for nVidia has been reduced input latency for MOBA games, something that's being pushed through GeForce Experience (GFE) in the immediate future.

This review benchmarks nVidia's new GeForce GTX 950 graphics card in the Witcher 3, GTA V, and other games, ranking it against the R9 380, GTX 960, 750 Ti, and others.

NVidia GTX 950 Specs & ASUS Strix 950 Specs

| | GTX 950 | GTX 950 Strix | GTX 960 | GTX 750 Ti |
| --- | --- | --- | --- | --- |
| Fab Process | 28nm | 28nm | 28nm | 28nm |
| GPU | GM206 | GM206 | GM206 | GM107 |
| Transistor Count | 2.94B | 2.94B | 2.94B | 1.87B |
| Graphics Processing Clusters | 2 | 2 | 2 | 1 |
| Streaming Multiprocessors | 6 | 6 | 8 | 5 |
| CUDA Cores | 768 | 768 | 1024 | 640 |
| TMUs | 48 | 48 | 64 | 40 |
| ROPs | 32 | 32 | 32 | 16 |
| Base Clock (GPU) | 1024MHz | 1165MHz | 1126MHz | 1020MHz |
| Boost Clock (GPU) | 1188MHz | 1355MHz | 1178MHz | 1085MHz |
| Memory Speed | 6.6Gbps | 6.6Gbps | 7.0Gbps | 5.4Gbps |
| L2 Cache Size | 1024K | 1024K | 1024K | 2048K |
| VRAM | 2GB GDDR5 | 2GB GDDR5 | 2GB, 4GB | 2GB |
| Memory Interface | 128-bit | 128-bit | 128-bit | 128-bit |
| Memory Bandwidth | 105.6GB/s | 105.6GB/s | 112.16GB/s | 86.4GB/s |
| Texture Filter Rate | 49.2GT/s | 49.2GT/s | 72.1GT/s | 43.4GT/s |
| Power Connectors | 1 x 6-pin | 1 x 6-pin | 1 x 6-pin | None |
| TDP | 90W | 90W | 120W | 60W |
| Display Connectors | 3 x DP 1.2, 1 x HDMI 2.0, 1 x DL DVI | 3 x DP 1.2, 1 x HDMI 2.0, 1 x DL DVI | 3 x DP 1.2, 1 x HDMI 2.0, 1 x DL DVI | 1 x DP 1.2, 1 x HDMI, 1 x DVI-I |
| Form Factor | Dual Slot | Dual Slot | Dual Slot | Dual Slot |
| Price | $160.00 | $170.00 | $200 (2GB), $240 (4GB) | $120.00 |

The reference design for nVidia's GTX 950 calls for a 1024MHz base clock and 1188MHz boost clock, but pre-overclocked options will be available in abundance at launch. We'll discuss one of those below.
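
For readers who want to sanity-check the spec table, the memory bandwidth and texture filter rate figures can be approximated from the other rows. The sketch below is our own illustration, not a vendor tool: the function names are made up for this example, and it assumes the usual peak-rate formulas (per-pin data rate times bus width for bandwidth, one texel per TMU per clock for texture rate).

```python
def memory_bandwidth_gbs(speed_gbps: float, bus_width_bits: int) -> float:
    """Peak memory bandwidth in GB/s: per-pin rate (Gbps) x bus width (bits) / 8 bits per byte."""
    return speed_gbps * bus_width_bits / 8


def texture_rate_gts(tmus: int, clock_mhz: float) -> float:
    """Peak texture filter rate in GT/s, assuming one texel per TMU per clock."""
    return tmus * clock_mhz / 1000


# Inputs taken from the spec table above.
print(f"GTX 950 bandwidth:    {memory_bandwidth_gbs(6.6, 128):.1f} GB/s")
print(f"GTX 750 Ti bandwidth: {memory_bandwidth_gbs(5.4, 128):.1f} GB/s")
print(f"GTX 950 texture rate: {texture_rate_gts(48, 1024):.1f} GT/s (base clock)")
print(f"GTX 960 texture rate: {texture_rate_gts(64, 1126):.1f} GT/s (base clock)")
```

These are theoretical peaks only; they say nothing about how a card behaves in an actual game.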

At $160, the GTX 950 is priced most directly against AMD's $150 R7 370 (which we don't have – but is effectively identical to the R9 270X in performance, a card we do have). The next closest devices of note are the GTX 960 2GB and R9 380 2GB for an extra $40 or the GTX 750 Ti for a $30-$40 price reduction. The 950 rests firmly between the two 'sweet spot' price-points, making for awkward market positioning.

ASUS Strix Differences

There's only so much that a board partner can do to differentiate from other options. They're all sourcing the GPU from the same manufacturer, after all.

For ASUS' Strix video cards, the difference is made in the form of cooling and noise focus. The Strix deploys two quiet fans atop a large, aluminum fin heatsink. Two large, 10mm copper heatpipes run through the sink and to the silicon. High-quality chokes and capacitors reinforce the VRM and power design, mitigating the chance for coil whine or choke 'hum' when under load. ASUS rates its capacitors for a 50k hour lifespan.

More noteworthy, though, is the substantial pre-overclock over the reference GTX 950. The reference clock of 1024 / 1188MHz (boost) is quickly outpaced by ASUS' 1165 / 1355MHz (boost) pre-OC. This aggressive pre-overclock increases thermals, but the Strix & DirectCU cooler should take care of that. We'll look into this in the thermal benchmarking.

GM206 Maxwell Architecture

GM206 is old, as is Maxwell, at this point. We discussed Maxwell in greater depth in our GTX 980 review. We'll recap a few key features and republish some of our previous content.

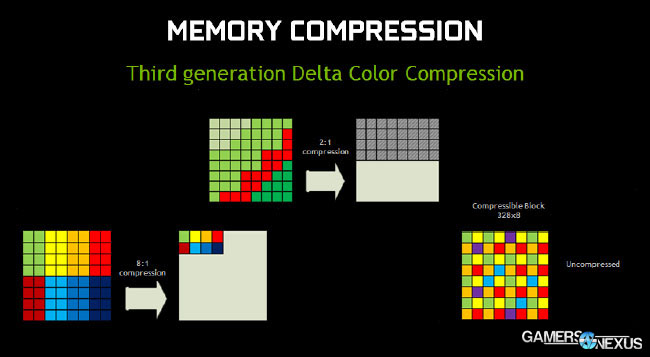

Maxwell focuses on power efficiency and core efficiency, something nVidia was criticized for on its Fermi GPUs. Maxwell's primary method for increasing efficiency is to perform more on-card compression and minify operations, like Delta Color Compression (below); Maxwell cores are approximately 40% more efficient than Kepler cores when it comes strictly to gaming tasks, accounting for the smaller memory interface by more intelligently and heavily compressing data.

This gaming efficiency gain comes at a reduction to production performance, though, as the raw core count and memory interfaces have diminished against Kepler heavyweights. For gamers, the optimization is nothing but good.

Pasted from our GTX 980 review:

Delta color compression is a specific process used when compressing data transferred to and from the framebuffer (GPU memory). Bandwidth is not “free;” as with all components, it’s critical to performance to ensure data is compressed to make the best use of buses, hopefully in a lossless fashion so that quality is not degraded.

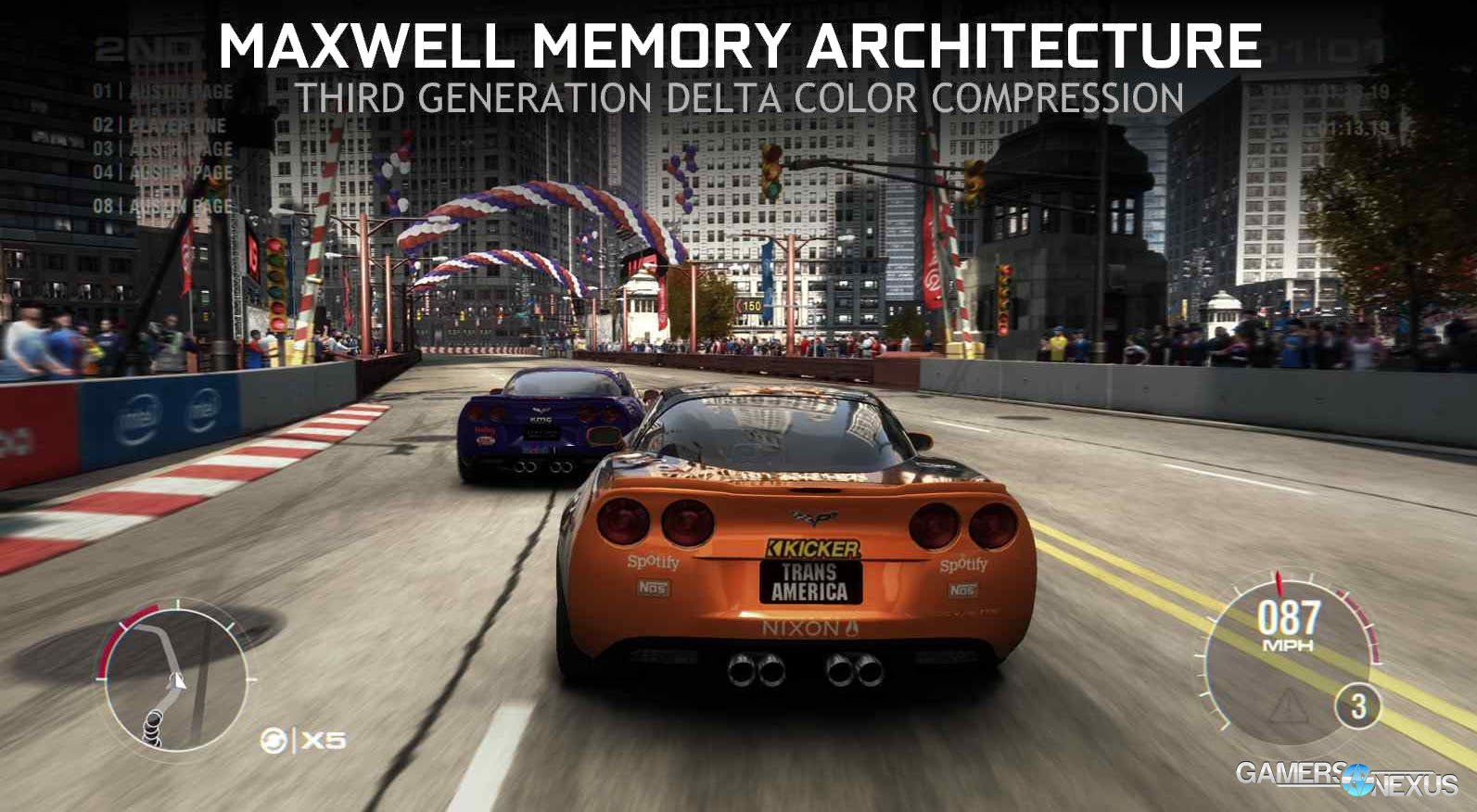

Delta color compression improves memory efficiency through new compression technology. By compressing the data more heavily, less bandwidth is required and more data can be crammed down the pipe, ultimately resulting in a better-looking experience. Let’s take an example from GRID: Autosport, which we’ve benchmarked in the past:

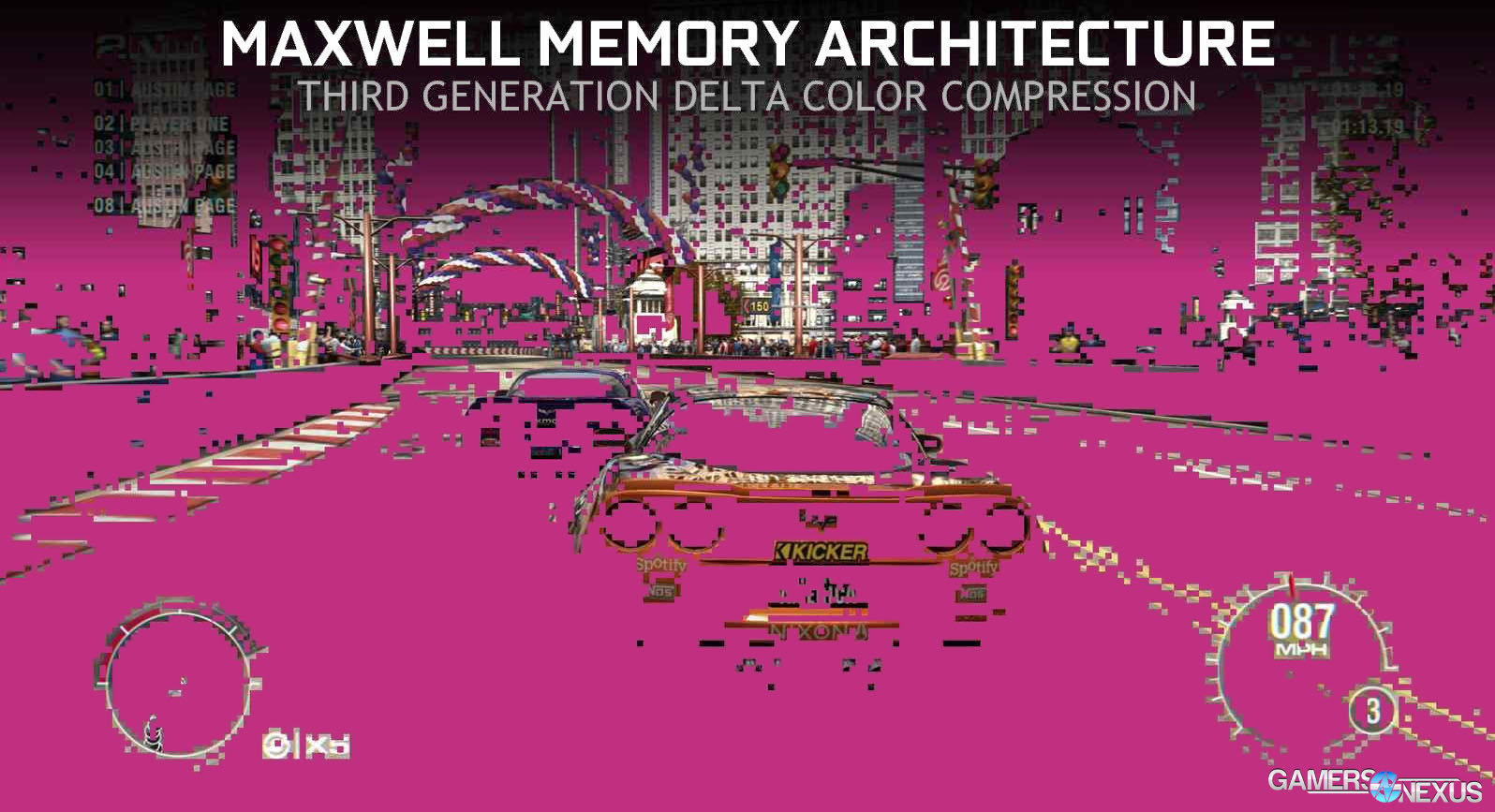

This frame (n) has already been rendered by the GPU. The GPU already knows what’s present in the frame (having just drawn it) and can use this information when analyzing the next frame (n+1) in the gameplay sequence. Instead of drawing the absolute color values all over again in the next frame, we can use color compression to look at the delta (change) between values in each frame. The GPU looks at the next frame in the sequence and, instead of seeing our above image, sees something more like this:

The pink highlight shows the change in color between frames – either an object was moving too much, visually changed in appearance, or was granular enough to require additional work by the GPU, with each of these showing in non-highlighted appearance.

To recap: Analyzing the color change between successive frames minimizes demand on the GPU by avoiding exact (absolute) color value draws, instead opting for value change from base. NVidia’s whitepaper indicates that this process reduces bandwidth saturation by 17-18% on average, meaning we’ve got more memory bandwidth freed-up for other tasks. This also contributes to the effective memory speed: Although specified at 7Gbps GDDR5 memory speed, the GTX 980, 970, and other similarly-equipped Maxwell devices will perform equivalently to 9Gbps effective throughput, from what we’re told by nVidia.

In shorter form, you’d need to run DRAM at 9Gbps on Kepler in order to achieve the same effective bandwidth as 7Gbps on Maxwell.

(End re-published content).
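
The idea of storing changes rather than absolute values can be shown with a toy example. This is only a sketch of generic delta encoding as described in the passage above; it is not nVidia's hardware implementation, and the pixel values and function names are invented for illustration.

```python
def delta_encode(previous, current):
    """Store each value as its change from the matching value in `previous`."""
    return [c - p for p, c in zip(previous, current)]


def delta_decode(previous, deltas):
    """Rebuild the current values losslessly from the base values plus deltas."""
    return [p + d for p, d in zip(previous, deltas)]


# Made-up 8-bit channel values for a handful of pixels in two successive frames.
frame_n = [200, 200, 201, 90, 91, 90, 30, 30]
frame_n1 = [200, 200, 201, 90, 95, 92, 30, 30]

deltas = delta_encode(frame_n, frame_n1)
unchanged = deltas.count(0)

print(deltas)
print(f"{unchanged} of {len(deltas)} values unchanged")

# Lossless: decoding the deltas against the base frame restores the new frame.
assert delta_decode(frame_n, deltas) == frame_n1
```

Most of the deltas are zero or small, which is what makes them cheaper to represent than full color values. A real compressor would then pack those small numbers into fewer bits, a step this sketch leaves out.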

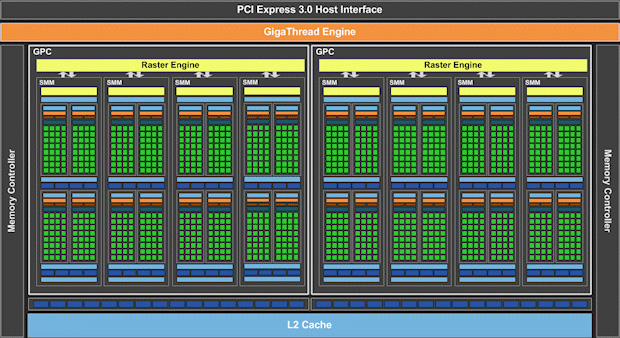

The original GM206 (GTX 960) was divided into two GPCs with eight streaming multiprocessors (SMs). Each SM is home to 128 CUDA cores. This netted 1024 CUDA cores on the GTX 960. For the GTX 950, GM206 has been simplified for cost and power reasons – the GTX 950 GM206 hosts just six SMs (still two GPCs), bringing core count down to 768.

For comparison purposes, the first generation Maxwell card – the GTX 750 Ti – shipped with one GPC and five SMs, resulting in just 640 CUDA cores. To this day, the GTX 750 Ti is still a strong performer for gamers interested in titles that may not be as graphics intensive as, say, the Witcher 3 or GTA V.

MOBA Input Latency on the GTX 950 – A New Marketing Direction

This is nVidia's co-marketing attempt with the GTX 950. Although diminishing MOBA input latency is going to be something you read about a lot today, it is critical to note that the advancements made with input latency are not exclusive to the GTX 950. Everything you're about to read is being pushed through GFE, meaning all modern Maxwell cards will benefit from the improvements. You will not have to “downgrade” a higher-end Maxwell card to the GTX 950 in order to reduce input latency.

Here's what we're talking about:

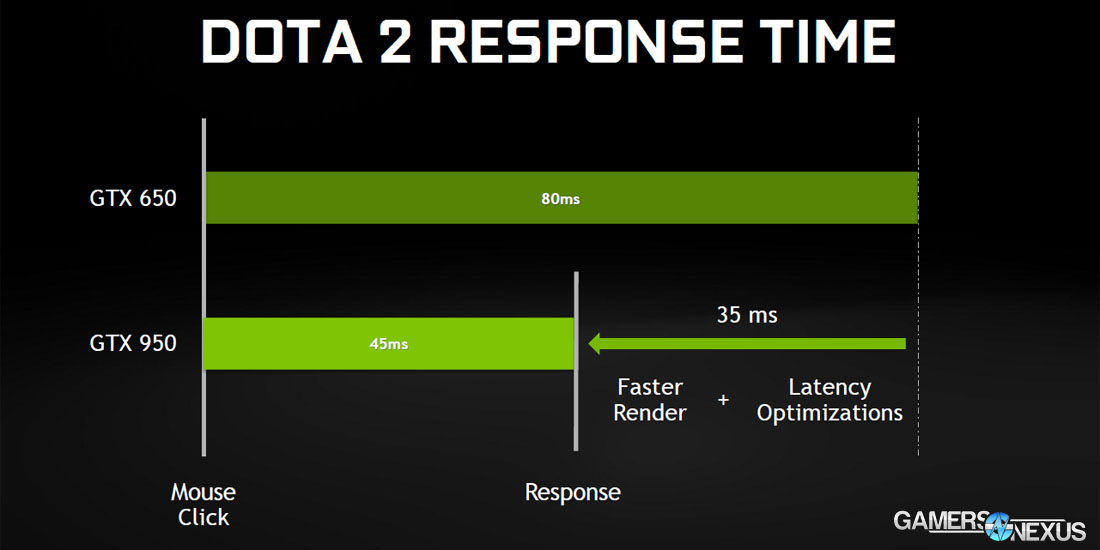

The GTX 650 card, a relative old-timer, exhibited an input latency of approximately 80ms in DOTA2. When we say “input latency,” we're talking about the delay between a physical button press and the execution of that action within the game engine. With a GTX 650, then, a mouse click takes about 80ms to have its in-game impact in DOTA2. Note that we specify in-game impact as opposed to observed impact, which would add additional human processing time.

Specifically looking at the GTX 950, we're told that input latency has been reduced to 45ms by way of driver and pipeline optimizations.

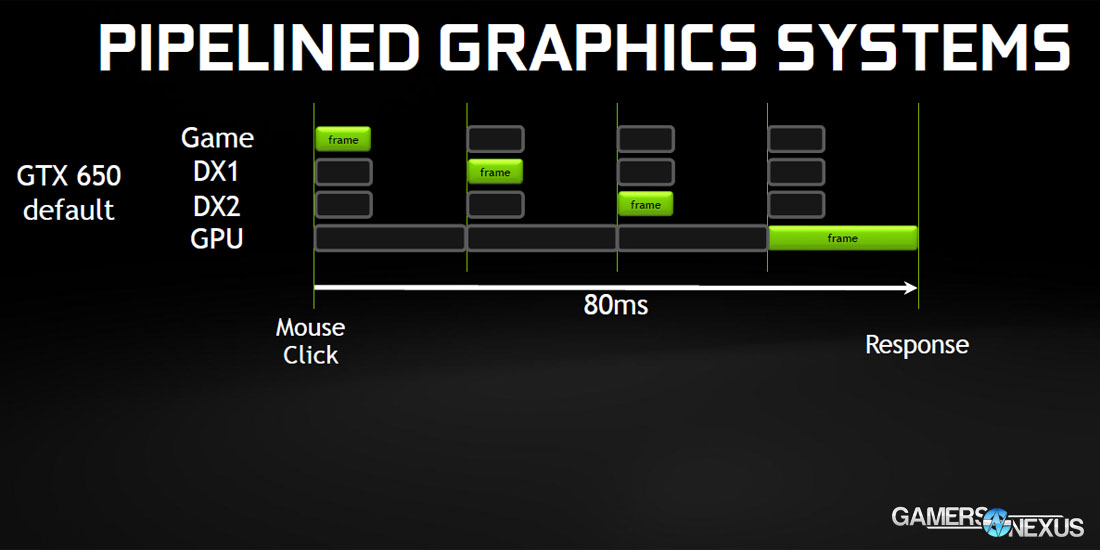

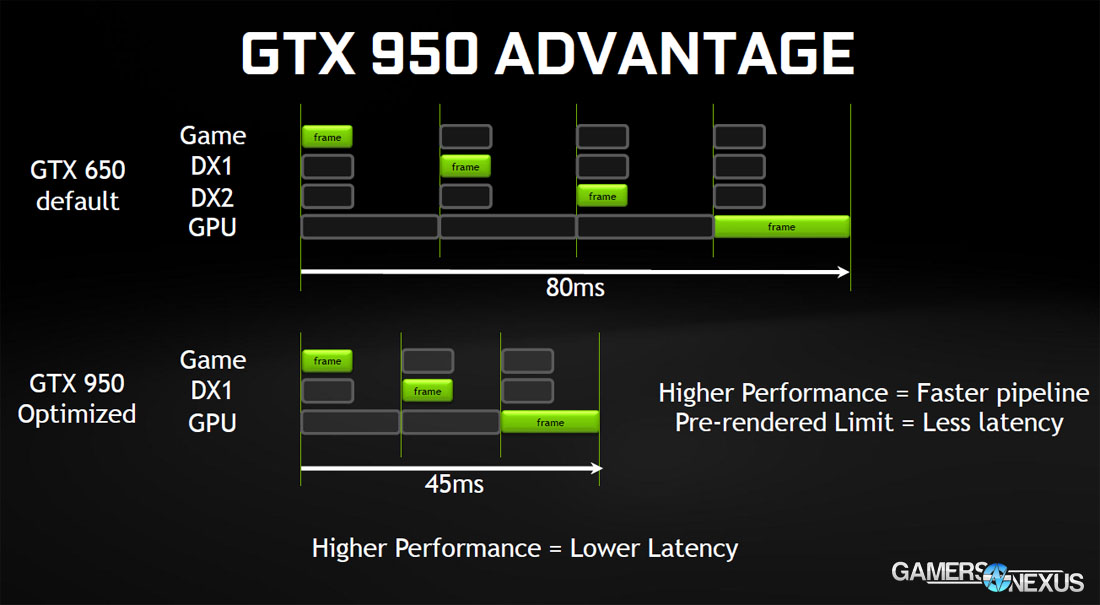

The simplified graphics pipeline is limited in its simultaneous actions. Buffers get filled and must be cleared before progress can be made. The game engine passes its active frame to the API – DirectX 11 or 12, in this case – which performs further scene processing before sending the frame along to the GPU. The GPU is generally the longest pole in the pipe, but if you look closely at the pipeline, you'll see that there are two frames simultaneously in transit to the GPU – DX1 and DX2. These frames are prebuffered (or double-buffered) for output and improved smoothness and performance of some games, but aren't necessary in all games.

By halving the prebuffer to just one frame, input latency can be reduced substantially without a noticeable detriment to gaming performance for some games. Those games, nVidia tells us, just happen to be the most competitive on the market: DOTA2, League of Legends, and Heroes of the Storm. In more engrossing games that offer more geometric complexity and demand more of the GPU, cutting the prebuffered frames in half could reduce smoothness and diminish depth of experience. For a MOBA, though, the latency reduction outweighs any marginal differences in the (already simplistic) visual department.

We previously discussed prebuffered frames in Watch Dogs, suggesting that readers with severe performance and mouse input issues drop the setting to '1.'
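
To put rough numbers on the prebuffer change, here is a toy model. It assumes each queued frame waits about one frame time before reaching the GPU, which is our simplification and not nVidia's measurement method; the function name is hypothetical.

```python
def queue_latency_ms(prebuffered_frames: int, fps: float) -> float:
    """Latency added by queued frames, assuming each one waits a full frame time."""
    frame_time_ms = 1000.0 / fps
    return prebuffered_frames * frame_time_ms


for fps in (60, 120):
    saved = queue_latency_ms(2, fps) - queue_latency_ms(1, fps)
    print(f"{fps} FPS: ~{saved:.1f} ms saved going from 2 queued frames to 1")
```

Under this model, the saving is one frame time, so it shrinks as framerate climbs. Real pipelines have other sources of delay (input polling, the display itself) that this sketch ignores.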

Test Methodology

We tested using our updated 2015 Single-GPU test bench, detailed in the table below. Our thanks to supporting hardware vendors for supplying some of the test components.

The latest AMD Catalyst drivers (15.7.1) were used for testing. NVidia's 355.65 drivers were used for testing. Game settings were manually controlled for the DUT. All games were run at 'ultra' presets, with the exception of The Witcher 3, where we disabled HairWorks completely, disabled AA, and left SSAO on. GRID: Autosport saw custom settings with all lighting enabled. GTA V used two types of settings: Those with Advanced Graphics ("AG") on and those with them off, acting as a VRAM stress test.

Each game was tested for 30 seconds in an identical scenario, then repeated three times for parity.

Average FPS, 1% low, and 0.1% low times are measured. We do not measure maximum or minimum FPS results as we consider these numbers to be pure outliers. Instead, we take an average of the lowest 1% of results (1% low) to show real-world, noticeable dips; we then take an average of the lowest 0.1% of results for severe spikes. Anti-Aliasing was disabled in all tests except GRID: Autosport, which looks significantly better with its default 4xMSAA. HairWorks was disabled where prevalent. Manufacturer-specific technologies were used when present (CHS, PCSS).
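
As a rough illustration of the 1% low and 0.1% low metrics described above, the sketch below averages the lowest slice of a list of per-frame FPS samples. The sample data is synthetic and the helper names are ours; how the samples are captured and converted in our actual tooling is not shown here.

```python
def low_average(fps_samples, fraction):
    """Average of the lowest `fraction` of samples (always at least one sample)."""
    ordered = sorted(fps_samples)
    count = max(1, int(len(ordered) * fraction))
    return sum(ordered[:count]) / count


def summarize(fps_samples):
    """Return average FPS plus the 1% low and 0.1% low averages."""
    return {
        "avg": sum(fps_samples) / len(fps_samples),
        "low_1pct": low_average(fps_samples, 0.01),
        "low_0_1pct": low_average(fps_samples, 0.001),
    }


# Synthetic run: mostly 60 FPS, a few dips to 40, and one severe spike to 20.
samples = [60.0] * 990 + [40.0] * 9 + [20.0]
print(summarize(samples))
```

The point of the two low metrics is visible in the synthetic data: the average barely moves, while the 1% and 0.1% figures expose the dips a player would actually notice.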

Thermals and power draw were both measured using our secondary test bench, which we reserve for this purpose. The bench uses the below components. Thermals are measured using AIDA64. We execute an in-house automated script to ensure identical start and end times for the test. 3DMark FireStrike Extreme is executed on loop for 25 minutes and logged. Parity is checked with GPU-Z.

| GN Test Bench 2015 | Name | Courtesy Of | Cost |
| --- | --- | --- | --- |
| Video Card | This is what we're testing! | Various | - |
| CPU | Intel i7-4790K CPU | CyberPower | $340 |
| Memory | 32GB 2133MHz HyperX Savage RAM | Kingston Tech. | $300 |
| Motherboard | Gigabyte Z97X Gaming G1 | GamersNexus | $285 |
| Power Supply | NZXT 1200W HALE90 V2 | NZXT | $300 |
| SSD | HyperX Predator PCI-e SSD | Kingston Tech. | TBD |
| Case | Top Deck Tech Station | GamersNexus | $250 |
| CPU Cooler | Be Quiet! Dark Rock 3 | Be Quiet! | ~$60 |

A Note on Validating Input Latency Metrics

Testing input latency on the GTX 950 would be a fun project – but we can't do it right now. Input latency testing requires a hardware middle solution (like an Arduino board) to sit between the input simulation and the receiving software.

This is something we're working on developing in the immediate future and hope to deploy for GPU benchmarking. As of now, we cannot independently validate nVidia's claims of latency reduction, nor can we test the competing hardware's own input latencies.

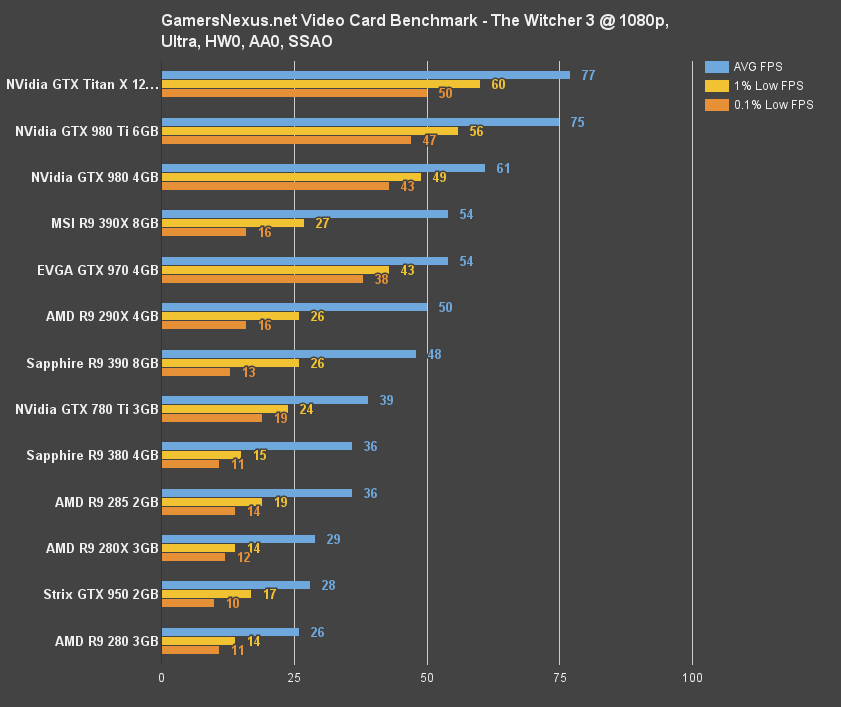

GTX 950 vs. 960, 380, 750 Ti Benchmark – The Witcher 3

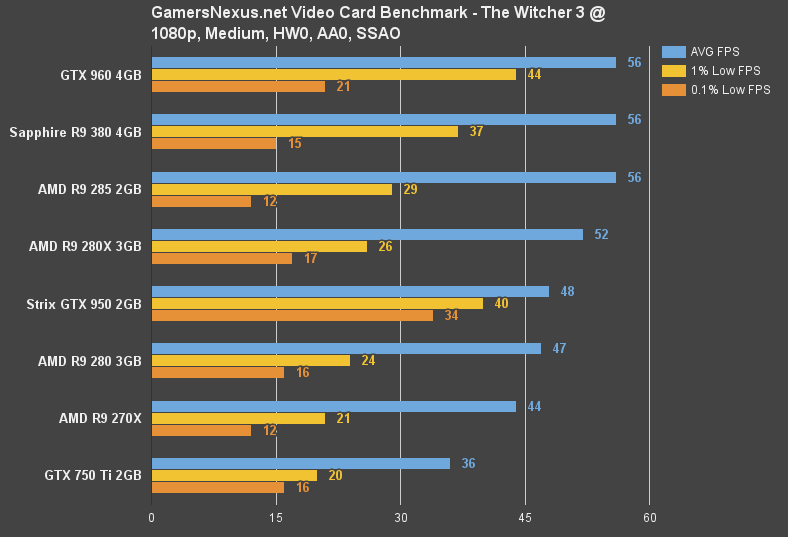

The GTX 950 isn't particularly playable in the Witcher 3 unless we drop to 'medium' settings, at which point its 48FPS average is barely passable. Further tweaks – follow our optimization guide for that – would allow a higher average FPS.

At medium settings, the GTX 950 is comparable to the R9 280 in average FPS, but outperforms the R9 280 handily in 1% and 0.1% low frame metrics. We see the R9 270X (about where the R7 370 would rest) fall behind the GTX 950 substantially in 1% and 0.1% low metrics; the average FPS delta is ~8.7%, favoring the GTX 950.

The GTX 960, R9 285, and AMD R9 380 all perform at an arguably playable 56FPS average, a ~15% gain over the GTX 950.

Against the GTX 750 Ti, the gap widens (28.5%) such that settings would have to be driven into the floor to conjure fluid frame delivery.

The Witcher 3 is effectively unplayable at higher settings.

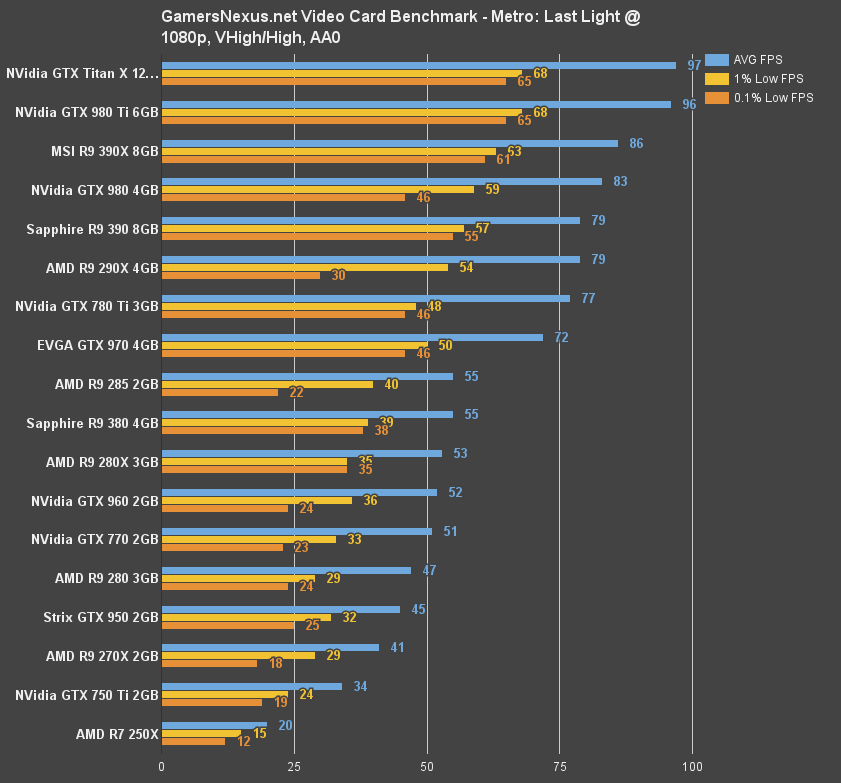

GTX 950 vs. 960, 380, 750 Ti Benchmark – Metro: Last Light

We ran Metro at the usual Very High / High configuration (1080p), finding the GTX 950 to hold a 9% advantage over the R9 270X and a 27.8% advantage over the GTX 750 Ti. Looking to the +$40 range, the GTX 960 and R9 280X experience a roughly 16% gain.

Again, the GTX 950 lands squarely between its two flanking price categories – not wholly surprising. One place the GTX 950 does continually excel, though, is its low frametime performance.

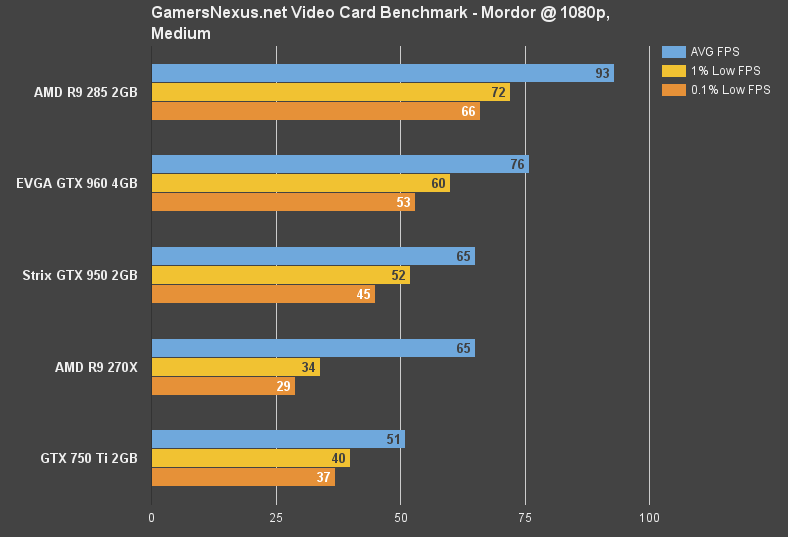

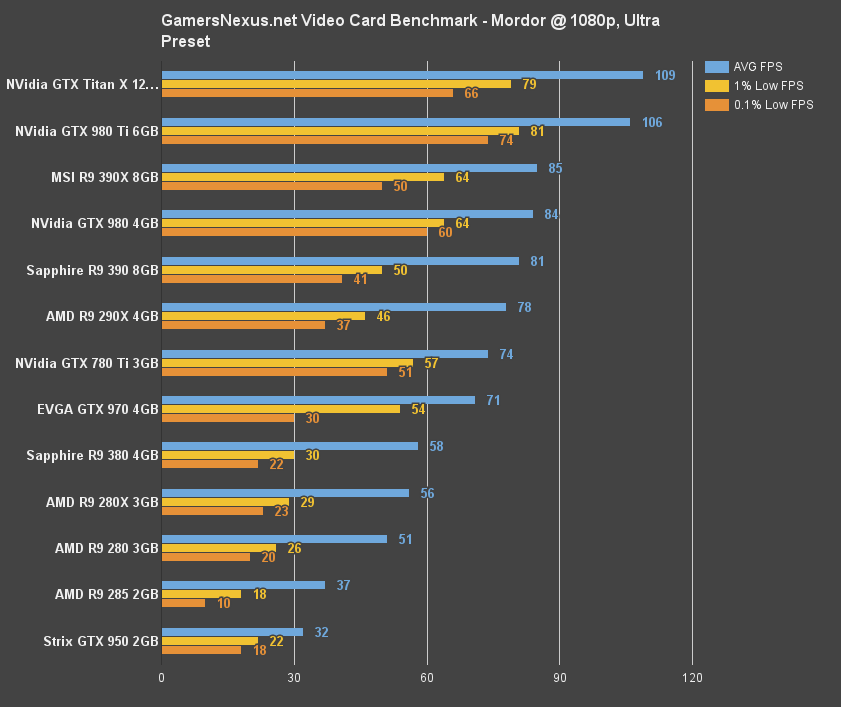

GTX 950 vs. 960, 380, 750 Ti Benchmark – Shadow of Mordor

Shadow of Mordor is another game that we had to tweak for the bench. We normally run Mordor at the “Ultra” preset for GPU benchmarking, but found the GTX 950's 32FPS average performance to be unsatisfactory. Hoping to more accurately create a user scenario, we dropped the settings to 'medium' and retested a suite of similarly-priced cards.

The R9 285 flies past everyone at 93FPS average, but that's not to say the GTX 960 doesn't make its own efforts to leave the 950 in the dust (+15%). The GTX 950 is capable of playing Shadow of Mordor at medium / 1080p without sacrificing fluid gameplay. The R9 270X, although similarly performant in average metrics, dips and drags with its 29FPS 0.1% low.

GTX 950 vs. 960, 380, 750 Ti Benchmark – Far Cry 4

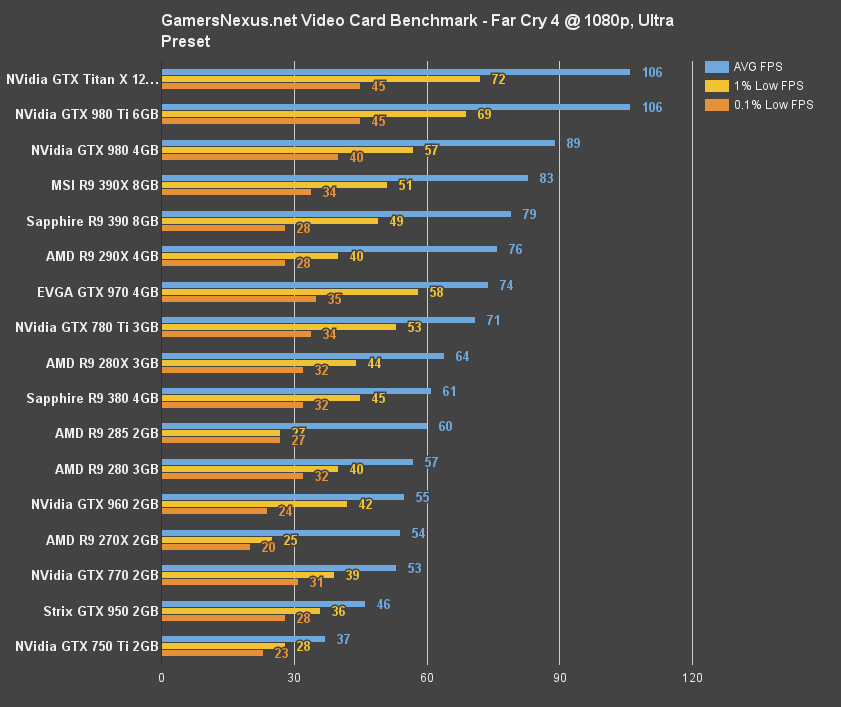

Far Cry 4 fronts similar performance differentials between the 960, 750 Ti, and new 950, but the R9 270X outperforms the GTX 950 admirably in this instance.

GTX 950 vs. 960, 380, 750 Ti Benchmark – GTA V

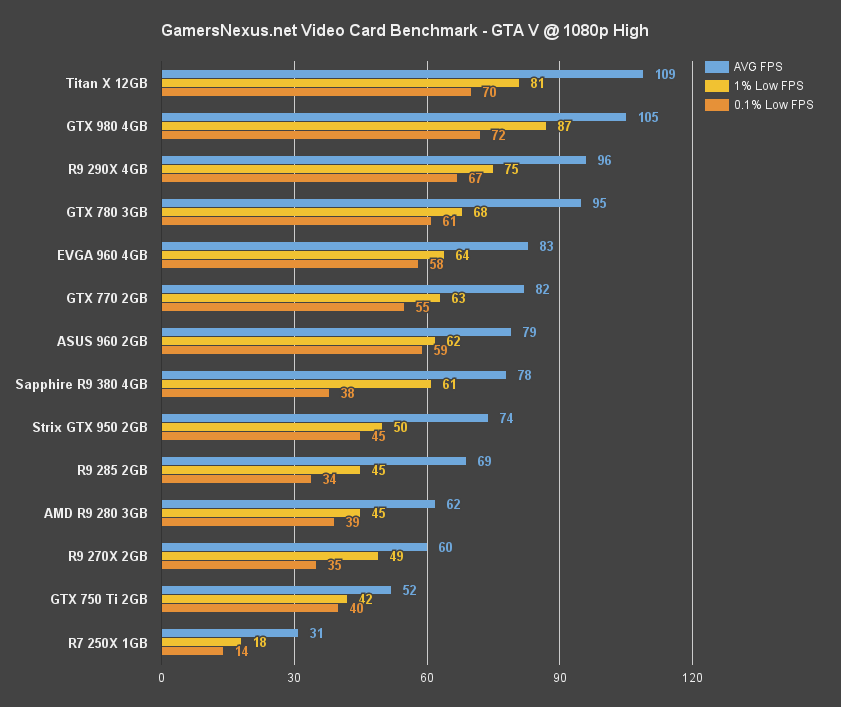

“High” settings permit fluid gameplay on the GTX 950 in GTA V. We see an FPS output of 79 (average) against the 960's 83 and 380's 78. Not a bad delta. The GTX 750 Ti pushes 52FPS – just shy of the golden '60' metric, but passable for most players – and provides the GTX 950 a proving ground for its market positioning.
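
For anyone wanting to turn the averages quoted above into percentage gaps, a small helper does the job. This is a generic percent-change calculation, not part of our bench tooling, and the function name is ours; the inputs are the "High" GTA V averages from the paragraph above.

```python
def percent_delta(baseline: float, other: float) -> float:
    """Percentage change of `other` relative to `baseline`."""
    return (other - baseline) / baseline * 100


# Average FPS at "High" settings in GTA V, as quoted above.
gtx_950, gtx_960, r9_380, gtx_750_ti = 79, 83, 78, 52

print(f"GTX 960 vs. GTX 950:    {percent_delta(gtx_950, gtx_960):+.1f}%")
print(f"R9 380 vs. GTX 950:     {percent_delta(gtx_950, r9_380):+.1f}%")
print(f"GTX 950 vs. GTX 750 Ti: {percent_delta(gtx_750_ti, gtx_950):+.1f}%")
```

Note that the result depends on which card is treated as the baseline, so it is worth stating the direction whenever a percentage is quoted.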

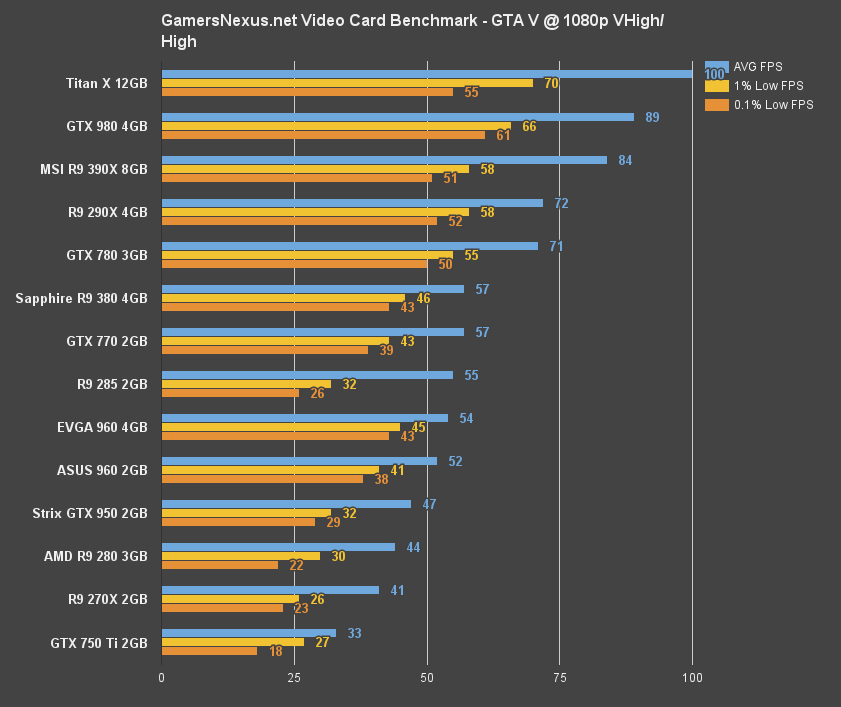

Moving to the usual 'very high' and 'high' mix (maxed-out settings, aside from AA and advanced graphics), the GTX 950 falls to 47FPS average, but still retains its high “low” metrics. The GTX 960 now sits at the passably playable 52FPS range, illustrating that the gap between the GTX 960 & R9 380 vs. the GTX 950 is wide enough that settings must be lowered.

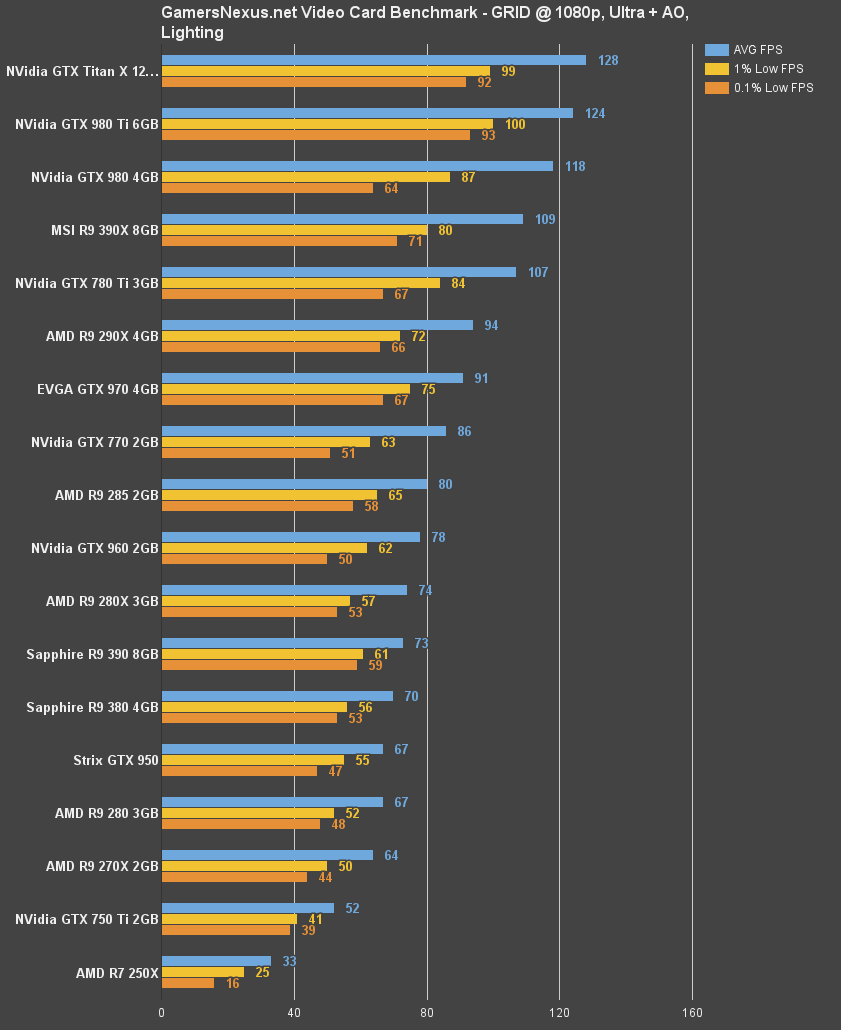

GTX 950 vs. 960, 380, 750 Ti Benchmark – GRID: Autosport

GRID plays on the GTX 950 (and 750 Ti, for the most part) readily at 1080p / ultra. The R9 270X / R7 370 performs only marginally worse than the GTX 950.

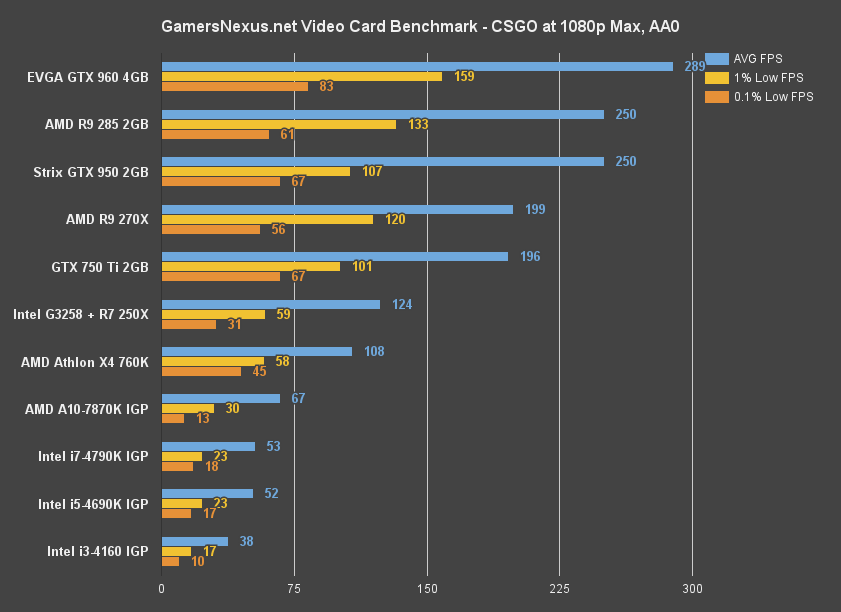

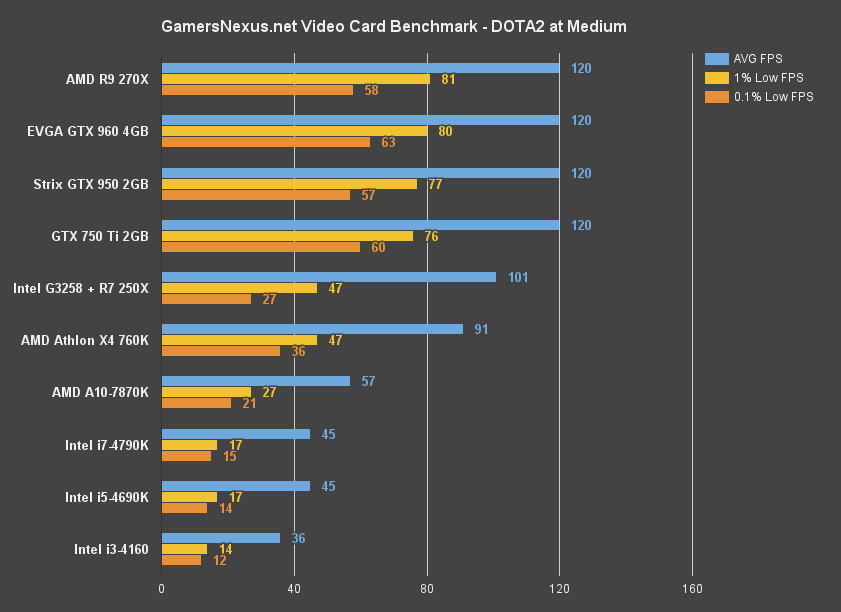

GTX 950 vs. 960, 380, 750 Ti Benchmark – CSGO & DOTA 2

These benchmarks are a little boring, but we felt inclined to include them. Above, you'll see the CSGO and DOTA2 performance results alongside IGP FPS. Note that DOTA 2's framerate locked to 120FPS.

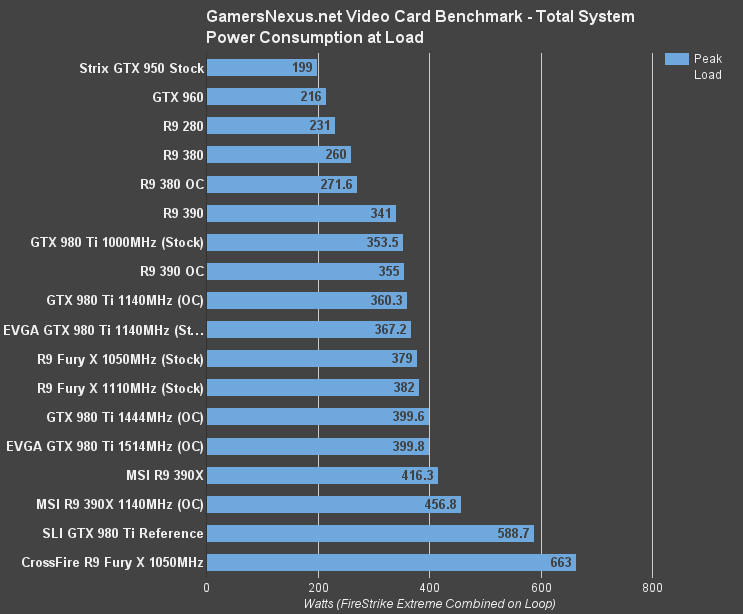

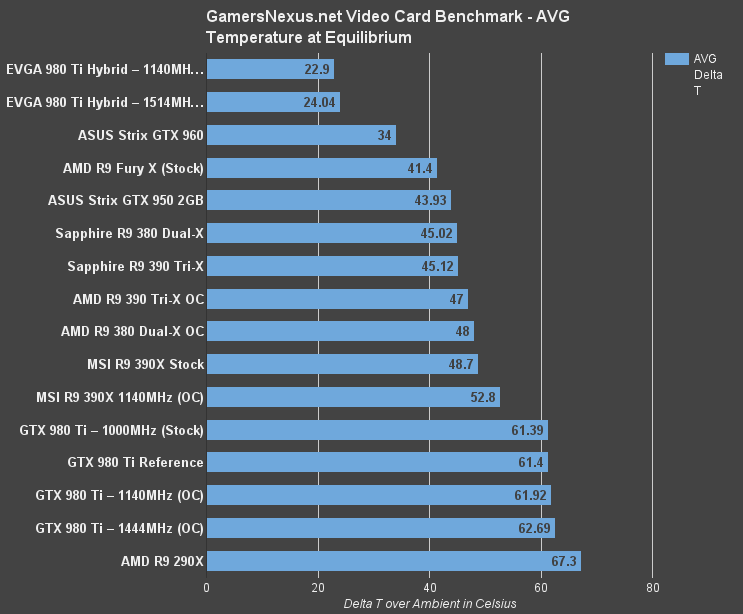

GTX 950 Temperatures & Power Consumption

Measured at equilibrium – we're still adapting our new over time metrics to GPU reviews – the GTX 950 weighs in at 41.4C delta T over ambient. We re-tested the GTX 950 and GTX 960 Strix cards to attempt to better understand the large disparity, ultimately chalking it up to the relatively large overclock pre-applied to our GTX 950 sample.

“Auto” fan settings were used for all tests, as we feel this is the most common way a user utilizes their GPU and presents a real-world metric.

Power consumption has become a target of nVidia's newest two architectures. AMD closed the gap significantly with Fiji's liquid implementation, but nVidia still holds the crown for raw power efficiency. We measured averaged peak system load at just 199W, low enough that a 300W PSU could be set in place if desired. A GTX 950 is more likely to be combined with lower-end hardware than the rest of our bench is using, so it is perfectly realistic that the average build could be supported on 300-350W PSUs.
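
As a quick check on the PSU sizing argument, the sketch below expresses our measured 199W peak system load as a share of a PSU's rated wattage. It is a simplification that compares the measured figure directly against the label rating and ignores PSU efficiency and per-rail limits; the function name is ours.

```python
def psu_load_percent(system_load_w: float, psu_rating_w: float) -> float:
    """Measured system load as a percentage of the PSU's rated wattage."""
    return system_load_w / psu_rating_w * 100


peak_system_load_w = 199  # averaged peak system load measured on our bench

for rating in (300, 350):
    load = psu_load_percent(peak_system_load_w, rating)
    print(f"{rating}W PSU: ~{load:.0f}% load, {rating - peak_system_load_w}W headroom")
```

A build with a lower-power CPU than our bench's i7-4790K would presumably sit lower still, though we did not measure such a configuration.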

The obvious flaw here, of course, is that such low wattage PSUs are presently rare. At least, they're rare while still retaining quality. We're hoping PSU manufacturers continue to lower the wattage and instead invest BOM on efficiency gains and protections.

Conclusion: Is the GTX 950 Worth It?

The GTX 950 ($160) is an inoffensive card. The unit lands squarely between its two same-brand siblings – the 750 Ti ($120) and 2GB 960 ($200) – making for an inherently uninteresting launch. There's no chart-topping going on with the GTX 950. That's not to say it's bad, just that it's not as polarizing as some other recent launches.

The GTX 950 outperforms the R9 270X in most games and, by the transitive property of device refreshing, the R7 370, while sitting shy of the +$40 R9 380 in most instances. The GTX 750 Ti is still a strong performer for gamers less interested in high-fidelity, triple-A graphics (see: Witcher 3, GTA) or who are more focused on casual and living room experiences. The 750 Ti falls to the wayside in the event a gamer has a slightly increased budget and demands more of a game's graphics; our benchmarks prove that the 950-750 Ti delta is great enough that settings could be increased if using the GTX 950. Upgrading from an existing 750 Ti to GTX 950 is not advisable.

For those on the other side of the spectrum – the side approaching $200 – it makes better sense to investigate an R9 380 or GTX 960 purchase, as the same is true there: A settings increase is in order with the upgrade.

The GTX 950 falls between all of it. If your budget just happens to be $40 shy of $200 or $40 inflated over $120, then the GTX 950 is a fair buy with little competition at its price-point. It's not exciting, but it works well and fits the target market. One thing that is remarkable, though, is the GTX 950's performance at just 90W. A 300W PSU is an option for the GTX 950, depending on the rest of the config, which is certainly a feat to be admired.

As for the MOBA focus, it just seems a little silly to us. Unless you're making money on your MOBA gaming, the GTX 750 Ti, R7 370, and similarly affordable solutions (even the R7 250X) can handle DOTA 2 just fine. The latency reduction is cool, though, and will propagate through modern Maxwell cards via GFE.

- Steve “Lelldorianx” Burke.