No reference card has impressed us this generation, at least insofar as the enthusiast market is concerned. Primary complaints have centered on thermal limitations and excessive heat generation, despite reasonable use cases in SI builds and mITX deployments. For our core audience, though, it's made more sense to recommend AIB partner models for superior cooling, pre-overclocks, and (normally) lower prices.

But that's not always the case; sometimes, as with today's review unit, the price climbs. This new child of Corsair and MSI carries on the Hydro GFX and Seahawk branding, respectively, and is priced at ~$750. The card is the product of a partnership between the two companies, with MSI providing the GP104-400 chip and a reference board (FE PCB), and Corsair providing an H55 CLC and SP120L radiator fan. The companies sell the card separately, but it is the same product: MSI calls it the “Seahawk GTX 1080 ($750),” and Corsair sells it only on its webstore as the “Hydro GFX GTX 1080.” We first looked at this combination with the Seahawk 980 Ti vs. the EVGA 980 Ti Hybrid, and we'll be making the EVGA FTW Hybrid vs. Hydro GFX 1080 comparison in the next few days.

For now, we're reviewing the Corsair Hydro GFX GTX 1080 liquid-cooled GPU for thermal performance, endurance throttles, noise, power, FPS, and overclocking potential. We will primarily refer to the card as the Hydro GFX, as Corsair is the company responsible for providing the loaner review sample. Know that it is the same as the Seahawk.

NVIDIA GeForce GTX 1060 Specs vs. GTX 1070, GTX 1080, GTX 960

| NVIDIA Pascal vs. Maxwell Specs Comparison | GTX 1080 | GTX 1070 | GTX 1060 | GTX 980 Ti | GTX 980 | GTX 960 |
|---|---|---|---|---|---|---|
| GPU | GP104-400 Pascal | GP104-200 Pascal | GP106 Pascal | GM200 Maxwell | GM204 Maxwell | GM206 Maxwell |
| Transistor Count | 7.2B | 7.2B | 4.4B | 8B | 5.2B | 2.94B |
| Fab Process | 16nm FinFET | 16nm FinFET | 16nm FinFET | 28nm | 28nm | 28nm |
| CUDA Cores | 2560 | 1920 | 1280 | 2816 | 2048 | 1024 |
| GPCs | 4 | 3 | 2 | 6 | 4 | 2 |
| SMs | 20 | 15 | 10 | 22 | 16 | 8 |
| TPCs | 20 | 15 | 10 | - | - | - |
| TMUs | 160 | 120 | 80 | 176 | 128 | 64 |
| ROPs | 64 | 64 | 48 | 96 | 64 | 32 |
| Core Clock | 1607MHz | 1506MHz | 1506MHz | 1000MHz | 1126MHz | 1126MHz |
| Boost Clock | 1733MHz | 1683MHz | 1708MHz | 1075MHz | 1216MHz | 1178MHz |
| FP32 TFLOPs | 9TFLOPs | 6.5TFLOPs | 3.85TFLOPs | 5.63TFLOPs | 5TFLOPs | 2.4TFLOPs |
| Memory Type | GDDR5X | GDDR5 | GDDR5 | GDDR5 | GDDR5 | GDDR5 |
| Memory Capacity | 8GB | 8GB | 6GB | 6GB | 4GB | 2GB, 4GB |
| Memory Clock | 10Gbps GDDR5X | 4006MHz | 8Gbps | 7Gbps GDDR5 | 7Gbps GDDR5 | 7Gbps |
| Memory Interface | 256-bit | 256-bit | 192-bit | 384-bit | 256-bit | 128-bit |
| Memory Bandwidth | 320.32GB/s | 256GB/s | 192GB/s | 336GB/s | 224GB/s | 112GB/s |
| TDP | 180W | 150W | 120W | 250W | 165W | 120W |
| Power Connectors | 1x 8-pin | 1x 8-pin | 1x 6-pin | 1x 8-pin, 1x 6-pin | 2x 6-pin | 1x 6-pin |
| Release Date | 5/27/2016 | 6/10/2016 | 7/19/2016 | 6/1/2015 | 9/18/2014 | 1/22/2015 |
| Release Price | Reference: $700; MSRP: $600 | Reference: $450; MSRP: $380 | Reference: $300; MSRP: $250 | $650 | $550 | $200 |
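The FP32 and bandwidth rows follow from the other rows in the table. The sketch below assumes the usual peak-throughput convention of two FLOPs per CUDA core per clock (one fused multiply-add) and per-pin data rate times bus width for bandwidth. Run against the table, it suggests the listed GTX 1060 and GTX 980 Ti TFLOPS figures correspond to base clock, while the other cards' figures correspond to boost clock; the GTX 1080's 320.32GB/s reflects a data rate slightly above a round 10Gbps.

```python
# Peak FP32 throughput and memory bandwidth derived from the spec table.
# Assumption: peak FP32 = CUDA cores x 2 FLOPs per clock (one FMA) x clock.

SPECS = {
    # name: (cuda_cores, base_mhz, boost_mhz, mem_gbps_per_pin, bus_width_bits)
    "GTX 1080": (2560, 1607, 1733, 10.0, 256),
    "GTX 1070": (1920, 1506, 1683, 8.0, 256),
    "GTX 1060": (1280, 1506, 1708, 8.0, 192),
    "GTX 980 Ti": (2816, 1000, 1075, 7.0, 384),
    "GTX 980": (2048, 1126, 1216, 7.0, 256),
    "GTX 960": (1024, 1126, 1178, 7.0, 128),
}


def fp32_tflops(cores: int, clock_mhz: float) -> float:
    """Peak single-precision TFLOPS: cores * 2 FLOPs/clock * clock."""
    return cores * 2 * clock_mhz * 1e6 / 1e12


def bandwidth_gbs(gbps_per_pin: float, bus_bits: int) -> float:
    """Peak memory bandwidth in GB/s: per-pin data rate * bus width / 8 bits per byte."""
    return gbps_per_pin * bus_bits / 8


for name, (cores, base, boost, gbps, bus) in SPECS.items():
    print(f"{name:10s} base {fp32_tflops(cores, base):5.2f} TFLOPS, "
          f"boost {fp32_tflops(cores, boost):5.2f} TFLOPS, "
          f"{bandwidth_gbs(gbps, bus):6.1f} GB/s")
```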

The specs for all GTX 1080s are the same, as one might expect, with variance emerging only in the cooling solution and pre-overclock values. AIB partners further differentiate themselves with software (MSI and Corsair stick with the former's Afterburner) and with warranty plans.

The Hydro GFX GTX 1080, aka Seahawk, pre-overclocks to a boosted 1847MHz in the “OC mode” that's pre-applied to our review sample. In “gaming mode,” the card boosts to 1822MHz, and “silent mode” boosts to 1733MHz, the same as the stock GTX 1080's reference boost clock per nVidia's specs.
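To put the three modes in perspective, here is the uplift of each advertised boost clock over the 1733MHz reference boost. These are rated values only; Boost 3.0 typically runs above them in practice, as the ~1898MHz stock core clock in the overclocking stepping table later in this review shows.

```python
# Percentage uplift of each Hydro GFX / Seahawk boost mode over the
# GTX 1080 reference boost clock, using the advertised clocks quoted above.
REFERENCE_BOOST_MHZ = 1733

MODES_MHZ = {"OC mode": 1847, "Gaming mode": 1822, "Silent mode": 1733}


def uplift_pct(clock_mhz: float, reference_mhz: float = REFERENCE_BOOST_MHZ) -> float:
    """Return how far clock_mhz sits above reference_mhz, in percent."""
    return (clock_mhz / reference_mhz - 1) * 100


for mode, clock in MODES_MHZ.items():
    print(f"{mode}: {clock}MHz, +{uplift_pct(clock):.1f}% over reference boost")
```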

Corsair and MSI mostly stick to the stock memory clock for the Hydro GFX, about 10Gbps (or 10GHz) for the reference design. OC Mode pushes us to 10.1GHz (10108MHz) effective memory clock, which is distilled down to the actual clock by dividing by 2 for DDR and again by 2 for GDDR5.

Corsair and MSI use a single 8-pin header, more than sufficient for the GTX 1080 GP104-400 GPU. MSI previously told us that they use a custom VBIOS with a marginally increased VDDC limit over the FE VBIOS, but we've yet to see any meaningful OC gain from such changes. VBIOS is thoroughly locked down this generation, leaving little room for AIB partners to gain an advantage.

Tearing down the Hydro GFX will teach us more about what to expect:

Corsair GTX 1080 Hydro GFX Tear-Down

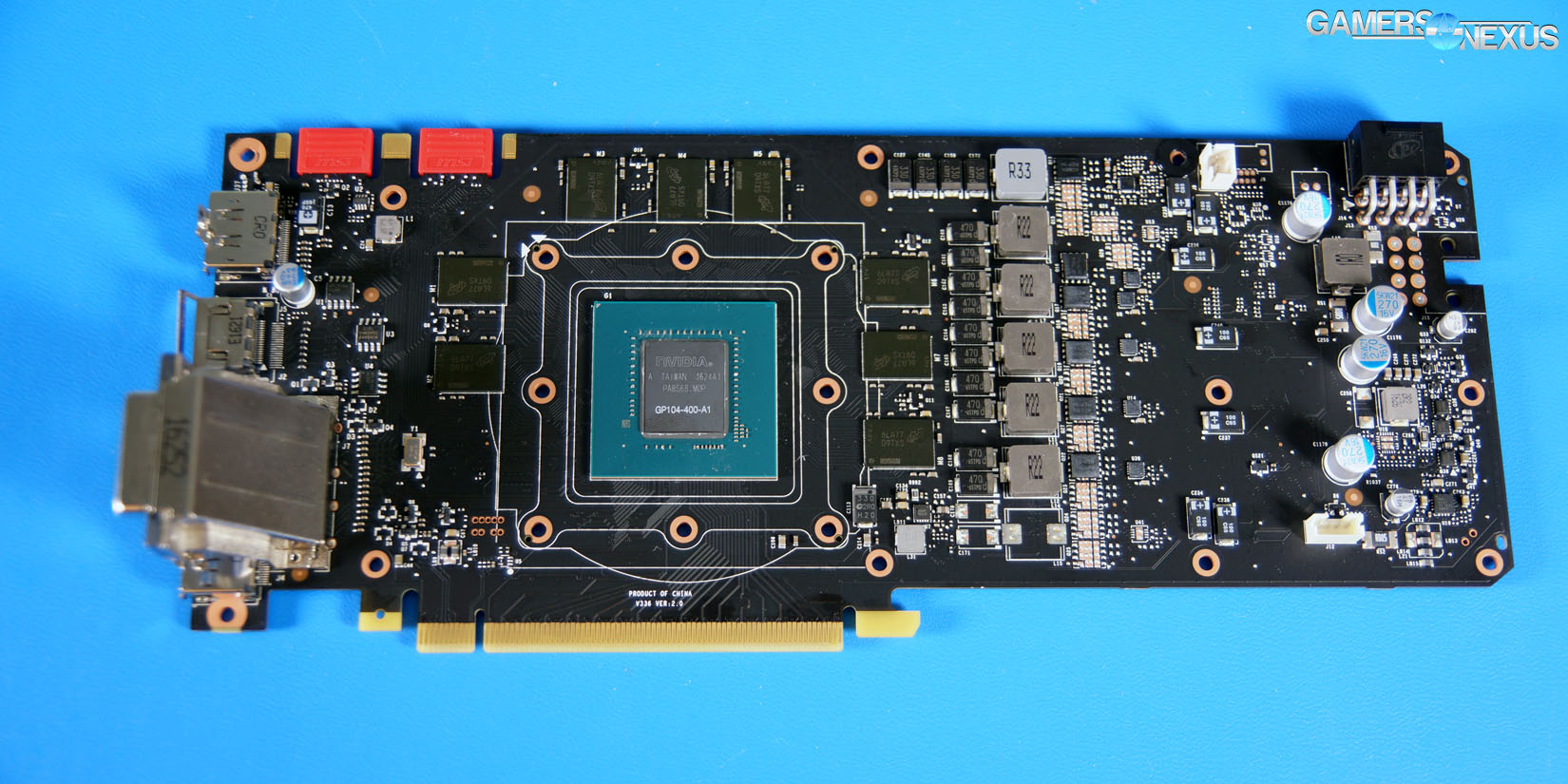

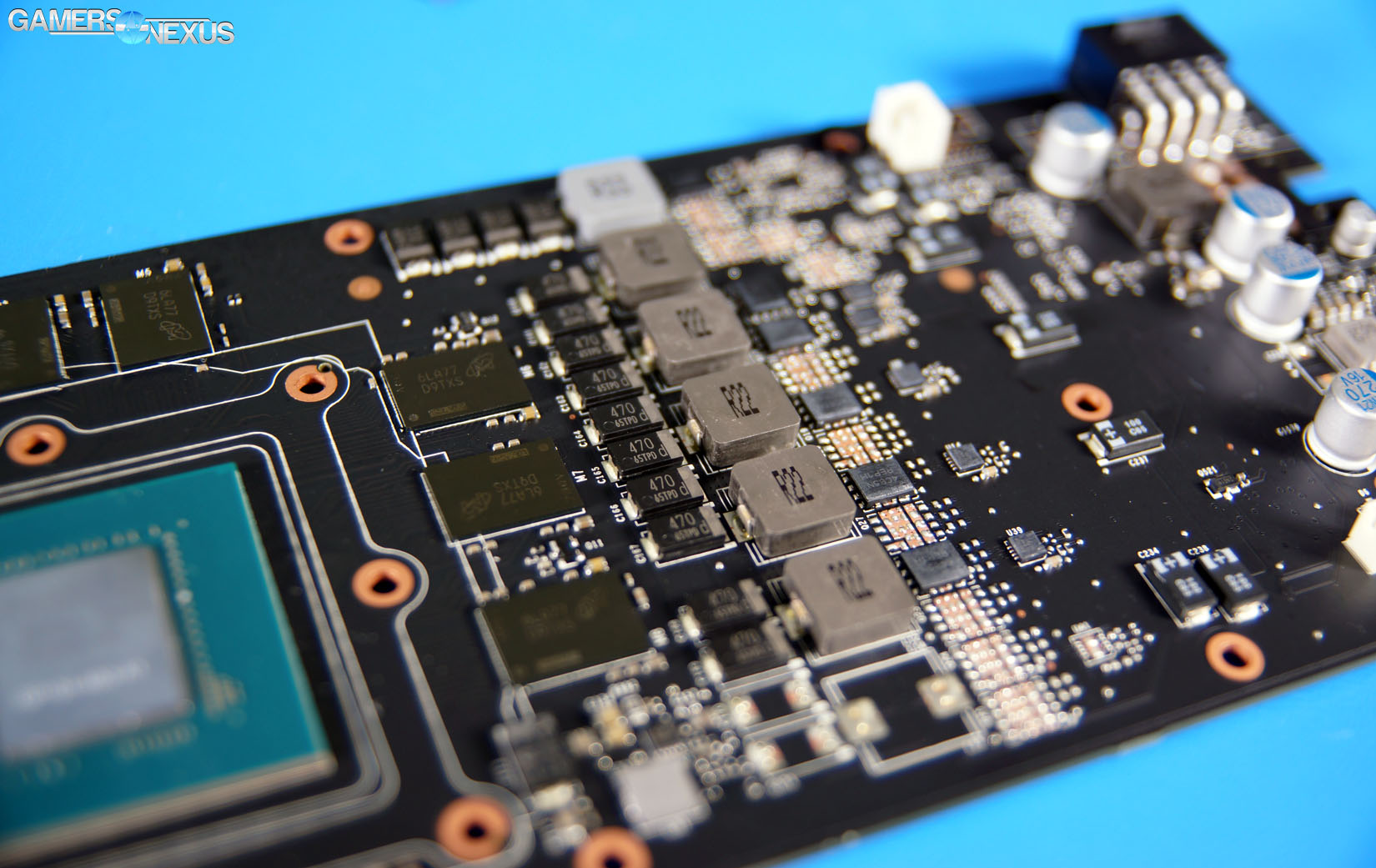

Taking apart the Corsair Hydro GFX GTX 1080 (or “MSI Seahawk 1080,” same thing) reveals that its PCB is the very same one nVidia uses for its reference cards. We first showed the Founders Edition PCB in our GTX 1080 Hybrid build log, briefly detailing its 5+1 phase VRM power design, 8Gb Micron modules (for 8GB GDDR5X), and the cost-cutting component omissions on the board. The VRM, for instance, has room for one more full set of caps, FETs, and an inductor for an additional phase, but the PCB of both the FE and (by extension) the Hydro GFX leaves this position unpopulated. That leaves us with the 5+1 setup, for which the initial overclocking is shown in our GTX 1080 review. We'll get to the Hydro GFX overclock momentarily.

The above video details our tear-down of the Corsair Hydro GFX / MSI Seahawk GTX 1080. Thankfully, unlike the hellish-to-disassemble Founders Edition, the Corsair and MSI amalgam sticks entirely to Phillips head screws, mostly of the A1 size. There are 8 Phillips screws securing the somewhat flimsy backplate (it's clearly mostly for looks, though it provides some structural support), then 4 screws securing the pump block to the PCB. Six Phillips screws and 2 hex screws (for DVI) secure the expansion plate to the card, and another 6 Phillips screws secure the shroud to the baseplate.

The baseplate is used to sink heat from the VRAM and VRM, which is then dissipated by the blower fan. The baseplate isn't finned, but GDDR5X doesn't generate a ton of heat and the card's OC potential is fairly limited, so the baseplate + blower solution is sufficient. We'll show this later.

With the baseplate removed, the GP104-400 (rev A1) GPU is revealed, along with the expected 8x 8Gb VRAM modules, 5+1 VRM, and the rest of the PCB. A splitter cable merges the pump power and fan power into the PCB's PWM fan header. The blower fan is modulated by thermal demand, as is normal.

The Corsair H55 CLC sees deployment in the Seahawk/Hydro GFX. From quick measurements and visual inspection, it appears that this variant of the H55 uses a flat coldplate -- an improvement over the curvature found in most CPU liquid coolers. A slight bow is useful for dissipating heat across an IHS -- a curved surface -- and for dealing with unique CPU hotspots. These hotspots don't exist on a GPU, though; at least, not the same hotspots. A GPU is a flat piece of silicon atop a substrate, with no IHS between the GPU and its cooling solution. Making direct, perfectly flat contact will immediately improve performance over a run-of-the-mill CPU CLC, which may bow in a way that prevents full, flat contact with the surface.

Tension of the solution also matters, and imperfect mounting pressure can skew thermals negatively. The Corsair unit appears to just barely make acceptable contact with the GPU silicon, which seems to be compensated for with additional torque on the screws. The company appears to have slotted o-rings between the CLC standoffs and the PCB to prevent cosmetic damage, so steps have been taken to account for this. The marginal contact results from the tall baseplate, which exceeds the z-height of the silicon and would therefore require a copper protrusion for full contact, or another solution, like additional torque on the screws. We ran into this same issue when building our GTX 1060 Hybrid card, and solved it in a less-than-elegant way: by filing down the baseplate.

Compared to the GTX 1080 FE, the Corsair Hydro GFX is trivial to disassemble – and that's a good thing. It'd be easy to get in there and make changes or fixes, if necessary.

Reminder on Pascal Architecture & GTX 1080 Thermal/Power Design

To learn about clock gating, power savings, Boost 3.0, and Pascal architecture (including discussion on the block diagram, datapath organization, etc.), read these posts:

- Pascal GP100 Architecture Deep-Dive

- GTX 1080 Review & Architecture

- GTX 1070 Review & Architecture

- GTX 1060 Review & Architecture

Our latest AMD RX 460 review explains Polaris 10 & 11 in great detail, if that also interests you. We'd also recommend visiting our Titan X GN Hybrid Results content & GTX 1080 GN Hybrid results content for further discussion on Pascal functionality under varied thermal scenarios.

Here's a quick quote of some relevant pieces, pasted from our GTX 1080 review:

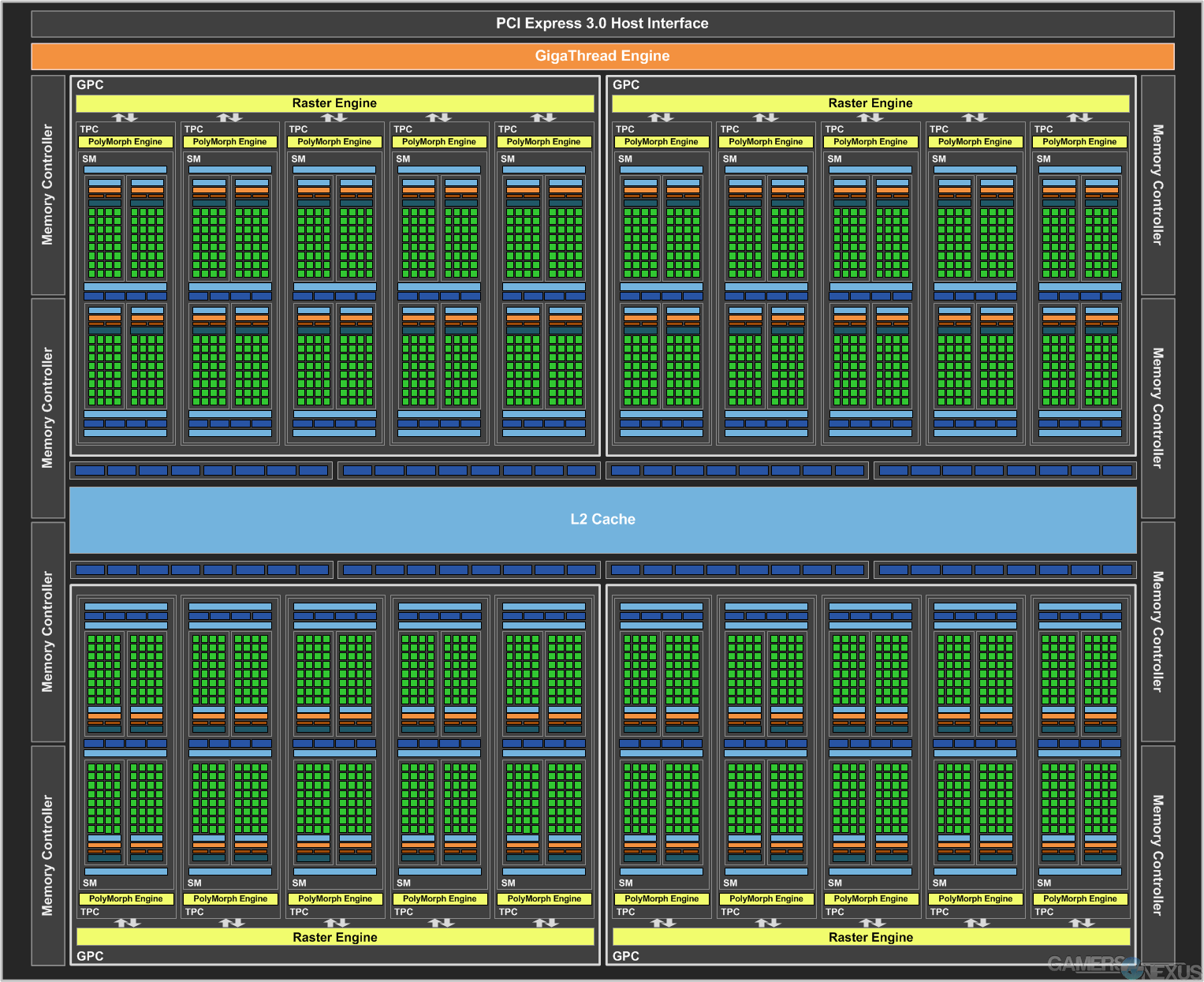

“GP104 is a gaming-grade GPU with no real focus on some of the more scientific applications of GP100. GP104 (and its host GTX 1080) is outfitted with 7.2B transistors, a marked growth over the GTX 980's 5.2B transistors (though fewer than the GTX 980 Ti's 8B – but transistor count doesn't mean much as a standalone metric; architecture matters).

GP104 hosts 20SMs. SM architecture is familiar in some ways to GM204: An instruction cache is shared between two effective “partitions,” with each of those owning dedicated instruction buffers (one each), warp schedulers (one each), and dispatch units (two each). The register file is sized at 16,384 x 32-bit, one per “partition” of the SM. The new PolyMorph Engine 4.0 sits on top of this, but we'll talk about that more within the simultaneous multi-projection section.

Each GP104 SM hosts 128 CUDA cores – a stark contrast from the layout of the GP100, which accommodates for FP64 and FP16 where GP104 does not (because neither is particularly useful for gamers). In total, the 20 SMs and 128 core-per-SM count spits out a total of 2560 CUDA cores. GP104 contains 20 geometry units, 64 ROPs (depicted as horizontally flanking the L2 Cache), and 160 TMUs (20 SMs * 8 TMUs = 160 TMUs).

Further, SMs each possess 256KB of register file capacity, 1x 96KB shared memory unit, 48KB of L1 Cache, and the 8 TMUs we already discussed. There are four total dedicated raster engines on Pascal GP104 (one per GPC). There are 8 Special Function Units (SFUs) per SM partition – 16 total per SM – and 8 Load/Store (LD/ST) units per SM partition. SFUs are utilized for low-level execution of mathematical instruction, e.g. trigonometric sin/cos math. LD/ST units transact data between cache and DRAM.

The new PolyMorph Engine 4.0 (PME - originating on Fermi) has been updated to support a new Simultaneous Multi-Projection (SMP) function, which we'll explain in greater depth below. On an architecture level, each TPC contains one SM and one PolyMorph Engine (20 total PolyMorph engines); drilling down further, each PME contains a unit specifically dedicated to SMP tasks."
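The per-SM figures in the quote roll up to the chip-level counts in the spec table. A minimal sketch of that arithmetic, taking the quoted layout at face value (four GPCs, one raster engine per GPC, one SM and one PolyMorph Engine per TPC):

```python
# Roll the quoted per-SM GP104 figures up to whole-chip totals.
GPCS = 4
SMS = 20
CORES_PER_SM = 128
TMUS_PER_SM = 8

sms_per_gpc = SMS // GPCS          # 5 SMs per GPC
cuda_cores = SMS * CORES_PER_SM    # 2560 CUDA cores
tmus = SMS * TMUS_PER_SM           # 160 TMUs
raster_engines = GPCS              # one raster engine per GPC
polymorph_engines = SMS            # one SM and one PME per TPC, so PMEs == SMs

print(sms_per_gpc, cuda_cores, tmus, raster_engines, polymorph_engines)
```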

Learn more about Asynchronous Compute, Preemptive Compute, and GP104 architecture here.

Reminder on GN's Previous GTX 1080 DIY “Hybrid” Build

As a reminder, we previously converted the 1080 FE into a liquid-cooled card – months ahead of availability of liquid-cooled GTX 1080s – and that content is available here:


Test Methodology

Game Test Methodology

We tested using our GPU test bench, detailed in the table below. Our thanks to supporting hardware vendors for supplying some of the test components.

AMD 16.8.1 drivers were used for the RX 470 & 460 graphics cards. Drivers 16.7.2 were used for testing GTA V & DOOM (incl. Vulkan patch) on the RX 480, and drivers 16.6.2 were used for all other devices or games. NVidia's 372.54 drivers were used for game (FPS) testing on the GTX 1080 and 1060; the 368.69 drivers were used for other devices. Game settings were manually controlled for the DUT. All games were run at presets defined in their respective charts. We disable brand-supported technologies in games, like The Witcher 3's HairWorks and HBAO. All other game settings are defined in respective game benchmarks, which we publish separately from GPU reviews. Our test courses, in the event manual testing is executed, are also uploaded within that content. This allows others to replicate our results by studying our bench courses. In AMD Radeon Settings, we disable all AMD "optimization" of graphics settings, e.g. filtration, tessellation, and AA techniques. This is to ensure that games are compared as "apples to apples" graphics output. We leave the application in control of its graphics, rather than the IHV. In NVIDIA's control panel, we disable G-Sync for testing (and disable FreeSync for AMD).

Windows 10-64 build 10586 was used for testing.

Each game was tested for 30 seconds in an identical scenario, then repeated three times for parity.

Average FPS, 1% low, and 0.1% low values are measured. We do not measure maximum or minimum FPS results, as we consider these numbers to be pure outliers. Instead, we take an average of the lowest 1% of results (1% low) to show real-world, noticeable dips; we then take an average of the lowest 0.1% of results (0.1% low) to capture severe spikes.
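The 1% and 0.1% low metrics described above can be sketched as follows. Our in-house tooling isn't shown here, so this assumes a per-frame log of frame times in milliseconds, converts each to instantaneous FPS, and averages the slowest 1% (or 0.1%) of frames, rounding the sample count up so short runs still yield at least one frame. Average FPS is computed as total frames over total time.

```python
from statistics import mean


def fps_metrics(frametimes_ms: list[float]) -> dict[str, float]:
    """Average FPS plus '1% low' and '0.1% low' as averages of the slowest frames.

    Assumption: each frame's instantaneous FPS is 1000 / frametime_ms, and the
    'lowest 1%' is the slowest ceil(1% of frames) samples.
    """
    if not frametimes_ms:
        raise ValueError("need at least one frame")
    per_frame_fps = sorted(1000.0 / ft for ft in frametimes_ms)  # slowest first
    n = len(per_frame_fps)

    def low(per_mille: int) -> float:
        # Integer ceiling of n * per_mille / 1000, never fewer than one frame.
        count = max(1, -(-n * per_mille // 1000))
        return mean(per_frame_fps[:count])

    # Average FPS over the run: frames rendered divided by elapsed seconds.
    avg_fps = n / (sum(frametimes_ms) / 1000.0)
    return {"avg": avg_fps, "1% low": low(10), "0.1% low": low(1)}


# Example: 990 smooth 10ms frames, nine 20ms hitches, and one 40ms spike.
metrics = fps_metrics([10.0] * 990 + [20.0] * 9 + [40.0])
print(metrics)
```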

| GN Test Bench 2015 | Name | Courtesy Of | Cost |
|---|---|---|---|
| Video Card | This is what we're testing! | - | - |
| CPU | Intel i7-5930K CPU | iBUYPOWER | $580 |
| Memory | Corsair Dominator 32GB 3200MHz | Corsair | $210 |
| Motherboard | EVGA X99 Classified | GamersNexus | $365 |
| Power Supply | NZXT 1200W HALE90 V2 | NZXT | $300 |
| SSD | HyperX Savage SSD | Kingston Tech. | $130 |
| Case | Top Deck Tech Station | GamersNexus | $250 |
| CPU Cooler | NZXT Kraken X41 CLC | NZXT | $110 |

For Dx12 and Vulkan API testing, we use built-in benchmark tools and rely upon log generation for our metrics. That data is reported at the engine level.

Video Cards Tested

- Corsair Hydro GFX GTX 1080 ($750)

- Sapphire RX 470 Platinum (~$180?)

- MSI RX 480 Gaming X

- MSI GTX 1060 Gaming X ($290)

- NVIDIA GTX 1060 FE ($300)

- AMD RX 480 8GB ($240)

- NVIDIA GTX 1080 Founders Edition ($700)

- NVIDIA GTX 980 Ti Reference ($650)

- NVIDIA GTX 980 Reference ($460)

- NVIDIA GTX 980 2x SLI Reference ($920)

- AMD R9 Fury X 4GB HBM ($630)

- AMD MSI R9 390X 8GB ($460)

- And more

Thermal Test Methodology

We strongly believe that our thermal testing methodology is among the best on this side of the tech-media industry. We've validated our testing methodology with thermal chambers and have proven near-perfect accuracy of results.

Conducting thermal tests requires careful measurement of temperatures in the surrounding environment. We control for ambient by constantly measuring temperatures with K-Type thermocouples and infrared readers. We then produce charts using a Delta T(emperature) over Ambient value. This value subtracts the thermo-logged ambient value from the measured diode temperatures, producing a delta report of thermals. AIDA64 is used for logging thermals of silicon components, including the GPU diode. We additionally log core utilization and frequencies to ensure all components are firing as expected. Voltage levels are measured in addition to fan speeds, frequencies, and thermals. GPU-Z is deployed for redundancy and validation against AIDA64.

All open bench fans are configured to their maximum speed and connected straight to the PSU. This ensures minimal variance when testing, as automatically controlled fan speeds would reduce the reliability of benchmarking. The CPU fan is set to use a custom fan curve that was devised in-house after a series of tests. We use a custom-built open air bench that mounts the CPU radiator out of the way of the airflow channels influencing the GPU, so the CPU heat is dumped where it will have no measurable impact on GPU temperatures.

We use an AMPROBE multi-diode thermocouple reader to log ambient actively. This ambient measurement is used to monitor fluctuations and is subtracted from absolute GPU diode readings to produce a delta value. For these tests, we configured the thermocouple reader's logging interval to 1s, matching the logging interval of GPU-Z and AIDA64. Data is calculated using a custom, in-house spreadsheet and software solution.
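The delta T over ambient calculation amounts to a per-second subtraction followed by averaging over the window of interest. Our spreadsheet isn't reproduced here; this sketch assumes the diode and ambient logs are already aligned sample-for-sample at the 1s interval described above, and the function names are illustrative.

```python
from statistics import mean


def delta_t_series(diode_c: list[float], ambient_c: list[float]) -> list[float]:
    """Second-by-second GPU diode temperature minus ambient (both logged at 1s)."""
    if len(diode_c) != len(ambient_c):
        raise ValueError("logs must be aligned to the same 1s timestamps")
    return [gpu - amb for gpu, amb in zip(diode_c, ambient_c)]


def average_delta_t(diode_c: list[float], ambient_c: list[float],
                    start: int, end: int) -> float:
    """Mean delta T over a slice of samples, e.g. the load portion of a run."""
    return mean(delta_t_series(diode_c, ambient_c)[start:end])


# Example: one idle sample followed by two load samples.
diode = [40.0, 60.0, 62.0]
ambient = [21.0, 21.5, 22.0]
print(delta_t_series(diode, ambient), average_delta_t(diode, ambient, 1, 3))
```

Subtracting ambient at each second, rather than subtracting a single room reading at the end, keeps room temperature drift during a long run out of the reported result.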

Endurance tests are conducted for new architectures or devices of particular interest, like the GTX 1080, R9 Fury X, or GTX 980 Ti Hybrid from EVGA. These endurance tests report temperature versus frequency (sometimes versus FPS), providing a look at how cards interact in real-world gaming scenarios over extended periods of time. Because benchmarks do not inherently burn-in a card for a reasonable play period, we use this test method as a net to isolate and discover issues of thermal throttling or frequency tolerance to temperature.

Our test starts with a two-minute idle period to gauge non-gaming performance. A script automatically triggers the beginning of a GPU-intensive benchmark running MSI Kombustor – Titan Lakes for 1080s. Because we use an in-house script, we are able to perfectly execute and align our tests between passes.

Power Testing Methodology

Power consumption is measured at the system level. You can read a full power consumption guide and watt requirements here. When reading power consumption charts, do not read them as GPU-specific requirements; this is system-level power draw.

Power draw is measured during a FireStrike Extreme - GFX2 run. We are currently rebuilding our power benchmark.

Corsair Hydro GFX GTX 1080 Thermal Benchmark vs. GN's Hybrid, FE, More

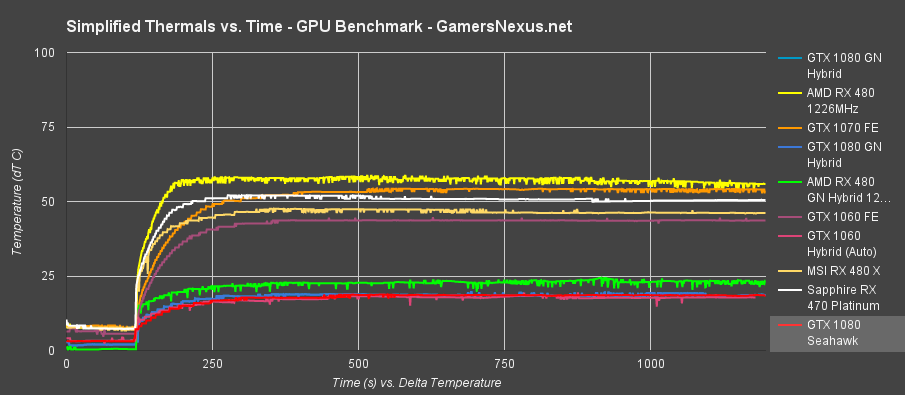

Our thermal tests consist of peak average thermals, using delta T over ambient, and of endurance thermals that present the GPU Diode temperature. This helps us define thermal throttle points and clock-rate instability, as correlated in our versus time charts. Our first chart uses the delta value, which, as described in the test methodology section, is derived by constantly monitoring ambient with thermocouples and subtracting second-to-second ambient from second-to-second GPU Diode temperatures.

Two K-type thermocouples are deployed: One at the fan intake, about 2mm away from the radiator fan, and one that's ~8” above the test bench to collect room ambient. We use a spreadsheet of our own design to perform necessary calculations, resulting in the below charts.

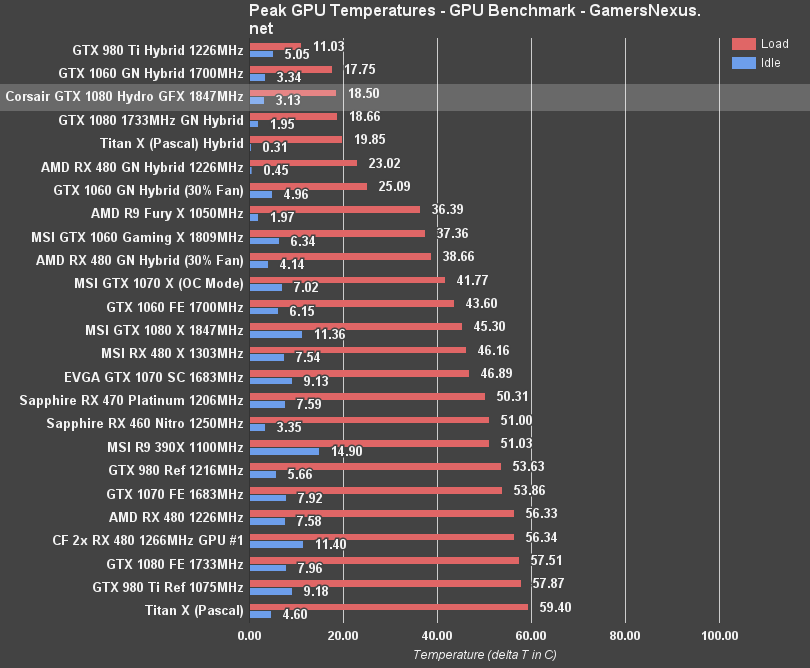

The GTX 1080 Hydro GFX / MSI Seahawk lands first among the high-end Pascal GPUs, at 18.5C delta T over ambient under load. This plants the Corsair and MSI amalgam ahead of our DIY Hybrid, which used an EVGA cooler ($100) and a less official implementation. Our version of the GTX 1080 Hybrid, the GN Hybrid, operates at 18.66C (0.16C warmer) but has a lower clock-rate and, to some extent, lower voltage, which contributes to its lower idle result. We believe that part of the load difference comes from the implementation, since we used longer screws and have imperfect mounting pressure as a result. We also rendered the blower fan mostly useless by removing the shroud, so neighboring components will get warmer than with a proper EVGA or Corsair / MSI Hybrid card. We'll soon test the official EVGA variant, which we only received today.

For reference, the Founders Edition GTX 1080 operates at around 57.51C delta T over ambient, a swing of about 39C – and that's with a higher clock on the Hydro GFX.

Corsair Hydro GFX GTX 1080 Thermals over Time

Here's the temperature over time plot. This shows our automated test execution kicking off the load test at the same point for all cards. This is mostly used for internal data integrity and validation, but also helps provide a look at ramp-up time for the cards. The data is the same as the above chart – just plotted differently.

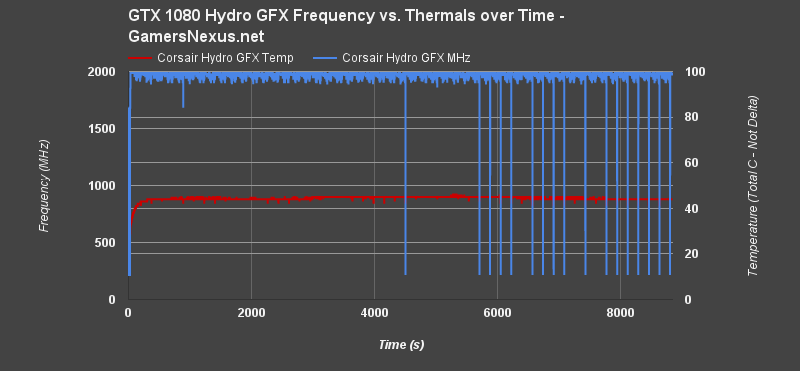

Corsair Hydro GFX GTX 1080 Endurance

Let's look at our endurance charts.

This chart shows frequency versus temperature and time, plotted over a two-hour burn-in period. The clock-rate is fairly tame for the first 90 minutes, varying within about +/-80MHz of the average. Later, we start seeing hard drops to 215MHz – and that's with an advanced cooling solution on the Hydro GFX.

We've seen this behavior on a couple of Pascal GPUs. During the latter half of the endurance test, the clock-rate will occasionally, for a one-second period, drop to 215MHz from the near-2GHz mark a second prior. We've reached out to ask nVidia about this anomaly, as it is occurring somewhat regularly on Pascal cards. In this instance, it's not a thermal throttle – likely power or something similar. NVidia has informed us that this is part of normal Boost functionality, but we are waiting for further feedback as to why it happens on some cards and not others. So far, the answer appears to be that not all cards or GPUs are equal, and that we need a “huge” sample size for further analysis.

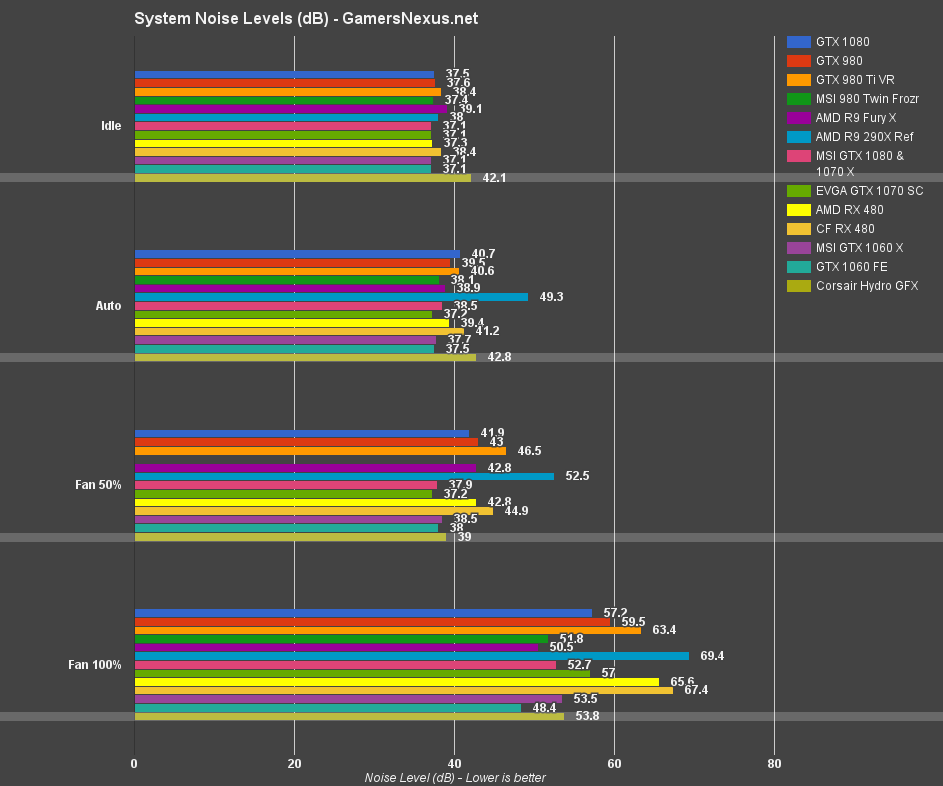
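Because these dips last only a second, they're easy to miss when eyeballing a long log. A minimal sketch for flagging them in a 1s-sampled frequency log; the 500MHz threshold and the function name are hypothetical choices for illustration. Limiting the run length matters: the two-minute idle period at the start of each test also sits at low clocks, but as one long run it is excluded.

```python
def find_clock_drops(freq_mhz: list[float], threshold_mhz: float = 500.0,
                     max_len: int = 1) -> list[int]:
    """Return start indices (seconds, for a 1s log) of runs below threshold_mhz
    that last at most max_len samples before the clock recovers."""
    drops = []
    i, n = 0, len(freq_mhz)
    while i < n:
        if freq_mhz[i] < threshold_mhz:
            start = i
            while i < n and freq_mhz[i] < threshold_mhz:
                i += 1
            if i - start <= max_len:  # short dip, not an idle period
                drops.append(start)
        else:
            i += 1
    return drops


log = [1974, 1974, 215, 1961, 1961, 215, 215, 1949]
print(find_clock_drops(log), find_clock_drops(log, max_len=2))
```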

Corsair Hydro GFX GTX 1080 Noise Comparison

This chart, as a reminder, shows system noise level: the noise of the entire system, not just the card. We disconnect all bench fans for this test, but there's still a (quiet) PSU fan and CPU cooler fan on the X41 CLC. Our noise floor for the room is approximately 25-26dB, depending on time of day (this is accounted for in delta values), and the lowest noise of the bench (no GPU) is about 37.1dB.

The Corsair Hydro GFX system measures about 42.1dB at idle (with the radiator fan at full speed) and 42.8dB on auto (5-minute load, which drives the VRM blower fan to 50% speed), but drops to 39dB with the radiator fan at 50%. This is the biggest point: because temperatures will still be well below those of any air-cooled card, dropping or customizing your radiator fan speed yields a far better noise-thermal balance, and is the secret to actually using “hybrid” cards to their fullest potential.

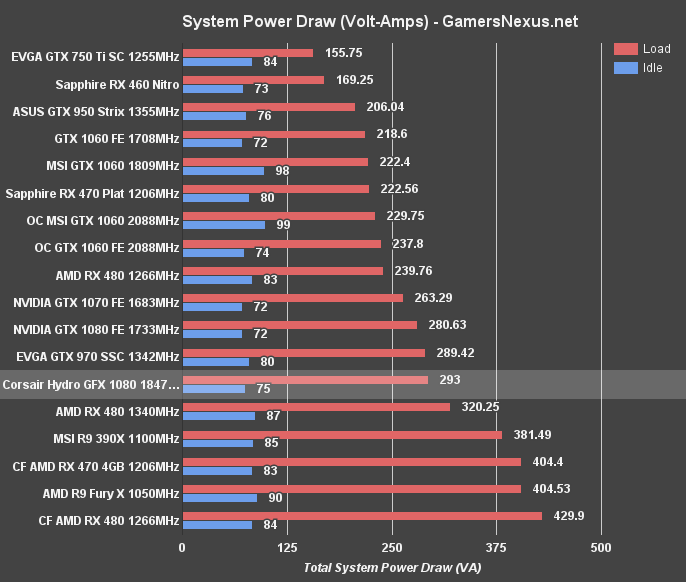
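Because decibels are logarithmic, the card's own contribution can't be found by subtracting the 37.1dB bench baseline from the 42.1dB idle reading directly. A rough sketch, assuming uncorrelated sources that add on a power basis (a standard acoustics approximation, not necessarily how our delta values are computed):

```python
import math


def db_to_power(db: float) -> float:
    """Convert a sound level in dB to relative (linear) power."""
    return 10 ** (db / 10)


def subtract_noise_floor(total_db: float, floor_db: float) -> float:
    """Estimate one source's level by removing a known background on a power basis."""
    if total_db <= floor_db:
        raise ValueError("total must exceed the background level")
    return 10 * math.log10(db_to_power(total_db) - db_to_power(floor_db))


# Hydro GFX system at idle (42.1dB) minus the no-GPU bench baseline (37.1dB).
print(f"{subtract_noise_floor(42.1, 37.1):.2f} dB")
```

The result lands around 40.4dB rather than 5dB: a 5dB difference means the card-plus-bench is roughly three times the bench's acoustic power, so the card alone accounts for most, but not all, of the total.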

Corsair Hydro GFX GTX 1080 Power Draw

As explained in the test methodology section, we're measuring apparent power draw at the wall using VA. The test shows total system power draw, not per-device power draw. This means we are able to look at deltas between configurations for an understanding of where each device lands in the stack.

Corsair's Hydro GFX, with its pre-overclock, lands just above the GTX 1080 FE in power consumption, as expected. We're at ~293VA total system power draw on the bench platform (detailed in the test methodology section), roughly 13VA higher than the FE card (~280VA).

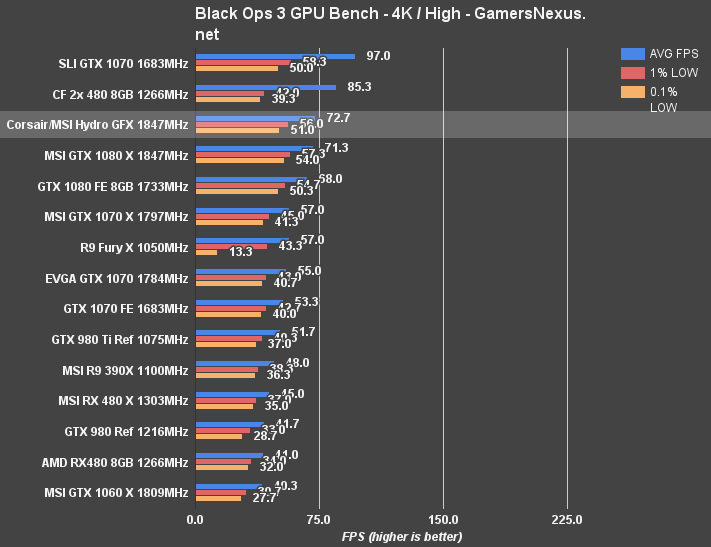

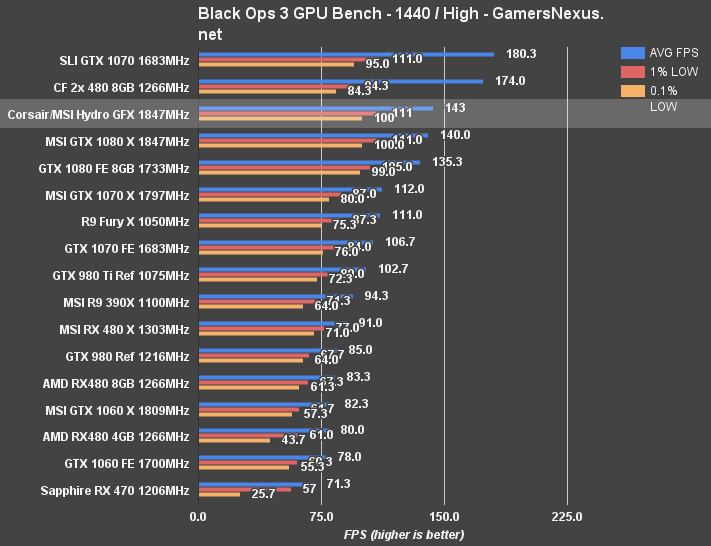

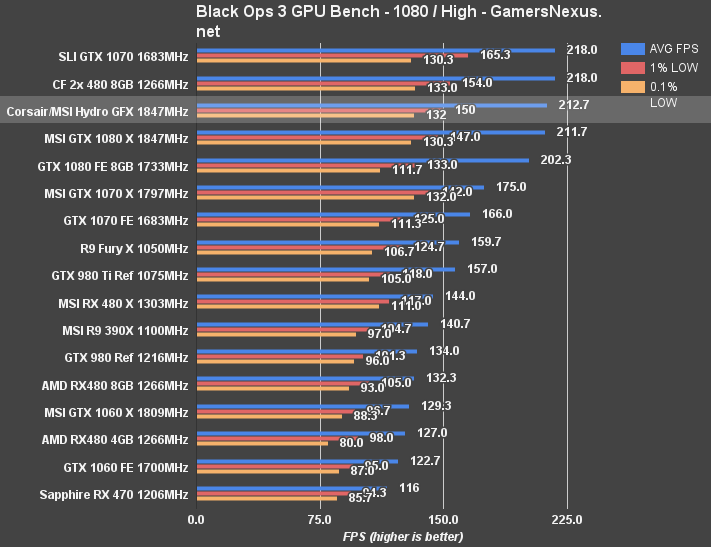

Black Ops 3 Benchmark – Hydro GFX 1080 vs. MSI Gaming X, Founders Edition, etc.

Call of Duty: Black Ops 3 runs on our bench with “high” settings, so there's still room to scale to “extra” (effectively Ultra) with these high-end devices. The game is also exceptionally well optimized and is vendor agnostic, but tends to show some favor toward AMD in FPS results.

We're testing using 4K, 1440p, and 1080p resolutions with the “High” settings (OIT set to “Medium”). 1080p doesn't really need to be tested, but the majority of the market is still running that resolution, so we threw it in.

At 4K/High, we're seeing the GTX 1080 Hydro GFX perform at about 73FPS AVG, with lows that are mostly in-step with the MSI 1080 Gaming X. They're marginally reduced as a result of boost clock functionality during this particular title, but the Hydro GFX does maintain about a 1FPS lead. That's about all we can expect for two cards on the same silicon. In this particular title, CrossFire and SLI configurations will outperform the single 1080 Hydro GFX, though with disproportionately lower 1% and 0.1% metrics.

At 1440p, we see the Hydro GFX pushing to 143FPS – just at the 144Hz point, for FPS players who care about that refresh – with high sustained low metrics. The 1080 Gaming X is about 1-2% slower, with the GTX 1080 FE a full 8FPS, or about 5.7% slower.

1080p is a little unnecessary to test, again, but we're doing it because so much of the market is still on 1080p displays. With High settings, we've still got room to go up to “Extra,” but we're pushing 212FPS out the gate, average, with the GTX 1080 Gaming X just behind. The 1080 FE is showing roughly a 5% change, as with the previous tests.

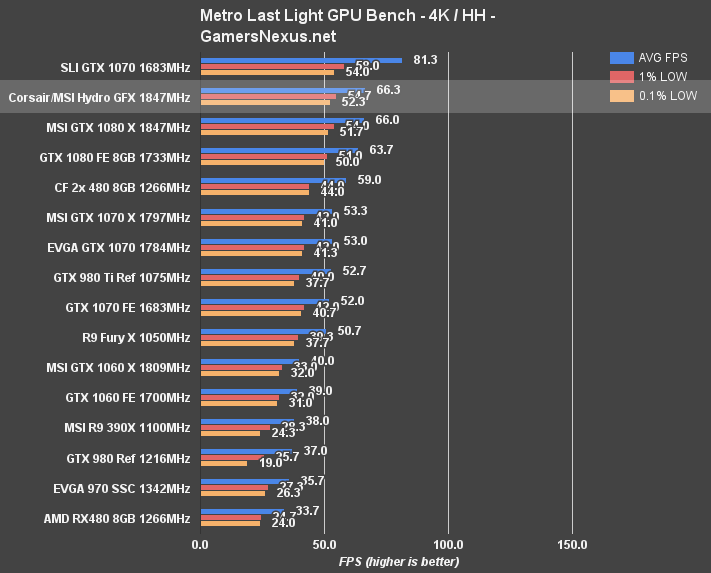

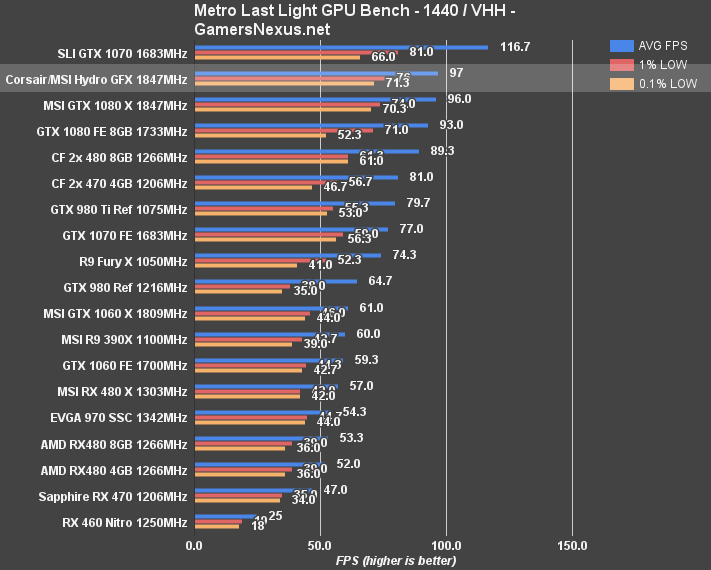

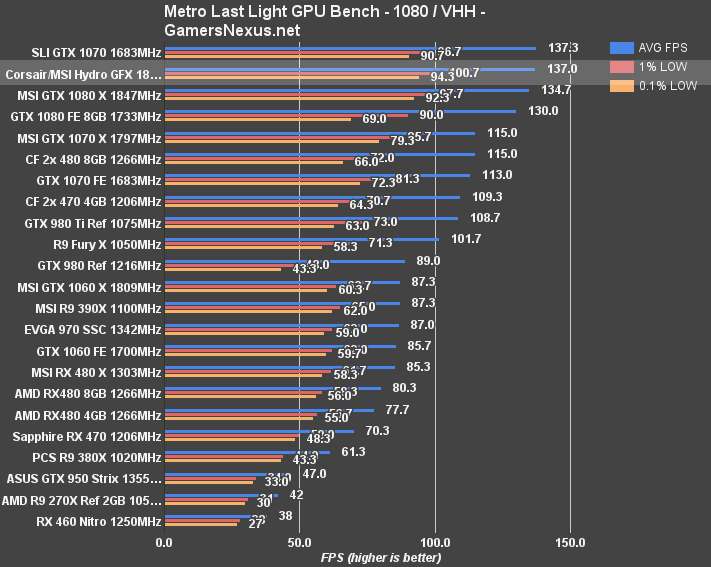
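Percentage gaps like the “about 5.7% slower” figure for the FE at 1440p depend on which card is used as the baseline. A small sketch of both conventions, using the 143FPS vs. 135FPS Black Ops 3 result:

```python
def pct_slower(leader_fps: float, trailer_fps: float) -> float:
    """How much slower the trailing card is, as a percentage of the leader's FPS."""
    return (leader_fps - trailer_fps) / leader_fps * 100


def pct_faster(leader_fps: float, trailer_fps: float) -> float:
    """How much faster the leader is, as a percentage of the trailing card's FPS."""
    return (leader_fps - trailer_fps) / trailer_fps * 100


# Hydro GFX (143FPS) vs. GTX 1080 FE (135FPS) at 1440p/High.
print(f"FE is {pct_slower(143, 135):.2f}% slower; "
      f"Hydro GFX is {pct_faster(143, 135):.2f}% faster")
```

The two readings differ by about a third of a point here, which is within the “roughly 5-6%” band the article reports across games.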

Metro: Last Light – Hydro GFX 1080 vs. Founders Edition, MSI Gaming X, etc.

Metro: Last Light remains the pinnacle of reproducible performance results. That said, we recently re-benched the 1080 with MLL (FE & Gaming X) following some driver updates that resolved 0.1% low metrics.

The Corsair Hydro GFX again sits atop the single card performance results, with 137FPS AVG at 1080p (+7FPS over the 1080 FE), 97FPS AVG at 1440p, and 66.3FPS AVG at 4K. At 4K, performance is mostly identical to the 1080 Gaming X card.

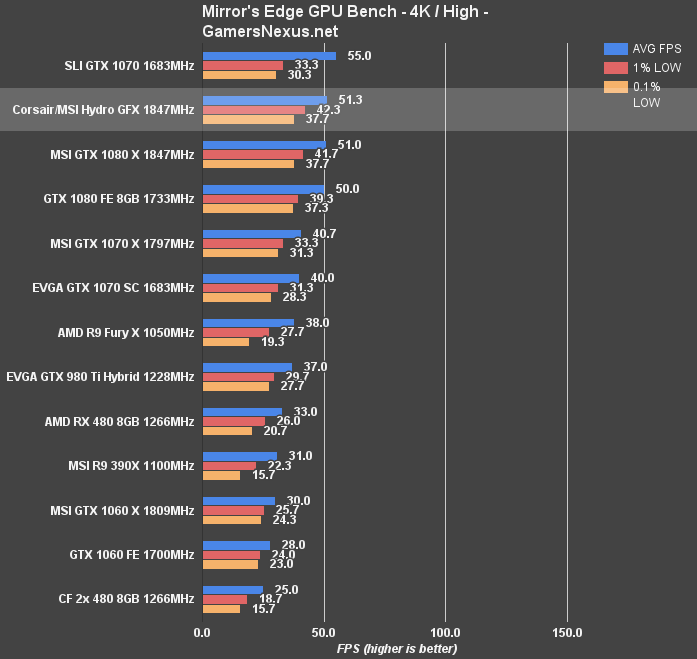

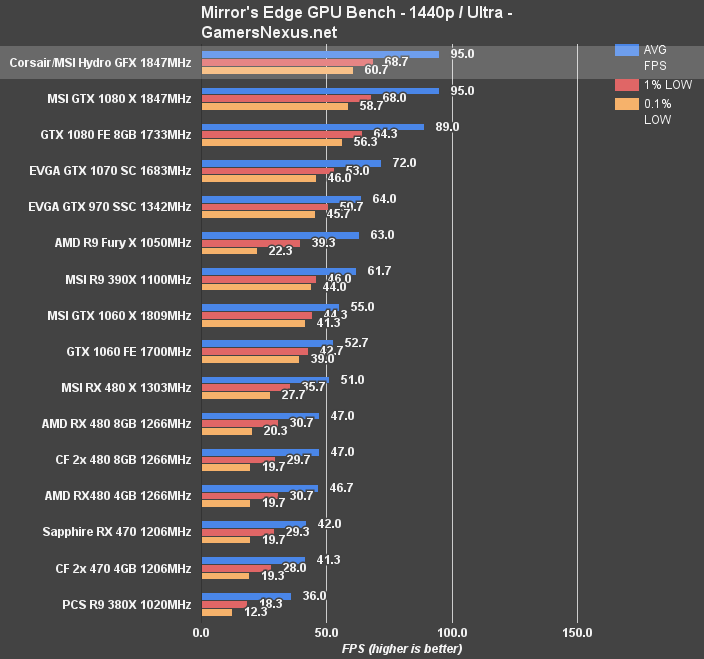

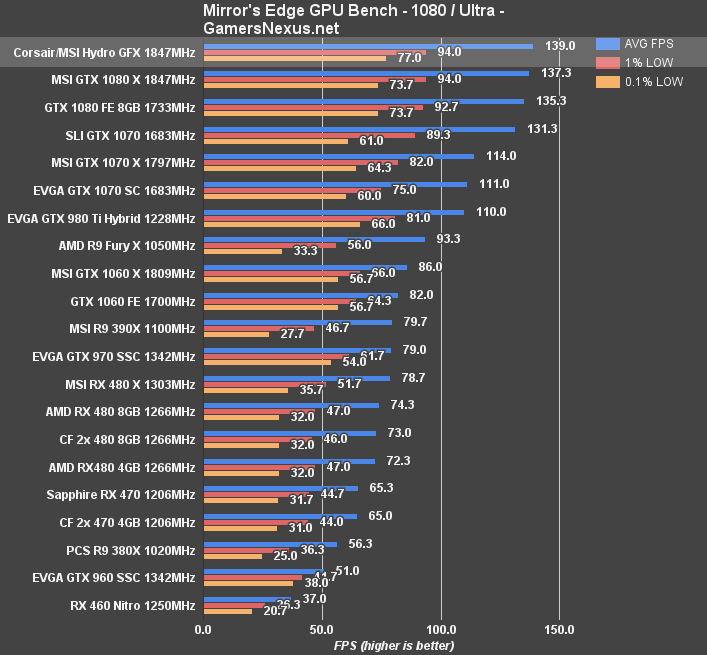

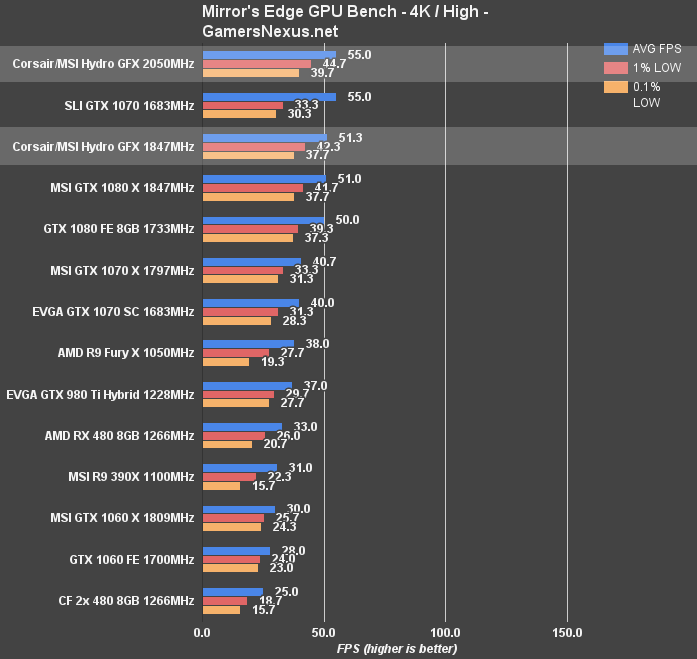

Mirror's Edge Catalyst – Hydro GFX 1080 Benchmark

Mirror's Edge Catalyst is among the more intensive games presently out, and makes heavy use of Post-FX for its visual edge. At 4K High, the Hydro GFX doesn't quite meet 60FPS – but neither does any other configuration. We're at 51FPS AVG with 42FPS 1% lows and 38FPS 0.1% lows, trailed by the 1080 Gaming X at roughly the same output, and then the 1080 FE at 1FPS lower, or 3FPS lower in the 1% metric. As expected, there's no real difference here between cards, since it's all the same silicon and similar clock-rates.

1440p posts the Hydro GFX and 1080 Gaming X at 95FPS AVG, with the 1080 FE trailing by about 6%. This feeds back into our original review of the 1080 FE, where we recommended against its purchase due to thermal limits, a lower clock, and comparatively poor value.

At 1080p, we see performance in the 140FPS range for the Hydro GFX, with the rest trailing a few FPS behind.

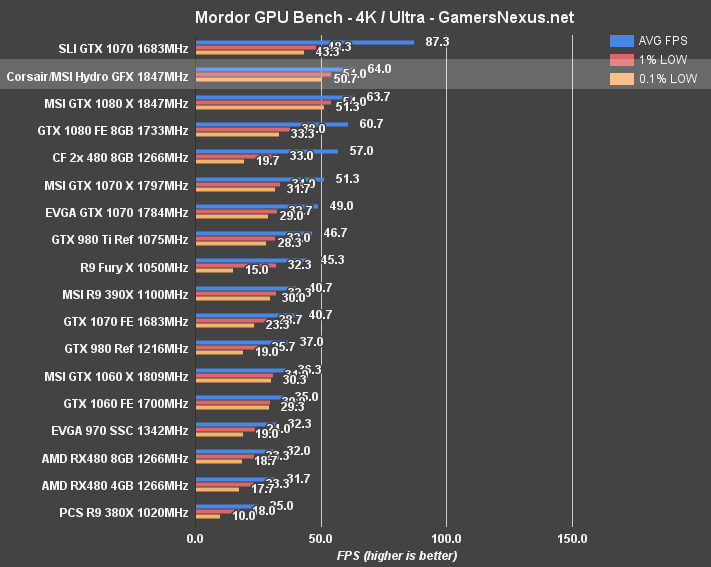

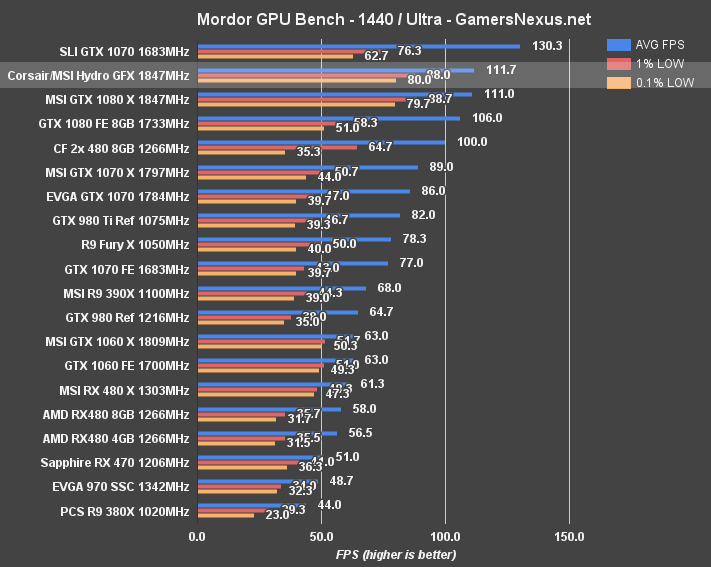

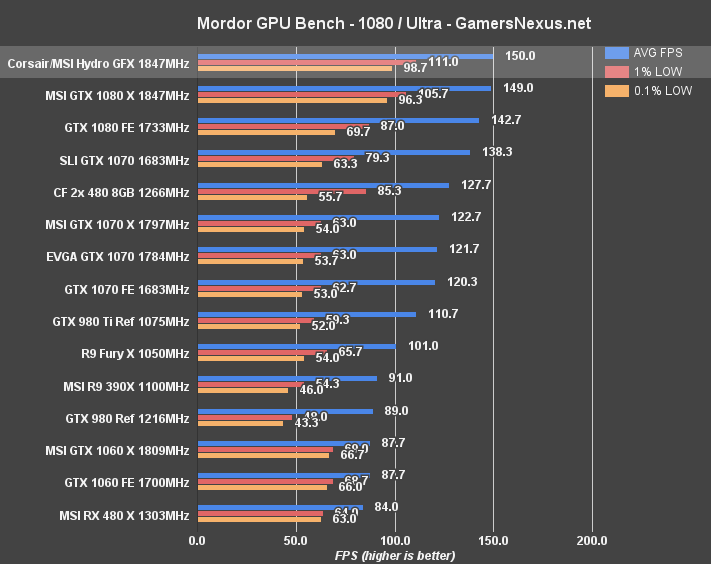

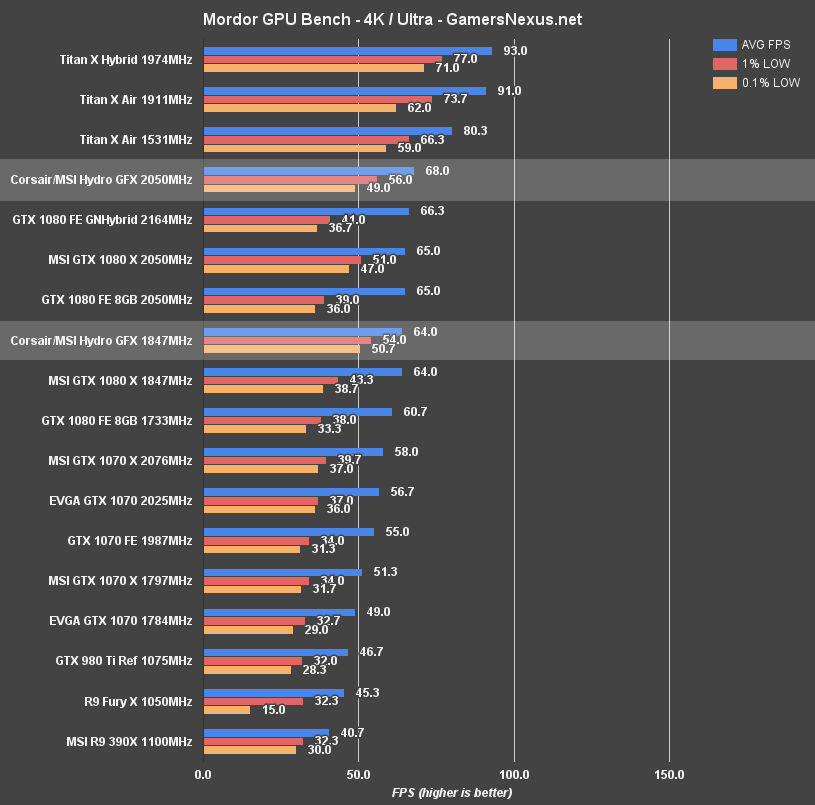

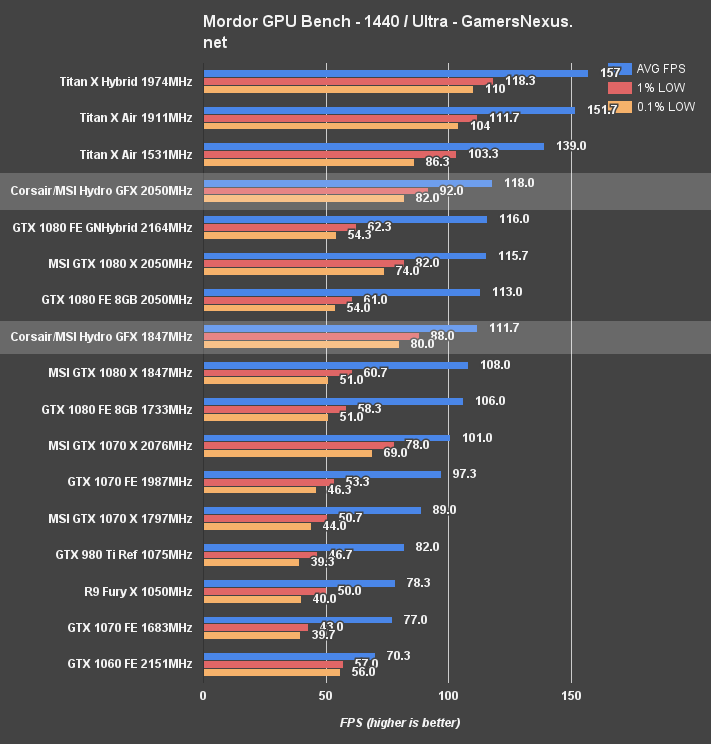

Shadow of Mordor – Hydro GFX 1080 Benchmark at 4K, 1440, 1080p

Shadow of Mordor posts 64FPS AVG at 4K for the Hydro GFX, effectively identical to the Gaming X, but maintains a ~3.3FPS lead over the GTX 1080 FE card. This proportionately scales down to 1440p and 1080p, where the Corsair Hydro GFX 1080 – as with the other tests here – claims top slot for single GPU performance.

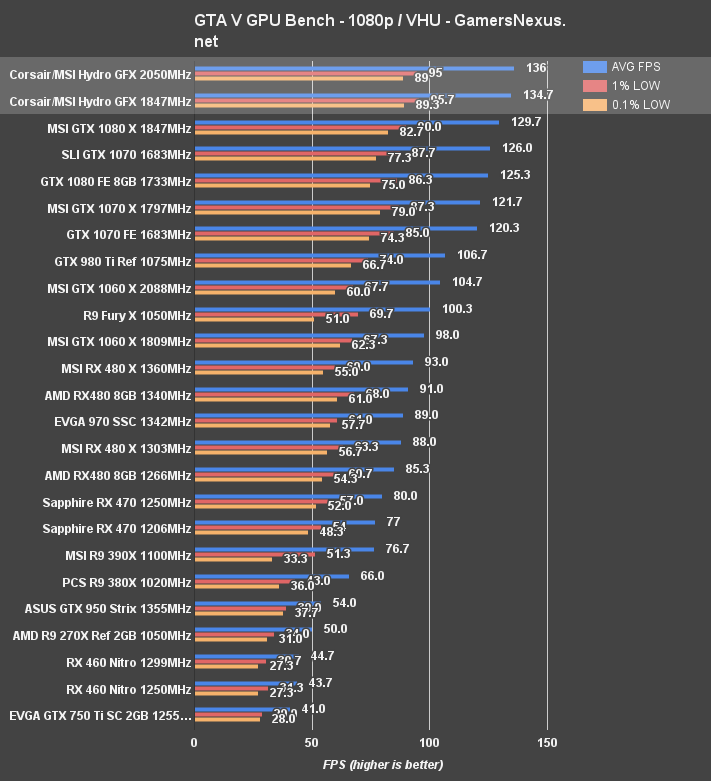

GTA V Benchmark – Hydro GFX 1080 vs. Reference, MSI Gaming X, etc.

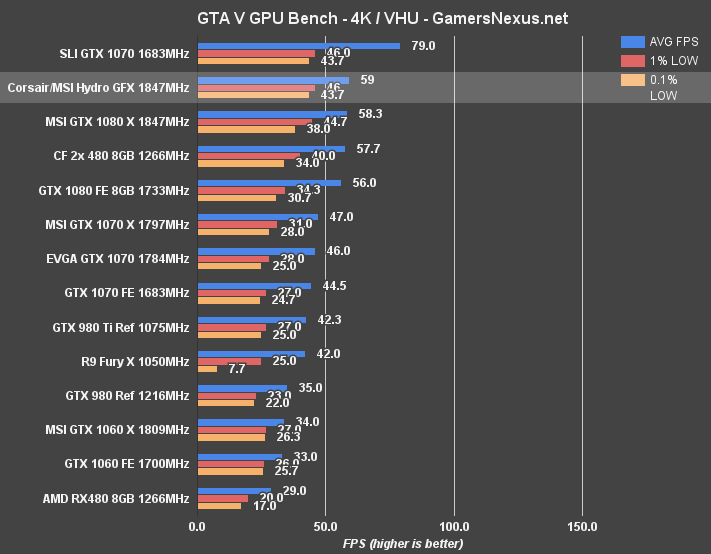

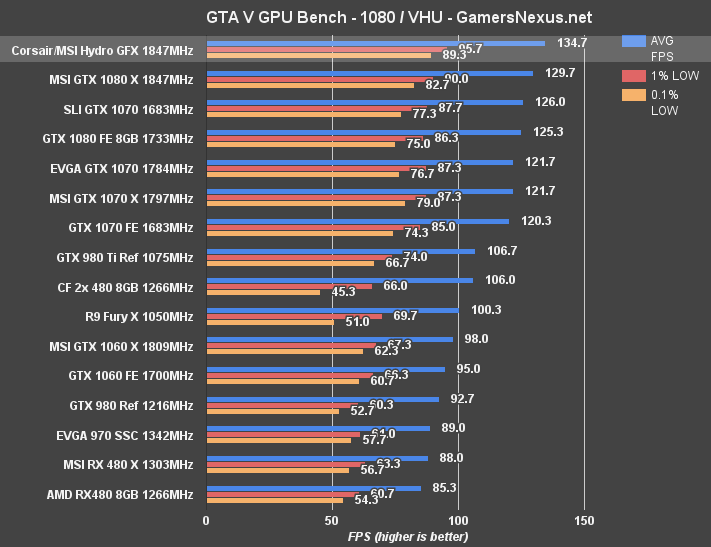

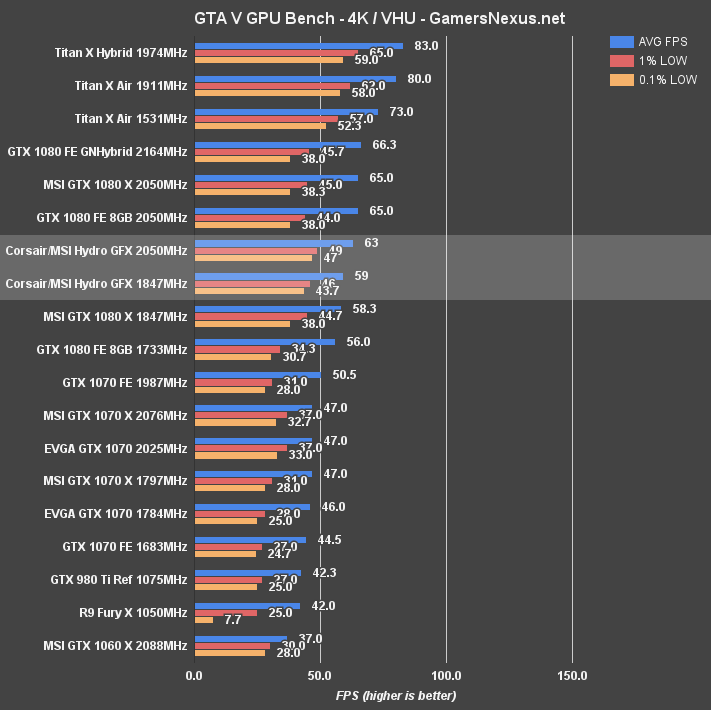

Moving on to GTA V, we see the Hydro GFX performing at 59FPS AVG with 4K ultra settings, with 1% and 0.1% lows of 46FPS and 43.7FPS. That plants the Corsair and MSI card just above the MSI 1080 Gaming X, at 58.3FPS AVG with imperceptibly lower low FPS. The FE card sits at 56FPS, about 5% behind the Hydro GFX. A step down, the 1070 Gaming X sits at 47FPS AVG, with significantly lower 0.1% low values.

At 1080p, the Hydro GFX is capable of pushing about 135FPS AVG with tightly timed lows. The GTX 1080 Gaming X sits at 129.7FPS AVG, or a change of about 4%. The GTX 1080 FE clocks at 125FPS AVG. We're starting to bump against the CPU limit in this test, though.

The Division Benchmark – Corsair Hydro GFX / MSI Seahawk 1080

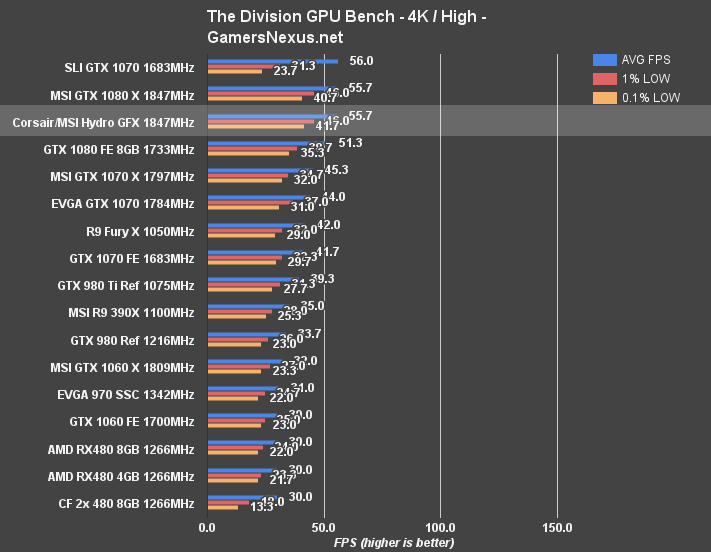

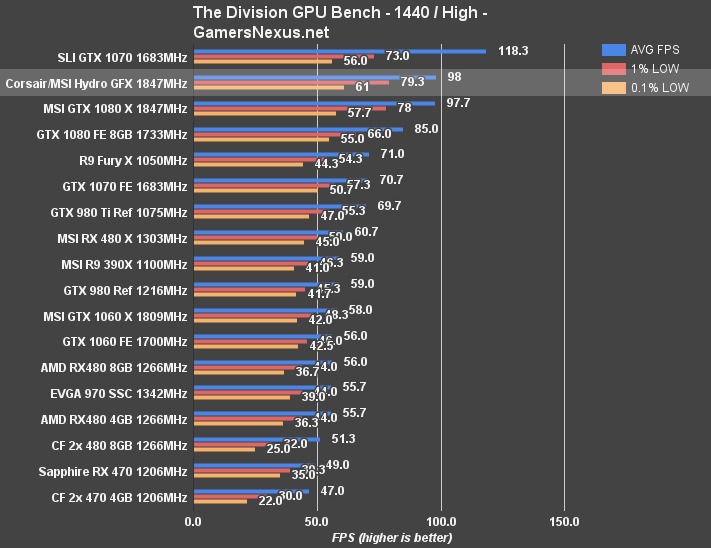

We “only” tested The Division at 1440p and 4K, leaving 1080p untested for this one title. The Gaming X and Hydro GFX again perform mostly identically, which makes sense given their similar clock-rates.

Ashes of the Singularity – Corsair Hydro GFX

Moving on to Ashes, this will be our last FPS benchmark before overclocking results.

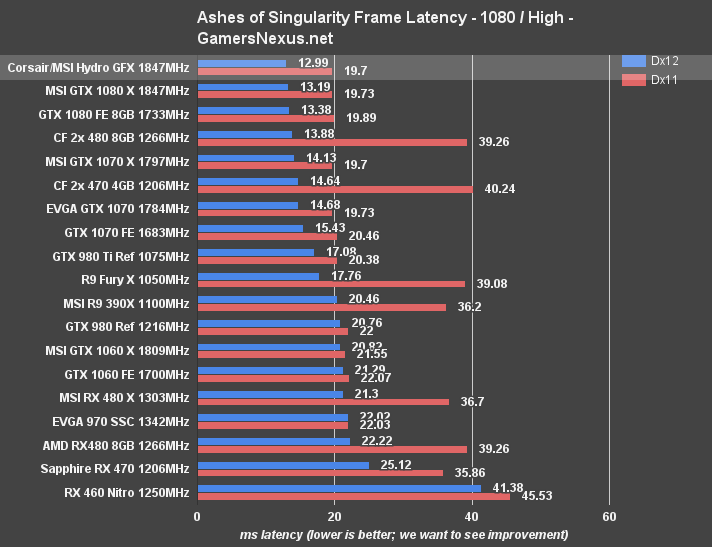

First looking at average millisecond latency between frames, the Corsair Hydro GFX is chart-topping in Ashes of the Singularity for its AVG latency in Dx12, posting 12.99ms between frames. The MSI Gaming X sits at 13.19ms, with the FE card at 13.38. CrossFire RX 480s is the next trailing configuration, at 13.88ms average in Dx12 – but with way worse Dx11 frametimes, partially an artifact of multi-GPU.

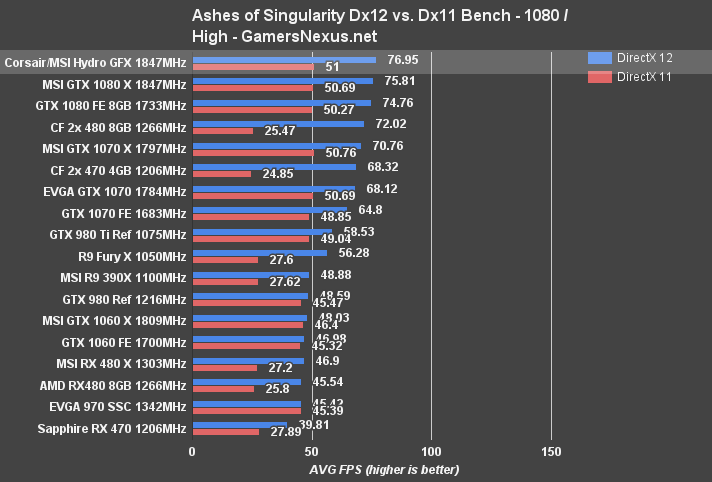

Looking instead at FPS, the 1080 Hydro GFX at 1080p is posting 76.95FPS AVG for DirectX 12, a 25FPS gain over Dx11 performance. The same scaling is shown for the 1080 FE, though the card is about 2FPS slower than the Hydro GFX.

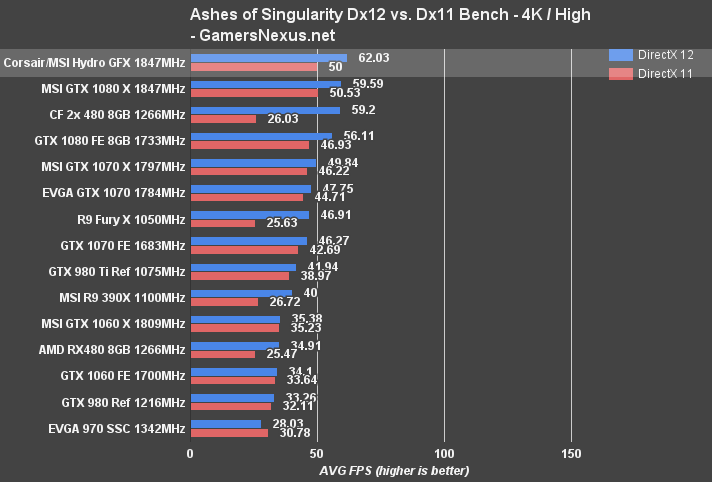

Moving to 4K/High shows the Hydro GFX just above 60FPS, at 62.03FPS. The 1080 Gaming X is a couple FPS lower at 59.6FPS AVG, with the CrossFire cards slotting in between the 1080 FE and 1080 Gaming X.

For DOOM (OpenGL & Vulkan) testing, see some of our launch-day reviews.

Overclocking the Corsair Hydro GFX GTX 1080 (“Seahawk”)

Overclocking the Corsair Hydro GFX is the same as overclocking the GTX 1080 FE, 1070 FE, and 1060 FE. Check the respective links for more depth on that. For the basics, here's a recap from a previous content piece:

“Overclocking the GTX 1060 is the same as the previous Pascal cards. We bypass the voltage-frequency curve as we find it annoying, and instead take the manual approach. We'll talk more about how to OC the 1060 in separate content in the near future.

As for the results, we've got the below stepping table that shows progression of the FE overclock. The peak clock just shows the spikes, and can be mostly ignored. 'Core Clock (MHz)' is the column you want to look at, as it more accurately represents the 'resting' clock-rate when gaming. Spikes to the high clock-rate can and do manifest themselves as slight performance increases when present. This is why two same-clocked cards may have marginally different FPS results.”

Let's look at the Hydro GFX stepping:

| Peak Core CLK (MHz) | Core Clock (MHz) | Core Offset (MHz) | Mem CLK (MHz) | Mem Offset (MHz) | Power Target (%) | Peak vCore (V) | Fan Target (%) | 5m Test | 60m Endurance |
|---|---|---|---|---|---|---|---|---|---|
| 1949 | 1898 | 0 | 1251.5 | 0 | 100 | 1 | 33 (Auto) | P | P |
| 1949 | 1911 | 0 | 1251.5 | 0 | 105 | 1 | 33 (Auto) | P | P |
| 1974 | 1936 | 50 | 1251.5 | 0 | 105 | 1.012 | 33 (Auto) | P | P |
| 2012 | 1961.5 | 100 | 1251.5 | 0 | 105 | 1.012 | 33 (Auto) | P | - |
| 2037.5 | 1987 | 125 | 1251.5 | 0 | 105 | 1.012 | 48 (Auto) | P | - |
| 2062.5 | 2012 | 175 | 1251.5 | 0 | 105 | 1.012 | 50 (Auto) | P | - |
| 2075.5 | 2012 | 200 | 1251.5 | 0 | 105 | 1.012 | 54 (Auto) | F (artifacts) | - |
| 2176.5 | 2012 | 200 | 1251.5 | 0 | 105 | 1.05 | 54 (Auto) | F (instant system crash) | - |
| 2126 | 2050 | 175 | 1410.8 | 650 | 105 | 1.05 | 54 (Auto) | P | P |
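The stepping above follows a simple pattern: raise the core offset, run the 5-minute test, and back off to the last passing step on the first failure, then confirm the final settings with a 60-minute endurance run. The tuning itself is done by hand in Afterburner; this sketch models only the decision logic, and `run_stress_test` is a hypothetical callback standing in for “apply the offset and run the test.”

```python
from typing import Callable, Optional


def find_max_stable_offset(run_stress_test: Callable[[int], bool],
                           offsets_mhz: list[int]) -> Optional[int]:
    """Walk core-clock offsets upward and return the last one that passed.

    run_stress_test is hypothetical: apply the offset, run a 5-minute load,
    and return True if no artifacts or crashes occurred.
    """
    last_pass = None
    for offset in offsets_mhz:
        if run_stress_test(offset):
            last_pass = offset
        else:
            break  # first failure: stop and back off to the last passing step
    return last_pass


# Simulated card that artifacts at +200MHz, mirroring the table above.
steps = [0, 50, 100, 125, 175, 200]
print(find_max_stable_offset(lambda offset: offset < 200, steps))
```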

We're landing at ~2050MHz when looking at the average boosted value, with a peak of 2126MHz (but that's only when thermal/power limits allow – in this case, power/voltage, as thermal is solved for). The card gains a few extra FPS from the push – more significant in clock-dependent games than those which are not – and that can be viewed below:

The overclocking potential isn't tremendous, but considering this is just an FE board, it's not all that bad. We hit 2168MHz on our FE card after adding liquid to it, but were stuck around 2030-2050MHz with the pre-liquid variant. Some of this difference is likely binning or luck of the draw.

Corsair Hydro GFX & MSI Seahawk GTX 1080 Conclusion

At $750, Corsair and MSI are asking a lot for the Seahawk / Hydro GFX – but it does include a liquid cooler, so that's where a lot of the cost comes from. And that's reasonable, too – you're paying for improved thermals. We do think the shroud could use some serious improvement, though, and the PCB – although perhaps fine for its target market – certainly isn't special. It's an FE board. We've seen those.

Gaming performance is about the same as every other GTX 1080, when it comes to out-of-box FPS. It's the thermals that are improved, and that's a big deal for the right setup. Power management and VBIOS limitations this generation seem to diminish the value of some higher-end custom PCBs and VRMs, unless you're doing hard mods and volt mods, so the PCB becomes somewhat less significant than in previous generations. It's still important, but it's not the only factor in card selection. In Corsair/MSI's favor, this means their use of the relatively basic 5+1 FE board is largely a non-issue, though overclocking is still somewhat limited. This certainly isn't a board for extreme OCs (with PCB mods).

The card mostly maintains a higher average clock-rate than its FE counterpart, though exhibits the same clock drop behavior as we've seen in other Pascal GPUs. We are still researching this, and are in discussion with nVidia for further validation. Here's a reminder:

That's discussed in the endurance section, if you missed it.

Still, the Hydro GFX is ultimately the lowest-tier MSI GTX 1080 with a CLC strapped to it. That's not to say the CLC is insignificant, though.

The Hydro GFX solves for thermal issues handily, and operates better than our own DIY GN Hybrid card that uses the EVGA Hybrid liquid cooler. This has us curious about the future of EVGA's FTW Hybrid, which we've got on the bench today for testing. We'll soon be discussing how that card performs versus Corsair's solution. We can definitively state that Corsair's cooling solution for the Hydro GFX GTX 1080 has impressed us. The thermals are superior to all other big (and big-ish) GPUs on our bench, with the closest competition coming from something we built in-house.

It's less impressive that the Hydro GFX is the highest performer of the single GPUs currently on our bench, but still noteworthy. “Less impressive” because, of course, it's really nVidia's work – the GP104-400 chip performs as it should, within a ~5-6% range of the FE card, and is pushing a few extra frames thanks to the pre-overclock. That's not really news to anyone, nor is it innovative; MSI and Corsair are pre-overclocking boost to 1847MHz (OC Mode, which was enabled on ours by default), and that's a reasonable gain over the 1733MHz advertised boost of the FE card.

This comparison includes the MSI GTX 1080 Gaming X ($730) that we previously tested. The Hydro GFX outperforms the Founders Edition card by about 5-6% in most games, further validating our position that the reference cooler designs from both AMD and nVidia are generally best forgone in favor of an AIB partner model, and it doesn't have to be liquid-cooled, either. You could grab something cheaper than reference, but with a far-and-away superior cooler.

For these characteristics, the Seahawk & Hydro GFX fusion takes our "Best of Bench" award for thermals and FPS performance on a single GPU:

As for the Hydro GFX, for now, it's the best performing single GPU on the bench, and has the lowest thermals of all the big GPUs we've tested this year. Wait for the EVGA Hybrid review and comparison for more information, as that card has a custom PCB, RGB LEDs, and an axial fan. For today, this card has been validated as high performing. $750 does feel a little bit steep, though. This generation is running a little more expensive than seems necessary, and we'd rather place the high-end cards closer to the $700-$730 mark.

Editorial, Test Lead: Steve “Lelldorianx” Burke

Video Production, Photography: Andrew “ColossalCake” Coleman

Sr. Test Technician: Mike “Budekai?” Gaglione

Test Technician: Andie “Draguelian” Burke